Have you ever wondered how big tech companies like Netflix, Amazon, or Google update and scale their apps so quickly?

Or how the same application can run on different computers without any glitches?

A big part of the answer is simpler than you think: Docker.

Still Not Using Docker in 2025? You’re Paying for It.

If you’re skipping containerization, you’re likely overspending by up to 70% on cloud costs and deploying features 5x slower than your competitors. That’s not just inefficient—it’s a serious business risk.

The average enterprise loses $1.25M every year due to inconsistent, outdated deployment methods.

At Bitcot, our DevOps consulting services help companies slash infrastructure costs by 60% and speed up deployment cycles by 85%—with ROI in as little as 6 weeks.

Docker isn’t optional anymore.

By 2026, 95% of businesses will run containerized apps. The only question: will you lead or lag?

In this blog, we’ll break down how Docker solves major deployment challenges, why waiting is costing you more than you think, and how both tech and non-tech leaders can drive fast ROI with the right DevOps strategy.

What is Docker? Understanding the Container Revolution

At its core, Docker is an open-source platform that packages applications and their dependencies into standardized units called containers. These containers are lightweight, portable, and run consistently across any environment—from a developer’s laptop to test servers to production cloud environments.

Think of Docker containers like shipping containers for code. Just as shipping containers standardized global logistics by providing a consistent unit regardless of contents, Docker containers standardize software deployment by packaging everything an application needs to run into a single, portable unit.

Unlike traditional virtual machines that require a full operating system for each instance, Docker containers share the host system’s OS kernel, making them significantly more efficient. This fundamental difference is why Docker has become the foundation of modern cloud computing strategies.
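
One way to observe the shared kernel for yourself, sketched here under the assumption of a Linux host with Docker Engine installed: the short script below reports the kernel release it sees. Run it directly on the host and then inside a container, and it should print the same release, because the container has no kernel of its own. On Docker Desktop for macOS or Windows, containers run inside a small Linux VM, so the value reflects that VM's kernel instead. The file name `kernel_report.py` is hypothetical.

```python
import platform


def kernel_report() -> str:
    """Return a one-line description of the kernel this process runs on."""
    return f"{platform.system()} kernel {platform.release()}"


if __name__ == "__main__":
    print(kernel_report())
```

To compare, run `python kernel_report.py` on the host, then `docker run --rm -v "$PWD":/app python:3.12-slim python /app/kernel_report.py` for the container view.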

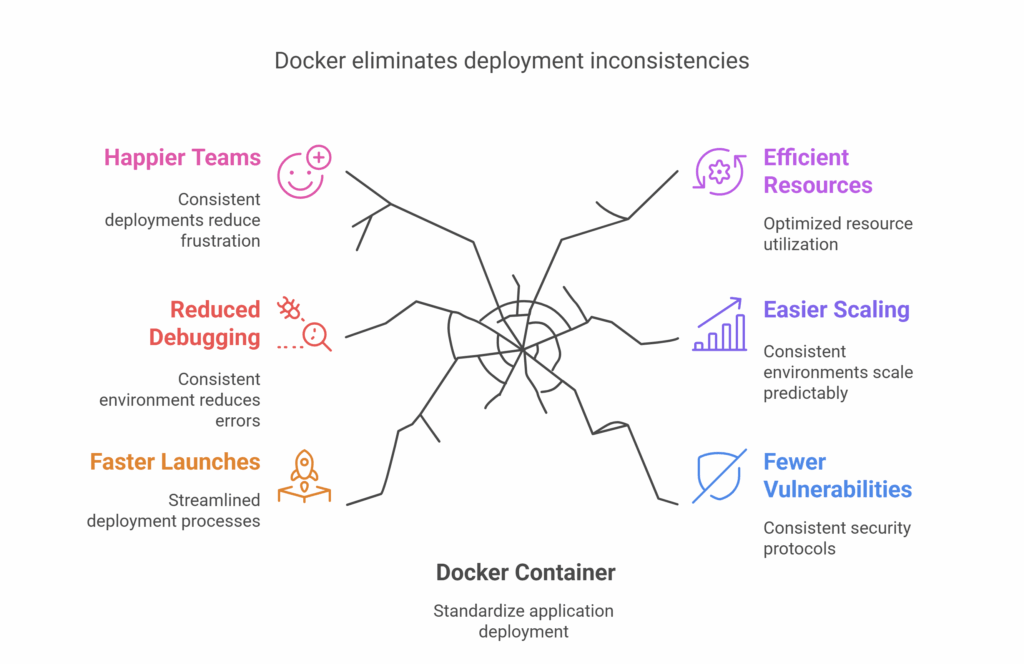

Common Deployment Challenges Docker Solves in 2025

Before containerization technologies like Docker, businesses faced a common nightmare: “It works on my machine” syndrome. Developers would create applications that ran perfectly in their development environment but failed when deployed to testing or production environments.

This inconsistency led to:

- Delayed product launches

- Increased debugging time (and costs)

- Frustrated development teams

- Wasted server resources

- Scaling difficulties

- Security vulnerabilities

If these challenges sound familiar, you’re experiencing what thousands of businesses struggled with before adopting container technology.

Why Virtual Machines and Traditional Deployment Methods Aren’t Enough

The pain deepens when you consider the alternatives. Virtual machines (VMs) were the standard solution before Docker, but they come with significant drawbacks:

VMs require a complete operating system installation for each instance, consuming gigabytes of storage and RAM. This translates to higher cloud computing costs and slower deployment times.

Additionally, managing different development, testing, and production environments becomes increasingly complex as your business grows. Your IT team spends more time solving environment issues than building features that drive revenue.

As businesses accelerate their digital transformation initiatives in 2025, these inefficiencies create competitive disadvantages that can be difficult to overcome. Your competitors who have embraced containerization are likely deploying new features faster and more reliably while reducing their infrastructure costs.

How Docker Containerization Transforms Cloud Computing Efficiency

Because a container carries its own dependencies, the same image runs unchanged on a laptop, a test server, or any cloud provider. Like a standardized shipping box that moves between ships, trucks, and storage facilities without being repacked, a container moves between environments without being rebuilt.

Docker Explained: Core Concepts for Business Leaders

Docker is an open-source platform for building, shipping, and running applications in containers. Scaling and managing those containers across many machines is usually handled by an orchestrator such as Kubernetes. Unlike VMs, Docker containers share the host system’s OS kernel, making them significantly more lightweight and efficient.

A Docker container includes everything an application needs to run:

- The code

- Runtime

- System tools

- System libraries

- Settings

This encapsulation ensures that your application runs the same way, regardless of where it’s deployed – from a developer’s laptop to your production cloud environment.
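
To make the list above concrete, here is a minimal sketch that renders a Dockerfile for a hypothetical Python web app. The base image tag, the `APP_PORT` variable, and the file names `requirements.txt` and `app.py` are assumptions chosen for illustration; a real project would substitute its own. Each comment maps an instruction back to one of the items a container includes.

```python
from textwrap import dedent


def render_dockerfile(base_image: str = "python:3.12-slim", port: int = 8000) -> str:
    """Render a minimal Dockerfile for a hypothetical Python web app."""
    return dedent(f"""\
        # Runtime, system tools, and system libraries come from the base image
        FROM {base_image}
        # Settings: environment variables baked into the image
        ENV APP_PORT={port}
        WORKDIR /app
        # Dependencies: install packages before copying the code
        COPY requirements.txt .
        RUN pip install --no-cache-dir -r requirements.txt
        # The code itself
        COPY . .
        EXPOSE {port}
        CMD ["python", "app.py"]
        """)


if __name__ == "__main__":
    print(render_dockerfile())
```

Saving the printed text as `Dockerfile` next to the application code gives Docker everything it needs to build an image.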

Key Components of Docker:

- Docker Engine: The runtime that builds and runs containers

- Docker Images: Templates containing application code and dependencies

- Docker Containers: Running instances of Docker images

- Dockerfile: Text file with instructions to build a Docker image

- Docker Hub: Cloud repository for sharing and accessing Docker images

- Docker Compose: Tool for defining multi-container applications
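
The components above fit together in a simple lifecycle: a Dockerfile is built into an image, the image is run as a container, and the image is pushed to a registry for others to use. The sketch below only assembles the corresponding Docker CLI commands as lists so the flow is easy to read; it does not execute them. The image name `shop-api:1.0` and the port numbers are hypothetical.

```python
import shlex


def build_command(image: str, context: str = ".") -> list[str]:
    """Dockerfile -> image: build and tag an image from a build context."""
    return ["docker", "build", "-t", image, context]


def run_command(image: str, host_port: int, container_port: int) -> list[str]:
    """Image -> container: start a container in the background."""
    return ["docker", "run", "--detach",
            "--publish", f"{host_port}:{container_port}", image]


def push_command(image: str) -> list[str]:
    """Image -> registry: upload the image (to Docker Hub, for example)."""
    return ["docker", "push", image]


if __name__ == "__main__":
    image = "shop-api:1.0"  # hypothetical image name
    for cmd in (build_command(image), run_command(image, 8080, 8000), push_command(image)):
        print(shlex.join(cmd))
```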

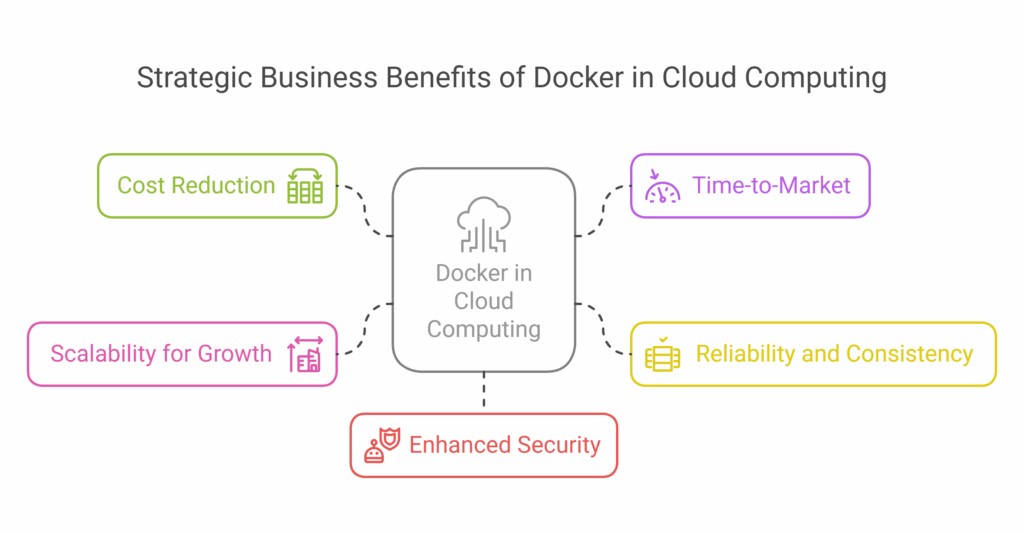

5 Strategic Business Benefits of Docker in Cloud Computing

If you’re a CEO, CTO, or business owner focused on growth and efficiency, Docker offers compelling advantages:

1. Significant Cost Reduction

Docker’s efficiency translates directly to your bottom line. Containers use fewer resources than traditional VMs, allowing you to:

- Run more applications on the same hardware

- Reduce cloud computing costs by 50-70%

- Minimize licensing expenses

- Decrease operational overhead

A 2024 study by Forrester found that enterprises adopting Docker reduced their infrastructure costs by an average of 66% while increasing developer productivity by 43%.
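
To see where the "run more applications on the same hardware" point comes from, here is a back-of-the-envelope sketch. Every number in it is an illustrative assumption, not a measurement: a 64 GB host, an app that needs 1 GB of RAM, roughly 1.5 GB of guest-OS overhead per VM, and roughly 50 MB of overhead per container. Actual savings depend heavily on the workload.

```python
def instances_per_host(host_mb: int, app_mb: int, overhead_mb: int) -> int:
    """How many instances fit in host memory, given per-instance overhead."""
    return host_mb // (app_mb + overhead_mb)


if __name__ == "__main__":
    HOST_MB = 64 * 1024          # assumed host memory
    APP_MB = 1024                # assumed memory the app itself needs
    VM_OVERHEAD_MB = 1536        # assumed guest OS overhead per VM
    CONTAINER_OVERHEAD_MB = 50   # assumed per-container overhead

    vms = instances_per_host(HOST_MB, APP_MB, VM_OVERHEAD_MB)
    containers = instances_per_host(HOST_MB, APP_MB, CONTAINER_OVERHEAD_MB)
    print(f"VMs per host: {vms}, containers per host: {containers}")
```

Under these assumptions the same host fits 25 VM instances or 61 container instances, which is the kind of density gain that drives lower infrastructure bills.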

2. Accelerated Time-to-Market

In today’s competitive landscape, speed matters. Docker enables:

- Faster development cycles

- Streamlined CI/CD pipelines

- Reduced environment setup time

- Quicker onboarding for new developers

This acceleration means your business can respond more rapidly to market changes and customer needs, giving you a decisive competitive advantage.

3. Enhanced Reliability and Consistency

Docker’s “build once, run anywhere” philosophy removes a root cause of many deployment issues:

- Identical environments across development, testing, and production

- Reduced “works on my machine” problems

- Consistent application behavior across cloud providers

- More reliable updates and rollbacks

This consistency leads to fewer production incidents, higher uptime, and improved customer satisfaction.

4. Improved Scalability for Growth

As your business grows, Docker makes scaling your applications seamless:

- Horizontal scaling by adding more container instances

- Integration with orchestration tools like Kubernetes

- Cloud-agnostic deployment for multi-cloud strategies

- Dynamic resource allocation

These capabilities ensure your infrastructure can grow with your business without requiring major architectural changes.
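
Horizontal scaling usually comes down to a small piece of arithmetic. The sketch below follows the formula documented for the Kubernetes Horizontal Pod Autoscaler, which scales replicas in proportion to how far a metric sits from its target. It is a simplified illustration: the real autoscaler also applies a tolerance band and stabilization windows, and the function name and limits here are hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Scale replica count in proportion to metric / target, within limits."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 4 containers averaging 90% CPU against a 60% target
    print(desired_replicas(4, 90, 60))
```

With four containers at 90% average CPU and a 60% target, the calculation asks for six containers; when load drops, the same formula scales back down.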

5. Enhanced Security Through Isolation

Docker’s container architecture provides natural isolation between applications:

- Reduced attack surface

- Better control over dependencies

- Simplified security patching

- Improved compliance management

In an era of increasing cyber threats, this isolation is a meaningful advantage, provided containers are configured and patched properly.

Docker Integration with Kubernetes and Modern Cloud Architecture

In 2025, Docker has become the foundation of modern cloud infrastructure, working seamlessly with:

- Kubernetes: For orchestrating large-scale container deployments

- Microservices Architecture: Breaking applications into manageable, independent services

- Serverless Computing: Supporting function-as-a-service implementations

- DevOps Practices: Enabling continuous integration and deployment

- Multi-Cloud Strategies: Providing consistency across different providers

The synergy between Docker and these technologies creates a flexible, resilient, and efficient foundation for digital business.

Implementing Docker with Bitcot: Client Success Stories and ROI

At Bitcot, we’ve helped numerous businesses transform their operations with Docker-based solutions. Our approach includes:

- Assessing your current infrastructure and application needs

- Creating a containerization strategy tailored to your business goals

- Implementing Docker alongside complementary technologies

- Training your team to maintain and extend your container ecosystem

- Providing ongoing support and optimization

Our clients typically see 40% faster deployment cycles, 60% lower infrastructure costs, and a 99.9% improvement in deployment consistency after implementing our Docker solutions.

Docker Implementation Roadmap: 5 Steps to Containerization Success

If you’re interested in exploring how Docker can benefit your business, here are five practical next steps:

- Assess your current pain points in application deployment and infrastructure management

- Identify a pilot project – a non-critical application that would benefit from containerization

- Connect with experts (like our team at Bitcot) who can guide your Docker implementation

- Develop a migration plan that minimizes disruption to your current operations

- Measure the results to quantify the ROI of your Docker implementation

The right approach depends on your specific business context, but these steps provide a solid foundation for your containerization journey.
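
For the final step, measuring results, the arithmetic is straightforward. The sketch below computes first-year ROI and payback period from two inputs. The figures used are hypothetical placeholders, not client data; plug in your own migration cost and measured savings.

```python
def roi_percent(annual_savings: float, implementation_cost: float) -> float:
    """First-year ROI: net gain divided by cost, as a percentage."""
    return (annual_savings - implementation_cost) / implementation_cost * 100


def payback_months(annual_savings: float, implementation_cost: float) -> float:
    """Months until cumulative savings cover the implementation cost."""
    return implementation_cost / (annual_savings / 12)


if __name__ == "__main__":
    COST = 40_000      # hypothetical one-time migration cost
    SAVINGS = 120_000  # hypothetical annual infrastructure savings
    print(f"ROI: {roi_percent(SAVINGS, COST):.0f}%")
    print(f"Payback: {payback_months(SAVINGS, COST):.1f} months")
```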

Ready to explore how Docker can transform your cloud infrastructure? Contact Bitcot’s cloud experts today for a personalized assessment and roadmap to containerization success.

FAQs

Is Docker the same as virtualization?

No. Traditional virtualization runs a complete operating system on top of a hypervisor for each virtual machine. Docker containers share the host’s OS kernel, making them much more lightweight and efficient than VMs. A VM image typically ranges from hundreds of megabytes to several gigabytes, while a container image can be as small as a few megabytes.

Do I need special hardware to run Docker?

Docker runs on standard 64-bit hardware (both x86-64 and ARM64) with most modern operating systems. Docker Engine on Linux needs no special hardware. Docker Desktop on Windows and macOS runs containers inside a lightweight virtual machine, so hardware virtualization support must be enabled. Beyond that, you need sufficient RAM and storage for your applications. Most business-grade servers and cloud instances easily meet these requirements.

How secure are Docker containers?

Docker provides several security features, including namespace isolation, control groups for resource limitation, and reduced privileges. However, container security requires proper configuration and maintenance. When implemented correctly, Docker can actually enhance your security posture by isolating applications and making security patches more manageable.
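
As a rough illustration of what "proper configuration" can look like, the sketch below assembles a `docker run` command with several hardening flags. Namespace isolation applies by default; the flags add a read-only filesystem, dropped Linux capabilities, a non-root user, and control-group limits on memory, CPU, and process count. The image name, user ID, and limit values are hypothetical, and the right settings depend on what the application actually needs.

```python
import shlex


def hardened_run_command(image: str, memory: str = "256m",
                         cpus: str = "0.5") -> list[str]:
    """Assemble a docker run command with common hardening flags."""
    return [
        "docker", "run", "--rm",
        "--read-only",                          # root filesystem is read-only
        "--cap-drop", "ALL",                    # drop all Linux capabilities
        "--security-opt", "no-new-privileges",  # block privilege escalation
        "--user", "1000:1000",                  # run as a non-root user (assumed ID)
        "--memory", memory,                     # cgroup memory limit
        "--cpus", cpus,                         # cgroup CPU limit
        "--pids-limit", "100",                  # cap the number of processes
        image,
    ]


if __name__ == "__main__":
    print(shlex.join(hardened_run_command("shop-api:1.0")))
```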

Can Docker replace my existing infrastructure?

Docker typically complements rather than replaces existing infrastructure. Many organizations adopt a hybrid approach, containerizing new applications while gradually migrating legacy systems as appropriate. The right strategy depends on your specific business needs and technical constraints.

Is Docker only for large enterprises?

Not at all. While enterprises have widely adopted Docker, it offers significant benefits for businesses of all sizes. Small and medium businesses often see even more dramatic ROI from Docker adoption due to their need to maximize resource efficiency and developer productivity.

How does Docker fit with Kubernetes?

Docker builds and runs containers, while Kubernetes orchestrates them at scale. Think of Docker as creating the standardized units, while Kubernetes manages where, when, and how many of those units run across your infrastructure. Kubernetes runs standard container images, including those built with Docker, through a container runtime such as containerd. They’re complementary technologies that work together to provide a complete container management solution.
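
To show the hand-off in practice, here is a minimal sketch that generates a Kubernetes Deployment manifest for an image built with Docker. The manifest tells Kubernetes which image to run and how many copies to keep alive. The app name, image reference, replica count, and port are hypothetical; the field names follow the `apps/v1` Deployment schema.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int, port: int) -> dict:
    """Build a minimal apps/v1 Deployment for one container image."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,                # how many containers to run
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,          # the image Docker built
                        "ports": [{"containerPort": port}],
                    }],
                },
            },
        },
    }


if __name__ == "__main__":
    manifest = deployment_manifest("shop-api", "registry.example.com/shop-api:1.0", 3, 8000)
    print(json.dumps(manifest, indent=2))
```

Saving the output as `deployment.json` and running `kubectl apply -f deployment.json` would ask the cluster to keep three copies of the container running.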