Key Takeaways

- AI readiness is a prerequisite, not a formality – skipping it is the leading cause of failed enterprise AI initiatives.

- Only 39% of organizations report any enterprise-level EBIT impact from AI; the gap is almost always infrastructure and governance, not model quality.

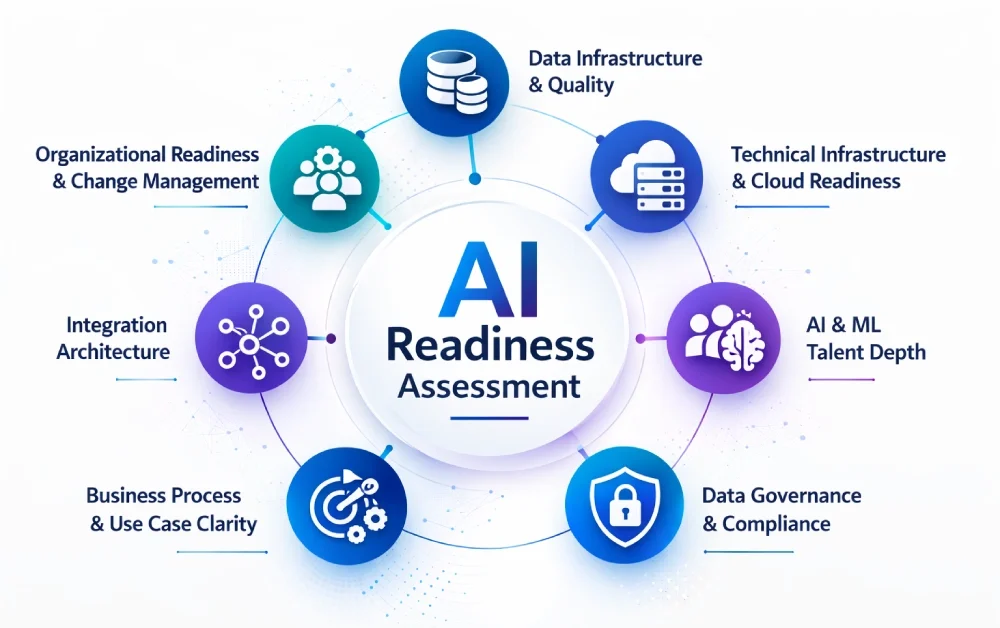

- A proper assessment covers seven dimensions: data, infrastructure, talent, governance, use case clarity, integration, and change management.

- Projects with formal data readiness assessments succeed at a 47% rate – versus just 14% without one.

- The readiness assessment creates the pre-AI benchmark without which ROI cannot be measured or defended.

- Most organizations are at Stage 1 or 2 on the maturity curve – the framework below tells you exactly what needs to change before you scale.

Most companies aren’t failing at AI because they chose the wrong model.

They’re failing because they started building before they knew what they were building on.

Here’s what that actually looks like in practice.

A Fortune 500 retailer burns $2.3M on an AI personalization engine. Eighteen months later, it’s offline. Why? Their customer data lived in four disconnected systems with no unified schema. The model had nothing clean to learn from.

A Series B SaaS company integrates a generative AI assistant into their product. Churn drops initially. Then hallucinations start appearing in customer-facing outputs. The support team is overwhelmed. The CTO is fielding calls from the board.

These aren’t edge cases. McKinsey’s State of AI 2025 report – surveying 1,993 executives across 105 countries – found that only 39% of organizations report any enterprise-level EBIT impact from AI, and nearly two-thirds remain stuck in the pilot or experimentation phase.

BCG’s Build for the Future 2025 global study of 1,250+ firms adds a sharper data point: 60% of companies report minimal revenue and cost gains despite substantial AI investment. For American businesses pouring billions into AI infrastructure, that gap is no longer acceptable.

The root cause is almost always the same: organizations make implementation decisions before they’ve made readiness decisions. The problem isn’t bad technology. It’s a skipped step: the AI readiness assessment.

If you’re a CTO, VP of Engineering, or product leader asking “is AI integration worth it without clean data?” or “how do we know if we’re actually ready to implement AI?” – this is the framework you need before writing a single line of ML code.

What Is an AI Readiness Assessment and Why Does It Matter in 2026

You are accountable for the outcome. That’s the reality of leading AI adoption inside a scaling organization.

An AI readiness assessment is a structured evaluation of your organization’s technical, operational, and strategic capacity to implement, scale, and govern AI systems effectively. It sits at the front end of any serious AI development services engagement – not as a formality, but as the diagnostic that determines whether your foundation can support what you’re about to build.

It is not a vendor questionnaire. It is not a checklist you hand to a junior analyst.

It is a diagnostic. Done correctly, it tells you exactly where your infrastructure will break under AI workloads, which datasets are too dirty to train on, what governance gaps will expose you to compliance risk, and whether your internal team has the skills to maintain what you’re about to build.

In 2026, the stakes are higher than ever. Generative AI, agentic AI workflows, and real-time ML inference are no longer experimental. They are production requirements in competitive SaaS, FinTech, healthcare, and eCommerce markets across the United States and beyond. Companies that skip the assessment phase are making a $500K bet on an unvalidated foundation.

The question isn’t whether to assess. The question is how thoroughly – and where to start.

Understanding AI Readiness Maturity Levels

Before diving into the seven assessment dimensions, it helps to know where your organization lands on the readiness spectrum.

Most enterprises don’t fall neatly into “ready” or “not ready.” They exist on a maturity curve. Understanding that curve is the starting point for any credible AI implementation strategy.

Infosys’s Enterprise AI Readiness Radar, which surveyed 1,500 companies globally, found that only about 15% of companies were confident that their AI projects had the foundational resources and policy alignment needed for successful execution.

North America tends to be slightly ahead of other regions on most AI adoption metrics – yet the value gap persists even here, confirming that adoption and readiness are not the same thing.

Think of readiness across four stages:

Stage 1 – Exploring: No formal AI strategy. Data is siloed. Leadership is interested but uncommitted. AI exists as a line item in the innovation budget, not in production.

Stage 2 – Experimenting: POCs are running. One or two use cases are in development. Data infrastructure is being assessed. Skills gaps are becoming visible.

Stage 3 – Scaling: At least one AI system is in production. Governance frameworks are forming. There is an executive sponsor with accountability and budget authority.

Stage 4 – Optimizing: AI is embedded across multiple business functions. Models are monitored, retrained, and improved continuously. ROI is measured and reported at the board level.

McKinsey’s 2025 research makes the infrastructure dependency clear: without reliable data, modern architecture, and governance controls in place, early AI agent deployments consistently hit performance and trust barriers – regardless of how sophisticated the model itself is.

Knowing your maturity stage shapes every subsequent decision – which use cases to prioritize, how to staff the initiative, and whether to build, buy, or partner. For organizations actively pursuing AI transformation, this staging framework is also what separates a phased, compounding strategy from a scattered, high-burn experiment.

Most organizations reading this are at Stage 1 or 2. The seven dimensions below tell you exactly what needs to change before you move to Stage 3. Work through each one honestly – the gaps you find here are the ones that determine whether your AI initiative compounds or collapses.

The 7 Core Dimensions Every AI Readiness Assessment Must Cover

1. Data Infrastructure and Quality

This is where most AI initiatives die quietly.

AI models are only as good as the data they consume. Before any architecture conversation, you need a clear picture of your current data state. Weak data pipeline architecture is the most common root cause of failed AI deployments, ahead of budget overruns, skills gaps, and model selection errors. Deloitte’s 2025 AI adoption research points the same way: data quality issues were the most-cited reason for AI project abandonment, named in 38% of cases tracked across US and global enterprises.

Ask your team these questions:

- Where does your operational data live? (CRM, ERP, data warehouse, raw S3 buckets?)

- Is your data labeled, versioned, and schema-consistent?

- What percentage of your historical records are complete?

- Do you have PII scattered across unstructured data stores?

- Can you trace data lineage from ingestion to output?

The hard truth: if you’re running Salesforce, a legacy ERP, and three SaaS tools with no centralized data layer, you don’t have an AI problem yet. You have a data architecture problem. Fix that first.

Actionable tip: Run a data completeness audit across your top three operational systems before any AI vendor conversation. If completeness falls below 85% on your primary dataset, prioritize a data remediation sprint before model scoping begins.
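A minimal sketch of that completeness audit, assuming "completeness" means the share of records in which every required field is populated. The field names, sample rows, and the list of values treated as missing are hypothetical; only the 85% threshold comes from the tip above.

```python
from typing import Mapping, Sequence

# Values treated as "missing" (an assumption; adjust to your systems' conventions).
MISSING = (None, "", "N/A", "null")


def completeness(records: Sequence[Mapping[str, object]],
                 required_fields: Sequence[str]) -> float:
    """Fraction of records in which every required field is populated."""
    if not records:
        return 0.0
    complete = sum(
        1 for record in records
        if all(record.get(name) not in MISSING for name in required_fields)
    )
    return complete / len(records)


def needs_remediation(score: float, threshold: float = 0.85) -> bool:
    """True when completeness falls below the 85% bar from the tip above."""
    return score < threshold


# Hypothetical sample rows, for example from a CRM export.
crm_rows = [
    {"customer_id": 1, "email": "a@example.com", "region": "US"},
    {"customer_id": 2, "email": "", "region": "US"},
    {"customer_id": 3, "email": "c@example.com", "region": None},
    {"customer_id": 4, "email": "d@example.com", "region": "EU"},
]

score = completeness(crm_rows, ["customer_id", "email", "region"])
print(f"completeness={score:.0%}, remediate={needs_remediation(score)}")
```

Running the same function against each of your top three systems gives a comparable number per system, which is usually enough to decide where a remediation sprint should start.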

Organizations that invest in AI-powered data pipelines before training their first model typically move from POC to production 3x faster than those that don’t. That’s not a soft claim. That’s an architecture reality.

Data is the foundation. But a clean foundation means nothing if the infrastructure sitting on top of it can’t handle the load.

2. Technical Infrastructure and Cloud Readiness

So your data is clean. Can your infrastructure actually serve a model at production scale?

Running large language models or real-time inference pipelines requires machine learning infrastructure that most on-premise and early-cloud setups weren’t designed to handle. This is the gap that turns a successful POC into a failed production deployment.

Key evaluation areas:

- Are your compute environments GPU-accessible when needed?

- Do you have auto-scaling configured at the container or function layer?

- Are you on a modern cloud-native stack (AWS, GCP, Azure) or still managing bare-metal servers?

- What does your current CI/CD pipeline look like? Can it support model versioning and rollback?

- Is your API layer capable of handling inference latency requirements (typically sub-200ms for real-time applications)?

If you’re still asking “how do I scale a Node.js API on AWS to support ML inference?” – that’s an infrastructure gap that surfaces during assessment, not after a failed deployment.

Actionable tip: Run a load simulation against your current API layer at 3x your expected inference volume. If response times degrade past 500ms, you have a cloud architecture gap to resolve before any model goes near production.
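The pass/fail logic of that load simulation can be sketched as follows. This is an illustration under stated assumptions, not a load-testing tool: it assumes you have already collected response times from whatever load generator you use, and it judges "degrade past 500ms" at the 95th percentile, which is our choice rather than something the tip specifies. The sample latencies and the 40 requests-per-second figure are hypothetical.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of samples, for pct in (0, 100]."""
    if not samples:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def target_load(expected_rps: float, multiplier: float = 3.0) -> float:
    """Request rate to simulate: 3x the expected inference volume."""
    return expected_rps * multiplier


def has_architecture_gap(latencies_ms: Sequence[float],
                         threshold_ms: float = 500.0,
                         pct: float = 95.0) -> bool:
    """True when the chosen percentile exceeds the 500ms threshold."""
    return percentile(latencies_ms, pct) > threshold_ms


# Hypothetical response times (ms) recorded while driving 3x load.
observed_ms = [120, 140, 180, 210, 250, 300, 320, 410, 480, 650]

print("simulate at", target_load(40), "requests/sec")
print("p95 latency:", percentile(observed_ms, 95), "ms")
print("architecture gap:", has_architecture_gap(observed_ms))
```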

US healthcare platforms have an added layer here. HIPAA-compliant architectures for generative AI integration require private endpoints, audit logging, and encryption at rest and in transit – all before any model touches patient data.

Infrastructure answers the question of “can we run it.” But dimension three answers a harder question: who maintains it once it’s running?

3. AI and ML Talent Depth

This is the gap nobody wants to discuss in board meetings.

There is a significant difference between a team that uses AI tools and a team that can build, monitor, and iterate on AI systems in production. The distinction matters enormously – because the failure mode for each is completely different.

Your assessment should map:

- Do you have ML engineers, or only software engineers who’ve completed a few Coursera courses?

- Is there a dedicated data scientist role, or is that work absorbed by whoever has bandwidth?

- Who owns model monitoring post-deployment?

- Does your team understand bias detection, model drift, and retraining triggers?

- Can your DevOps team support MLOps workflows?

If the honest answer is “we’re mostly full-stack engineers who are excited about AI” – that’s a valid starting point. But it shapes which implementation path makes sense: build internally, partner with a senior engineering team, or adopt a managed AI platform with guardrails.

Actionable tip: Map your current team against three roles: model builder, model evaluator, and model operator. If any of the three is unassigned or informal, you have a talent structure gap that will surface as a production reliability problem within 90 days of launch.
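As a rough illustration, the three-role mapping can be kept as a simple structure and checked for unowned roles. The role keys and the names in the example are hypothetical.

```python
from typing import Dict, List

# The three roles named in the tip above.
REQUIRED_ROLES = ("model_builder", "model_evaluator", "model_operator")


def talent_gaps(assignments: Dict[str, List[str]]) -> List[str]:
    """Return the required roles that have no named owner."""
    return [role for role in REQUIRED_ROLES if not assignments.get(role)]


# Hypothetical team map: one role staffed, one empty, one never assigned.
team = {
    "model_builder": ["A. Rivera"],
    "model_evaluator": [],
}

print(talent_gaps(team))
```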

Skipping this assessment and staffing a team incorrectly leads to deployment delays, model decay, and ultimately, a failed initiative that erodes internal confidence in enterprise AI adoption for the next 18 months. Deloitte’s State of AI in the Enterprise report – based on a survey of 3,235 business and IT leaders across 24 countries conducted in 2025 – identified the AI skills gap as the single biggest barrier to integration across organizations globally, including those in the US market.

Talent gaps are fixable. But governance gaps? Those carry legal exposure. That’s dimension four.

4. Data Governance and Compliance Posture

Compliance isn’t a legal team problem. In 2026, it’s an architecture problem.

The US AI regulatory landscape has shifted significantly. More than 1,200 AI-related bills were introduced across all 50 states in 2025, with 145 enacted into law. California and Texas laws took effect January 1, 2026. Colorado’s comprehensive AI Act takes effect June 30, 2026. Illinois has regulated AI in employment decisions since 2024.

The United States now has a fast-moving, state-by-state compliance environment that makes AI governance a direct operational requirement – not a future consideration. Healthcare and FinTech industries face additional scrutiny for algorithmic decision-making, and any AI system affecting users in credit scoring, medical triage, HR screening, or pricing is subject to audit requirements.

Your readiness assessment must evaluate:

- Do you have a data governance policy that covers AI-specific use cases?

- Are you documenting model inputs, outputs, and training data sources?

- How are you handling consent for data used in model training?

- Is there an established model risk management (MRM) framework?

- Have you mapped which AI outputs trigger regulatory disclosure requirements?

“The enterprises succeeding with AI in 2026 aren’t just moving fast – they’re building with controls. Governance isn’t a constraint on AI velocity. It’s what makes velocity sustainable.”

Actionable tip: Before your next AI build, create a one-page model card for your intended use case – documenting training data sources, intended outputs, known limitations, and the human review trigger points. If you can’t fill it out, your governance posture isn’t ready for production.
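One way to make the "can you fill it out" test concrete is to treat the model card as a small record and list whichever sections are still empty. This is a sketch only: the field names mirror the four items in the tip plus the use case, and the example values are hypothetical.

```python
from dataclasses import dataclass, field, fields
from typing import List


@dataclass
class ModelCard:
    use_case: str = ""
    training_data_sources: List[str] = field(default_factory=list)
    intended_outputs: List[str] = field(default_factory=list)
    known_limitations: List[str] = field(default_factory=list)
    human_review_triggers: List[str] = field(default_factory=list)

    def missing_sections(self) -> List[str]:
        """Names of sections that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready_for_production(self) -> bool:
        """A card with any empty section is treated as not ready."""
        return not self.missing_sections()


# Hypothetical, partially completed card.
card = ModelCard(
    use_case="Churn prediction for mid-market accounts",
    training_data_sources=["CRM accounts table", "product usage events"],
    intended_outputs=["churn risk score between 0 and 1"],
)

print(card.missing_sections())
print(card.ready_for_production())
```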

Responsible AI deployment isn’t a principles document you publish on your website. It’s a set of operational controls embedded in the way your models are built, monitored, and audited. For PE-backed companies and those approaching Series C and beyond, this is no longer optional. Investors are asking about AI governance in due diligence. It’s part of the risk profile now.

Governance protects you from risk. But risk without a defined use case is still risk without a return. That’s where dimension five comes in.

5. Business Process and Use Case Clarity

“We want to use AI” is not a use case. And it’s not a strategy either.

One of the most common findings in readiness assessments is that organizations have AI ambition without use case specificity. Vague use cases don’t just waste budget – they make it impossible to define success, which means the initiative can never be declared a win or a loss. It simply drains resources indefinitely.

Before committing budget and engineering cycles, leadership needs to be aligned on:

- Which specific business process are you targeting with AI?

- What is the measurable outcome (cost reduction, conversion lift, customer retention, risk reduction)?

- What does the baseline metric look like today?

- Who owns the outcome after deployment?

- Is this a one-time implementation or an ongoing ML system requiring maintenance?

The best AI implementations in 2026 are narrowly scoped, deeply integrated into existing workflows, and owned by a business stakeholder who can articulate ROI. The worst ones are broad, disconnected from existing systems, and owned by “the AI team” with no clear accountability.

Actionable tip: Before scoping any AI initiative, define a single-sentence success metric in this format: “We will know this AI implementation succeeded when [metric] improves from [baseline] to [target] within [timeframe].” If your team can’t write that sentence, the use case isn’t ready.
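The success-metric sentence can be captured as a small template that refuses to render until every slot is filled. This is a sketch under assumptions: the class name, the optional unit field, and the churn figures in the example are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessMetric:
    metric: str
    baseline: float
    target: float
    timeframe: str
    unit: str = ""

    def is_defined(self) -> bool:
        """All slots filled, and the target actually differs from the baseline."""
        return (bool(self.metric.strip())
                and bool(self.timeframe.strip())
                and self.baseline != self.target)

    def sentence(self) -> str:
        if not self.is_defined():
            raise ValueError("use case is not ready: success metric is incomplete")
        return (
            f"We will know this AI implementation succeeded when {self.metric} "
            f"improves from {self.baseline:g}{self.unit} to "
            f"{self.target:g}{self.unit} within {self.timeframe}."
        )


# Hypothetical example values.
churn = SuccessMetric("monthly churn rate", 4.2, 3.5, "two quarters", unit="%")
print(churn.sentence())
```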

When evaluating use cases, prioritize high-frequency decisions with large data volumes. Recommendation engines, fraud detection, dynamic pricing, predictive maintenance, and intelligent document processing are high-ROI candidates. “AI-generated internal newsletters” is not.

A sharp use case tells you what to build. But it doesn’t tell you what it plugs into. That’s the integration question.

6. Integration Architecture and System Complexity

AI doesn’t operate in a vacuum. It has to plug into the systems you already run – and that’s where timelines quietly double.

Complex enterprise architectures – ERP systems like SAP or Oracle, legacy CRM platforms, custom middleware, multi-cloud environments – create integration surface area that directly affects AI implementation timelines and costs. The insight most organizations miss: integration complexity is almost always underestimated, and it almost always determines whether a project ships on time.

Your assessment should map:

- What does your current integration layer look like? (REST APIs, event streams, message queues, point-to-point?)

- Are your core systems API-accessible, or do they require batch exports and imports?

- Do you have a data warehouse or data lakehouse that AI systems can query in near real-time?

- How are system-of-record changes propagated across your stack?

- Have you dealt with vendor lock-in risk in your current architecture?

ERP integration complexity alone has caused 12-month delays in AI deployments for mid-market manufacturers. That’s 12 months of competitive disadvantage. Knowing this before you start isn’t caution – it’s strategic intelligence.

Actionable tip: Document the API accessibility of your top five data-producing systems. For any system that requires batch export rather than real-time API access, flag it as a critical path dependency in your AI project plan – and budget at least 6 to 10 weeks to resolve it before model development begins.
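A minimal sketch of that inventory, assuming each system is recorded with one of two access modes. The system names and the mode labels are hypothetical; the 6 to 10 week range is the one from the tip above.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Remediation window suggested in the tip above, in weeks.
REMEDIATION_WEEKS: Tuple[int, int] = (6, 10)


@dataclass(frozen=True)
class SourceSystem:
    name: str
    access: str  # "realtime_api" or "batch_export" (labels are an assumption)


def critical_path_dependencies(systems: List[SourceSystem]) -> List[str]:
    """Names of systems that can only be reached through batch export."""
    return [s.name for s in systems if s.access == "batch_export"]


# Hypothetical top five data-producing systems.
systems = [
    SourceSystem("CRM", "realtime_api"),
    SourceSystem("ERP", "batch_export"),
    SourceSystem("Billing", "realtime_api"),
    SourceSystem("Warehouse management", "batch_export"),
    SourceSystem("Support desk", "realtime_api"),
]

low, high = REMEDIATION_WEEKS
for name in critical_path_dependencies(systems):
    print(f"{name}: critical path dependency, budget {low} to {high} weeks")
```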

You can solve every technical dimension and still watch an AI initiative fail. Here’s why.

7. Organizational Readiness and Change Management

This one is frequently dismissed. It shouldn’t be.

AI systems change how people work. They shift decision authority. They surface insights that contradict institutional intuition. And they require ongoing human oversight to function responsibly.

Assess:

- Does leadership have a clear, communicated AI strategy?

- Are functional team leaders involved in defining AI use cases, or is this a top-down mandate?

- Is there a change management plan for roles that AI will augment?

- Do you have a feedback loop for humans to flag incorrect AI outputs?

- Is there an executive sponsor with budget authority and accountability?

Actionable tip: Run a simple stakeholder alignment check before your AI project kickoff. Ask three questions to five key stakeholders: What problem are we solving? How will we measure success? What changes about your day-to-day? If you get five different answers, your organizational readiness gap is wider than your technical gap.

Companies that treat AI as a technology project instead of a digital transformation initiative consistently underperform. McKinsey’s 2025 data confirms this directly: American organizations that treat AI as a business transformation rather than an IT project achieve a 61% success rate – versus just 18% for those that don’t.

Deloitte’s 2026 report reinforces the same finding: only 34% of companies surveyed say they are using AI to deeply transform the business, while the majority are still applying it as a point tool. The model is rarely the bottleneck. The humans are.

Seven dimensions assessed. Now comes the structural decision most organizations skip entirely – and the one that determines whether your AI program scales or stalls.

Building Your AI Governance Structure Before You Scale

One area that organizations routinely underinvest in at the readiness stage is governance infrastructure – not just policy documents, but an operational AI governance framework that can manage risk in real time.

Infosys recommends establishing a centralized AI team to safeguard technical, legal, ethical, and reputational risks – a function some enterprises are formalizing as an AI Value Office. This isn’t a committee that meets quarterly. It’s an operational unit responsible for use case prioritization, model risk oversight, regulatory monitoring, and responsible AI deployment practices across the organization.

For US enterprises operating at scale, the AI Value Office typically sits at the intersection of engineering, legal, and operations. Its mandate covers: greenlighting AI initiatives based on risk-adjusted ROI, maintaining a model registry with audit trails, monitoring regulatory developments at the state and federal level, and establishing feedback mechanisms that surface failure modes before they reach users.

This structure doesn’t need to be large. In many mid-market organizations, it starts as a three-person working group with defined charters and escalation paths. The point is that it exists before the first model goes to production – not after a governance incident forces the conversation.

With governance in place, the ROI conversation becomes much more concrete. Here’s how to run it.

How to Calculate the ROI of Your AI Readiness Investment

A frequent question from CFOs and operations leaders: “How do we justify the cost of an assessment before we’ve built anything?”

The answer starts with a straightforward formula. ROI = (Net Benefits / Total Costs) x 100.

The net benefits of a proper readiness assessment include: avoided rework costs from building on a flawed foundation (typically $200K to $800K), reduced time-to-production through sequenced infrastructure investment, lower compliance remediation costs, and faster stakeholder alignment on use case prioritization.
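To make the formula concrete, here is a worked sketch in which net benefits are taken as gross benefits minus total costs. The $60,000 assessment cost is a hypothetical figure chosen for illustration; the $200,000 avoided-rework figure is the low end of the range quoted above, and the other benefit categories are left out to keep the arithmetic conservative.

```python
def net_benefits(gross_benefits: float, total_costs: float) -> float:
    """Net benefits = gross benefits minus what was spent to get them."""
    return gross_benefits - total_costs


def roi_percent(net: float, total_costs: float) -> float:
    """ROI = (Net Benefits / Total Costs) x 100."""
    if total_costs <= 0:
        raise ValueError("total_costs must be positive")
    return net / total_costs * 100


assessment_cost = 60_000   # hypothetical assessment cost
avoided_rework = 200_000   # low end of the $200K to $800K range above

net = net_benefits(avoided_rework, assessment_cost)
roi = roi_percent(net, assessment_cost)
print(f"net benefits=${net:,.0f}, ROI={roi:.0f}%")
```

Swapping in your own cost estimate and whichever benefit categories you can defend to a CFO gives a figure you can revisit once the post-implementation numbers come in.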

The data makes the case clearly. According to analysis of 2,400+ enterprise AI initiatives tracked through 2025, projects that conducted a formal data readiness assessment before building achieved a 47% success rate – compared to just 14% for those that skipped it. That delta alone represents tens of millions in preserved budget for a mid-market organization running multiple AI workstreams.

Executives should also be tracking financial impact (revenue growth and cost reduction), customer experience metrics like satisfaction and retention, innovation metrics including time to market and market share, user adoption rates, and model performance – all of which require a baseline that only a structured assessment can establish.

You cannot measure AI ROI without a pre-AI benchmark. The readiness assessment creates that benchmark.

If you want to understand how this maps to a real implementation timeline, the phased approach below shows exactly what sequenced execution looks like.

From Assessment to Execution: The Phased Implementation Approach

Completing an assessment doesn’t mean you build everything at once. The most resilient AI implementations follow a phased approach that generates early wins while building toward systemic capability.

Phase 1: Foundation. Address the critical blockers identified in the assessment – data pipeline gaps, infrastructure provisioning, governance framework setup. No model training yet.

Phase 2: Pilot. Select one high-confidence, high-ROI use case. Build a scoped POC with clean data, a defined success metric, and a human review loop. Measure against baseline.

Phase 3: Harden and Expand. Take the pilot to production. Implement monitoring, retraining triggers, and model documentation. Begin evaluating the second use case based on learnings from the first.

Phase 4: Scale and Optimize. AI is now embedded in a production workflow with measurable business impact. The governance structure is operational. New use cases are evaluated through the AI Value Office framework.

This sequence prevents the most common failure pattern: enterprises that build ambitious AI systems on unresolved infrastructure gaps, then spend the following year firefighting instead of scaling. Deloitte’s 2025 research found that the median time to AI project abandonment was 11 months – meaning most US organizations persist far too long before acknowledging that a flawed foundation can’t be patched mid-flight.

The Real Cost of Deploying AI Without a Readiness Assessment

Now let’s put a number on what skipping the assessment actually costs.

| Skipped Assessment Area | Downstream Cost |
| --- | --- |
| Data quality not evaluated | 6 to 18 months of data remediation; $200K to $800K in rework |
| Infrastructure gaps missed | Production failures, SLA breaches; $50K to $300K in emergency re-architecture |
| Compliance posture ignored | Regulatory exposure; potential fines + $500K+ in retroactive governance buildout |
| Talent gaps unaddressed | 30 to 50% higher turnover; delayed delivery by 9 to 12 months |
| Use case ambiguity | Misaligned builds; $150K to $1M+ in sunk cost on low-ROI implementations |
| Integration complexity underestimated | 2 to 4x timeline overrun; budget exhaustion before go-live |
| Governance structure absent | Post-deployment incidents; legal exposure + reputational damage |

These aren’t theoretical scenarios. In 2025, large enterprises abandoned an average of 2.3 AI initiatives each, with average sunk costs of $4.2M per abandoned project – figures drawn from Deloitte’s 2025 AI adoption research and synthesis of 2,400+ tracked initiatives. For American companies running multiple parallel AI workstreams, the compounded exposure is significant.

The readiness assessment itself, done right by senior practitioners, typically costs a fraction of one of those line items.

AI-Ready vs AI-Aspiring: Where Does Your Organization Stand?

You’ve worked through all seven dimensions. Now it’s time to place yourself honestly.

| Dimension | AI-Aspiring | AI-Ready |
| --- | --- | --- |
| Data Infrastructure | Siloed, inconsistent schemas | Unified, versioned, auditable |
| Compute Environment | On-prem or early cloud | Cloud-native, GPU-accessible on demand |
| ML Talent | Software engineers curious about AI | Dedicated ML engineers + MLOps capability |
| Governance | No formal policy | Documented MRM framework, compliance mapping |
| Use Case Clarity | “We want to use AI somewhere” | Specific, scoped, outcome-driven |
| Integration Layer | Point-to-point, batch-heavy | API-first, event-driven, real-time capable |
| Change Management | Not discussed | Executive-sponsored, cross-functional |
| Governance Structure | No centralized AI oversight | AI Value Office with defined mandate |

Most organizations arriving at this decision land somewhere in the middle. The assessment tells you exactly where you sit between the two columns on each dimension and what the prioritized path forward looks like.

If more of your rows fall in the AI-Aspiring column than in the AI-Ready column, you’re not behind – you’re exactly where this assessment is designed to help. The next step is working with a team that has navigated this transition across dozens of enterprise environments.

How Bitcot Supports AI Readiness Assessment and Implementation

Most vendors will sell you an AI roadmap. We build you an honest one.

We’ve seen what happens when organizations rush to build AI on top of brittle infrastructure. We’ve also seen what becomes possible when the foundation is right.

“Every week we talk to technical leaders who are excited about AI but haven’t yet asked the one question that determines whether their investment will compound or collapse: is our foundation ready? That question – answered honestly – is where the real work begins.”

– Raj Sanghvi, Founder and CEO, Bitcot

At Bitcot, our AI readiness engagements start with a structured discovery workshop that maps your current state across all seven dimensions covered above. We don’t arrive with assumptions. We arrive with questions, senior practitioners, and a diagnostic framework that surfaces the real blockers before they become production disasters.

Our architecture validation process gives your technical leadership an honest assessment of infrastructure gaps, integration complexity, and the most viable path to production-ready AI systems. We identify quick wins alongside long-horizon investment areas, so you can sequence implementation in a way that generates ROI without burning runway.

Our teams are senior-only. Every engagement is led by engineers who have built and shipped AI systems across industries – from HIPAA-compliant US healthcare platforms and fintech automation to SaaS infrastructure and marketplace intelligence. You can see examples of that work in our case studies.

We also bring governance frameworks that travel well. Whether you’re scaling a FinTech platform under SEC oversight, a healthcare application under HIPAA, or a SaaS product navigating the emerging US AI regulatory landscape, compliance is embedded into the architecture from day one – not retrofitted after an audit flags the exposure.

For organizations with internal engineering teams, we work alongside your people. We don’t displace them. We accelerate them.

If any of the seven dimensions above flagged a gap in your current setup, that’s exactly where our assessment begins. You don’t need to have everything figured out – you just need to start with an honest picture.

The Companies That Win With AI Are the Ones That Prepare

The AI leaders in your market aren’t moving faster. They’re moving smarter.

They invested in the foundation before the feature. They did the unglamorous work of data architecture, governance, and infrastructure readiness before a single model was trained. And now they’re deploying AI capabilities that compound – each system feeding the next, each dataset growing more valuable with use.

The companies that are stalling, pivoting, or quietly decommissioning AI initiatives are the ones that skipped that work. They chased the announcement, not the architecture. They funded the model, not the infrastructure to sustain it.

You have two options. Launch without a readiness baseline and spend the next 18 months discovering your gaps through failure. Or invest 3 to 6 weeks in an honest assessment that tells you exactly what you’re working with, what you need to build, and what sequence of investments will generate the fastest and most defensible ROI.

The second path is not slower. It’s the only one that actually arrives.

If you’re a CTO, VP of Engineering, or technical founder preparing to make a serious AI investment, let’s start with the foundation.

Request an AI Readiness Assessment with Bitcot’s senior architecture team – and get a clear, honest picture of where you stand before you commit the budget.

Frequently Asked Questions (FAQs)

How long does a proper AI readiness assessment take?

For a mid-market organization with moderate architectural complexity, a thorough assessment typically requires 3 to 6 weeks. This includes discovery workshops, technical architecture review, data audits, talent mapping, and a documented readiness report with prioritized recommendations. Compressing this timeline is possible but increases the risk of missing critical gaps.

What is the TCO of building AI capabilities versus buying a pre-built AI platform?

Build vs buy is one of the most consequential decisions in your AI implementation strategy. Over a 3 to 5 year horizon, custom AI systems built on your proprietary data typically deliver higher ROI for core competitive workflows – but require a 12 to 18 month runway before meaningful returns. Pre-built platforms offer faster time-to-value but often introduce vendor lock-in, data portability constraints, and limitations on model customization. The readiness assessment should clarify which path suits each use case.

Can we run an AI readiness assessment with our internal team?

You can run a partial assessment internally. Most organizations can evaluate their data infrastructure and talent gaps without external help. However, architecture validation, governance gap analysis, and integration complexity mapping benefit significantly from external practitioners who have seen the patterns across dozens of deployments. Blind spots are, by definition, what you don’t see.

How do we prevent vendor lock-in in our AI architecture?

Design for portability from the start. This means using cloud-agnostic ML orchestration tools where possible (MLflow, Kubeflow), maintaining model artifacts in your own storage layer, and ensuring your training data pipelines are not exclusively tied to a single cloud provider’s proprietary services. The assessment should flag every point of lock-in exposure so you can make deliberate tradeoffs rather than accidental ones.

What AI use cases have the highest ROI for US SaaS and FinTech companies in 2026?

Based on production deployments across these verticals, the highest-ROI use cases are: intelligent churn prediction integrated into product workflows, AI-assisted fraud detection with human review loops, dynamic pricing engines with real-time signal ingestion, and LLM-powered customer support with supervised escalation paths. The unifying characteristic is narrow scope, high decision frequency, and measurable baseline metrics.

What does “AI without clean data” actually cost us?

More than the AI build itself. A model trained on dirty data can require 3 to 5x the retraining cycles to reach acceptable accuracy. Worse, it ships with hidden failure modes that surface under edge-case conditions in production. The cost includes engineering time lost to debugging, customer-facing errors, and the compounding reputational impact of an AI system that users learn not to trust. Data remediation before training is almost always cheaper than remediation after deployment.

How do we know when we’re truly ready to move from assessment to implementation?

You’re ready to move to implementation when you have: a clean, accessible dataset for your target use case, an infrastructure layer capable of handling inference at required latency, an AI governance framework that covers model risk and compliance exposure, a defined success metric with a baseline, and an executive sponsor with clear accountability. These aren’t aspirational checkboxes. They’re binary gates.
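Because these are binary gates, they can be expressed as a checklist in which a single failing gate blocks the move to implementation. A minimal sketch follows; the field names paraphrase the five conditions above and the example values are hypothetical.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class ReadinessGates:
    clean_accessible_dataset: bool
    inference_infrastructure: bool
    governance_framework: bool
    success_metric_with_baseline: bool
    accountable_executive_sponsor: bool

    def failing(self) -> List[str]:
        """Gates that are not yet passed."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready(self) -> bool:
        """Ready only when every gate passes; there is no partial credit."""
        return not self.failing()


# Hypothetical assessment result.
gates = ReadinessGates(
    clean_accessible_dataset=True,
    inference_infrastructure=True,
    governance_framework=False,
    success_metric_with_baseline=True,
    accountable_executive_sponsor=True,
)

print(gates.ready())
print(gates.failing())
```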