A user opens your health app at 2 a.m. with chest tightness. They type a question. The chatbot gives a generic response, then drops them at a dead end. No follow-up. No doctor. No way to share the lab report sitting on their nightstand.

They close the app and go back to Google.

That’s the reality of most healthcare chatbots on the market right now. They answer questions, but they don’t solve the problem. And when a health app fails a user mid-anxiety, that user doesn’t come back.

The market doesn’t have a technology problem. It has a trust problem. According to an MGMA poll from April 2025, only 19% of medical practices in the United States use any form of chatbot for patient communication.

Meanwhile, the global healthcare chatbots market stood at $1.98 billion in 2025, growing to $2.41 billion in 2026 and projected to reach $12.63 billion by 2034 (Fortune Business Insights). The broader conversational AI in healthcare market was valued at $13.68 billion in 2024 (Grand View Research).

The money is flowing. The adoption isn’t. That gap is the opportunity.

We built ChatbotAI to close it. It’s a fully native Android AI health assistant where users can describe symptoms in text, speak them aloud, upload a photo of a prescription or skin condition, get a structured medical analysis, and book a doctor, all without ever leaving a single chat thread.

No screen-hopping. No portal redirects. No blank screens when the network drops.

This case study breaks down exactly how we built it, the architecture decisions behind it, and the one pattern most teams miss when building medical AI products. We’ll get to that.

Why Most Healthcare Chatbots Fail to Retain Users

Before we get into the build itself, it’s worth understanding why so many healthcare chatbot products underperform despite the massive market tailwind.

The problem isn’t the AI model. It’s the experience around it.

Most health chatbots treat the conversation as a standalone feature, disconnected from any meaningful next step. A user describes a symptom, gets a generic response, and then is left wondering what to do next. There’s no path to a doctor. No way to share a lab report. No fallback when the connection drops mid-conversation.

The adoption numbers reflect this. With only 19% of US medical practices using a chatbot for patient communication (MGMA, April 2025), more than 80% still rely on phone calls, portals, and in-person visits for routine patient communication. That’s not because the technology isn’t ready. It’s because the products built so far haven’t earned trust.

What users actually need isn’t a chatbot. They need a health companion that can see, listen, respond intelligently, and connect them to real care, all without ever breaking the conversational flow.

That’s exactly what we set out to prove with this build.

What an AI-Powered Symptom Checker App Needs to Get Right

So what does an AI-powered virtual health assistant need to actually do well? The answer isn’t more features. It’s fewer seams.

ChatbotAI is a production-grade Android health assistant built in Kotlin with Jetpack Compose. It orchestrates the full health consultation journey: symptom check-in, AI-powered medical analysis, prescription and lab report image interpretation, voice input, and doctor discovery with appointment booking.

All of it happens inside a single chat interface, available 24/7.

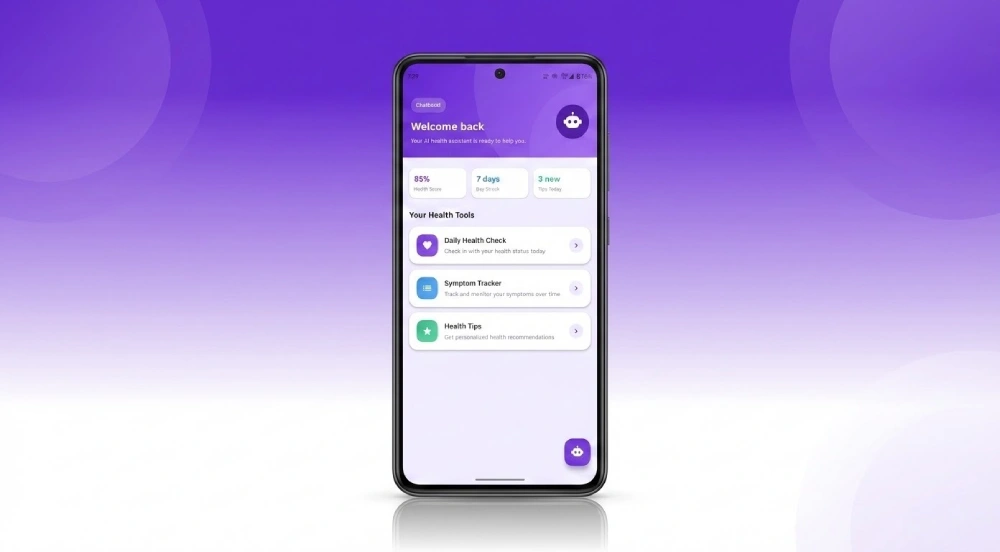

The experience starts before the first message. The home screen displays a personal health dashboard showing the user’s Health Score, Day Streak, and new health tips, alongside quick-access tools for Daily Health Check, Symptom Tracker, and Health Tips. A floating bot icon launches the AI assistant.

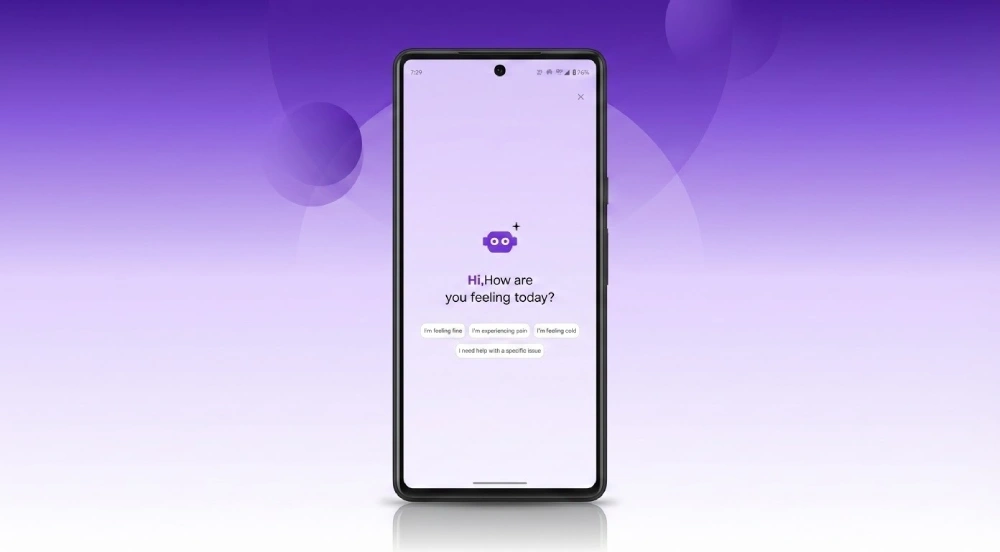

When a user opens the chatbot, they see a greeting phase with four pre-defined sentiment chips: “I’m feeling fine,” “I’m experiencing pain,” “I’m feeling cold,” and “I need help with a specific issue.” Tapping a chip immediately sends it as a message and transitions the UI into the full conversation phase. No blank screens. No onboarding friction.

Why does this matter? Because fragmentation kills engagement. When a user has to leave the chat to upload an image, switch screens to find a doctor, or re-enter symptoms after a network timeout, the experience fails.

We designed ChatbotAI to eliminate those fractures. Every interaction, whether text, voice, or image, feeds into one continuous conversation. And every AI response leads somewhere, whether that’s a follow-up question, a suggested next step, or an inline doctor recommendation.

For American healthcare companies evaluating AI integration, this is what “AI-native” means in practice. It’s not a chatbot bolted onto a portal. It’s an AI-first experience where the conversation is the product.

Why AI Health Assistants Matter Right Now for US Healthcare

The timing of this build isn’t accidental. Across America, digital health adoption is hitting an inflection point, and the gap between what patients expect and what most apps deliver is growing fast.

According to Grand View Research, the global conversational AI in healthcare market is projected to reach $106.67 billion by 2033, growing at a 25.71% CAGR. In the US alone, projected growth for conversational AI in healthcare is approximately 21.9% CAGR between 2025 and 2035.

That growth isn’t just about hospitals buying enterprise platforms. It’s about the millions of individual users who want instant, reliable health guidance on their phones without sitting in a waiting room or scrolling through conflicting search results.

The companies that build this right will own a market that’s expanding faster than almost any other category in health tech. The ones that ship fragile, disconnected chatbots will lose users on the first session.

That distinction comes down to architecture. And it’s the reason we approached this build the way we did.

How We Solved Four Critical Challenges in Medical AI App Development

The previous sections paint the picture of what’s needed. Now let’s look at how we delivered it. Every proof of concept exists to prove that hard problems can be solved. Here are the four challenges that shaped this build.

Building a Medically Reliable Conversational AI

The first challenge was accuracy. A health assistant can’t hallucinate, drift off-topic, or provide vague responses when a user is describing chest pain or a skin condition.

We addressed this by integrating the Groq API with the llama-3.1-8b-instant model, set to a temperature of 0.4. A lower temperature produces more deterministic, grounded responses, which is critical in a medical context where creativity isn’t the goal. Reliability is.

Every user message is appended to a rolling conversation history and submitted alongside a system prompt and local context injection. The AI doesn’t start fresh with each message. It remembers what the user said three messages ago.
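As a rough illustration of that rolling history, here is a minimal Kotlin sketch. It is not the app’s actual code: the class names, the `maxTurns` cap, and the way local context is added as a second system message are all assumptions made for the example. Only the model name and the temperature come from the build described above.

```kotlin
// Illustrative sketch only: names and structure are assumptions, not the app's code.

/** One chat turn in the role/content shape used by OpenAI-compatible chat endpoints. */
data class ChatMessage(val role: String, val content: String)

/** The request settings named in the article, plus the assembled message list. */
data class ChatRequest(
    val messages: List<ChatMessage>,
    val model: String = "llama-3.1-8b-instant",
    val temperature: Double = 0.4,
)

/** Keeps a rolling window of turns and rebuilds the full prompt for every request. */
class ConversationHistory(
    private val systemPrompt: String,
    private val maxTurns: Int = 20, // hypothetical cap; the article does not state one
) {
    private val turns = ArrayDeque<ChatMessage>()

    fun addUser(text: String) = append(ChatMessage("user", text))

    fun addAssistant(text: String) = append(ChatMessage("assistant", text))

    private fun append(message: ChatMessage) {
        turns.addLast(message)
        while (turns.size > maxTurns) turns.removeFirst() // drop the oldest turn first
    }

    /** System prompt first, then optional local context, then the rolling history. */
    fun buildRequest(localContext: String? = null): ChatRequest {
        val messages = buildList {
            add(ChatMessage("system", systemPrompt))
            if (!localContext.isNullOrBlank()) {
                add(ChatMessage("system", "Local medical context: $localContext"))
            }
            addAll(turns)
        }
        return ChatRequest(messages)
    }
}
```

Because the request is rebuilt from the stored turns each time, the model sees earlier messages on every call. Capping the window keeps the prompt size bounded in long sessions.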

Handling Multimodal Inputs Without Breaking the Flow

Users needed to describe symptoms in text, speak them aloud, or upload images of prescriptions, lab reports, and visible skin conditions like burns, rashes, and lesions. That required three separate input pipelines converging into one conversation thread.

For voice, we used Android’s native SpeechRecognizer API with partial results enabled, streaming live transcriptions directly into the text field.

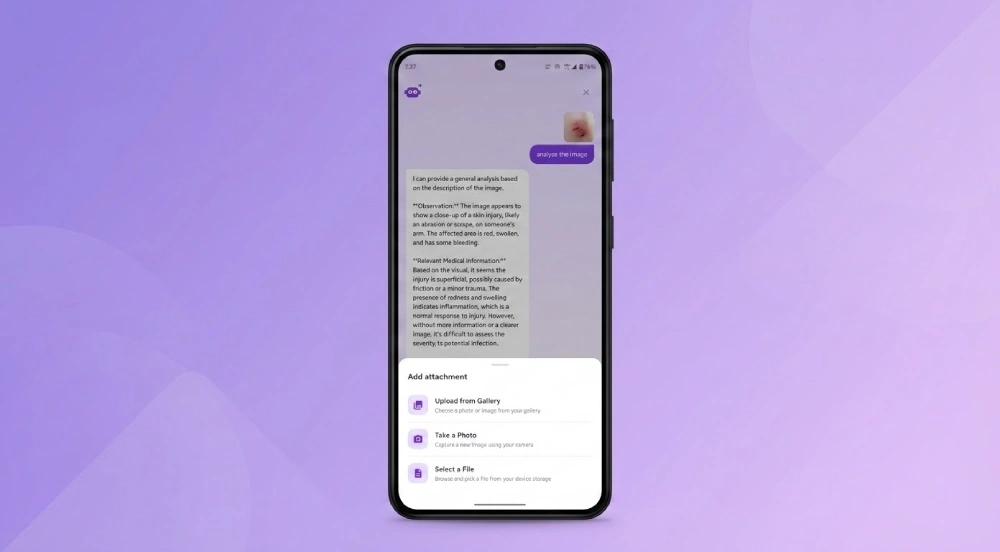

For images, we built a multi-attachment system supporting gallery, camera, and file picker, accepting up to five images per message. Users tap the + button to open an attachment bottom sheet with three clearly labeled options: “Upload from Gallery,” “Take a Photo,” and “Select a File.”

Each image is base64-encoded and submitted to the meta-llama/llama-4-scout-17b-16e-instruct vision model for structured analysis.

Here’s the architectural decision that most multimodal implementations get wrong. We isolated the vision analysis code path (sendMessageWithImage) from the standard chat history. This prevents image context from polluting the text-based conversation.

Skip this step and your chat thread turns into a jumbled mess of text and vision responses within a few exchanges.
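A minimal sketch of that isolation, under stated assumptions, might look like the following. Only the `sendMessageWithImage` name and the five-image limit come from the build. `ModelClient`, `ChatSession`, `VisionRequest`, and `toDataUrl` are hypothetical names, and the client is an interface so the example runs without a network. It uses `java.util.Base64`, which on Android needs API 26 or higher. An app with a lower minimum SDK would likely use `android.util.Base64` instead.

```kotlin
import java.util.Base64

// Illustrative sketch only: every type here is an assumption, not the app's code.

data class ChatTurn(val role: String, val content: String)

/** A one-off vision request: a prompt plus images encoded as base64 data URLs. */
data class VisionRequest(val prompt: String, val imageDataUrls: List<String>)

/** Hypothetical stand-in for the API client so the sketch runs offline. */
interface ModelClient {
    fun chat(history: List<ChatTurn>): String
    fun analyzeImages(request: VisionRequest): String
}

val maxImagesPerMessage = 5

fun toDataUrl(bytes: ByteArray, mimeType: String = "image/jpeg"): String =
    "data:$mimeType;base64," + Base64.getEncoder().encodeToString(bytes)

class ChatSession(private val client: ModelClient) {
    private val textHistory = mutableListOf<ChatTurn>()

    /** Read-only view of the text-only history sent to the chat model. */
    val history: List<ChatTurn> get() = textHistory

    /** Text path: both the user turn and the reply are stored in the history. */
    fun sendMessage(text: String): String {
        textHistory += ChatTurn("user", text)
        val reply = client.chat(textHistory.toList())
        textHistory += ChatTurn("assistant", reply)
        return reply
    }

    /** Vision path: builds its own request and never reads or writes textHistory. */
    fun sendMessageWithImage(prompt: String, images: List<ByteArray>): String {
        require(images.size in 1..maxImagesPerMessage) {
            "Attach between 1 and $maxImagesPerMessage images"
        }
        return client.analyzeImages(VisionRequest(prompt, images.map { toDataUrl(it) }))
    }
}
```

The UI can still show the vision reply in the thread. The point of the sketch is only that it is never appended to the history that later text requests carry.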

Guaranteeing Offline Resilience in a Health App

A health app can’t show a blank screen when a user is anxious about a symptom. That’s a trust-breaking moment.

We solved offline resilience by bundling a local medical knowledge base (medical_data.json) covering 15+ medical categories directly into the app’s assets. Before any API call, the app checks the user’s message against 100+ medical keywords.

If the query matches a common condition like fever, headache, or fatigue, the response is served locally in milliseconds.

This keyword pre-filter reduces unnecessary API calls by an estimated 40-60% for everyday symptom queries, cutting both latency and cost. Only novel or complex queries route to the Groq API.
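To make the pre-filter idea concrete, here is a small Kotlin sketch. The structure of medical_data.json is not shown in this case study, so the `LocalEntry` shape, the `KeywordPreFilter` class, and the whole-word matching rule are assumptions for illustration only.

```kotlin
// Illustrative sketch only: the entry shape and matching rule are assumptions.

/** One entry of a bundled knowledge base: a category, its trigger keywords, and a canned answer. */
data class LocalEntry(val category: String, val keywords: Set<String>, val response: String)

class KeywordPreFilter(private val entries: List<LocalEntry>) {

    /**
     * Returns the first entry whose keyword appears in the query as a whole word
     * or phrase, or null when nothing matches and the query should go to the API.
     */
    fun match(query: String): LocalEntry? {
        // Lowercase, collapse punctuation to spaces, and pad so " fever " matches whole words only.
        val normalized = " " + query.lowercase().replace(Regex("[^a-z0-9]+"), " ").trim() + " "
        return entries.firstOrNull { entry ->
            entry.keywords.any { keyword -> normalized.contains(" ${keyword.lowercase()} ") }
        }
    }
}
```

A null result is the signal to route the query onward. Whole-word matching is a deliberate choice in this sketch: it avoids false hits such as “feverish” matching “fever”, at the cost of missing some variants that a stemmer would catch.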

Connecting AI Symptom Analysis to Doctor Appointment Booking

The last mile problem in health chatbots is the handoff. A user gets an AI assessment, but then what?

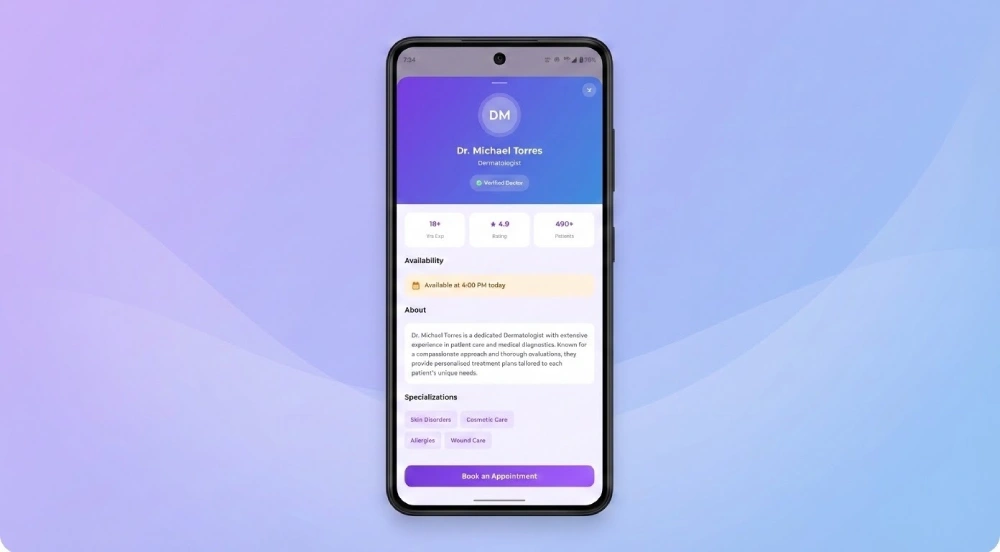

We integrated inline Doctor Info Cards directly into the chat thread. When the AI determines a physical consultation is warranted, for example a suspected second-degree burn, the ViewModel injects a DoctorInfo message.

The user sees a card with the physician’s name, specialty, verification badge, experience, rating, patient count, and a “Book an Appointment” button. All without leaving the conversation. Tapping the card opens a full DoctorDetailSheet with the physician’s complete profile, specializations, availability, and booking action.

This transforms the chat from a dead-end Q&A into an actionable care pathway.
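One way to model a thread that mixes text bubbles and doctor cards is a sealed type, sketched below. This is an assumption about the shape, not the app’s code. Only the `DoctorInfo` name and the card fields come from the description above. How the app decides that a consultation is warranted is not covered here, so the sketch simply takes an optional doctor as input.

```kotlin
// Illustrative sketch only: the types and functions are assumptions, not the app's code.

/** Everything the chat thread can display: plain messages or an inline doctor card. */
sealed interface ChatItem {
    data class Text(val fromUser: Boolean, val body: String) : ChatItem

    data class DoctorInfo(
        val name: String,
        val specialty: String,
        val verified: Boolean,
        val yearsExperience: Int,
        val rating: Double,
        val patientCount: Int,
    ) : ChatItem
}

/** Appends the AI reply and, when a doctor is recommended, the card right after it. */
fun appendAiReply(
    thread: List<ChatItem>,
    reply: String,
    recommendedDoctor: ChatItem.DoctorInfo?, // null when no in-person visit is suggested
): List<ChatItem> = buildList {
    addAll(thread)
    add(ChatItem.Text(fromUser = false, body = reply))
    if (recommendedDoctor != null) add(recommendedDoctor)
}

/** Text stand-in for the UI: each item type gets its own rendering branch. */
fun render(item: ChatItem): String = when (item) {
    is ChatItem.Text -> if (item.fromUser) "You: ${item.body}" else "AI: ${item.body}"
    is ChatItem.DoctorInfo -> "[Card] ${item.name}, ${item.specialty} | Book an Appointment"
}
```

Because the card is just another item in the same list, the UI needs no separate screen or navigation step to show it. The exhaustive `when` also forces every new item type to get a rendering branch.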

Clean Architecture for a Scalable Healthcare AI Android App

Solving the right problems is only half the work. If the architecture can’t support the product at scale, none of it matters. That’s where most health app prototypes fall apart. They work in a demo but crumble under real conditions.

Here’s how we built ChatbotAI to scale.

MVVM and Clean Architecture for Long-Term Maintainability

The app follows a strict MVVM + Clean Architecture pattern with three distinct layers.

The data layer includes the Groq API client (handling both text chat and vision analysis) and a StaticProjectDataSource backed by the local JSON knowledge base.

The presentation layer uses Jetpack Compose screens for the splash, home, and chatbot experiences.

The ViewModel (ChatbotViewModel, 642 lines) serves as the authoritative state machine for the entire chat experience. All UI state, including conversation phase, message list, attachment URIs, suggestion chips, typing indicator, and voice listening state, is exposed as StateFlow properties.

Why does this matter for scaling? Because the Compose UI is purely reactive and completely free of business logic. An engineer can swap the AI provider, add a new input modality, or redesign the chat UI without touching the other layers.
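The state-machine idea can be sketched without any Android or coroutine dependencies. The example below is a simplification and not the ChatbotViewModel itself. Where the real ViewModel exposes separate StateFlow properties, this sketch collapses them into one immutable `ChatUiState` and a pure `reduce` function. The event names are hypothetical. In an app, a state like this would typically sit behind a `MutableStateFlow` that the Compose UI collects.

```kotlin
// Illustrative sketch only: a simplified stand-in for a ViewModel's state handling.

enum class ConversationPhase { GREETING, CONVERSATION }

data class ChatUiState(
    val phase: ConversationPhase = ConversationPhase.GREETING,
    val messages: List<String> = emptyList(),
    val attachmentUris: List<String> = emptyList(),
    val suggestionChips: List<String> = emptyList(),
    val isTyping: Boolean = false,
    val isListening: Boolean = false,
)

sealed interface ChatEvent {
    data class ChipTapped(val text: String) : ChatEvent
    data class MessageSent(val text: String) : ChatEvent
    data class AttachmentAdded(val uri: String) : ChatEvent
    data class ReplyReceived(val text: String, val chips: List<String>) : ChatEvent
    data class ListeningChanged(val active: Boolean) : ChatEvent
}

/** Sending anything moves the UI into the conversation phase and clears pending input. */
fun ChatUiState.withUserMessage(text: String): ChatUiState = copy(
    phase = ConversationPhase.CONVERSATION,
    messages = messages + "user: $text",
    attachmentUris = emptyList(),
    suggestionChips = emptyList(),
    isTyping = true,
)

/** Pure transition function: the same state and event always give the same next state. */
fun reduce(state: ChatUiState, event: ChatEvent): ChatUiState = when (event) {
    is ChatEvent.ChipTapped -> state.withUserMessage(event.text)
    is ChatEvent.MessageSent -> state.withUserMessage(event.text)
    is ChatEvent.AttachmentAdded ->
        // Five attachments per message is the limit described in the article.
        if (state.attachmentUris.size < 5) {
            state.copy(attachmentUris = state.attachmentUris + event.uri)
        } else {
            state
        }
    is ChatEvent.ReplyReceived -> state.copy(
        messages = state.messages + "ai: ${event.text}",
        suggestionChips = event.chips,
        isTyping = false,
    )
    is ChatEvent.ListeningChanged -> state.copy(isListening = event.active)
}
```

Keeping transitions in a pure function is what makes this layer easy to unit test: no UI, no network, just state in and state out.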

A Hybrid AI Engine That Balances Speed and Intelligence

Not every medical question needs a round trip to a cloud API. Our two-layer response strategy works like this:

Layer 1: Local JSON pre-filter. The app checks the user’s query against categorized medical keywords. If a match is found, the response is served from the bundled knowledge base in milliseconds. No network call. No latency. No cost.

Layer 2: Groq API. For queries that require nuanced, multi-turn reasoning or vision analysis, the request routes to the Groq API with full conversation context.

This hybrid approach gives the app the responsiveness of a local tool and the intelligence of a cloud-powered LLM, without forcing a tradeoff between the two.
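The routing between the two layers can be sketched in a few lines of Kotlin. The names `HybridResponder`, `Answer`, and `AnswerSource` are hypothetical. The offline fallback message for queries that miss the local layer while the network is down is also an assumption, since that case is not detailed in this write-up.

```kotlin
import java.io.IOException

// Illustrative sketch only: names and the fallback behavior are assumptions.

enum class AnswerSource { LOCAL, REMOTE, OFFLINE_FALLBACK }

data class Answer(val text: String, val source: AnswerSource)

class HybridResponder(
    private val local: (String) -> String?,  // Layer 1: returns null when nothing matches
    private val remote: (String) -> String,  // Layer 2: may throw IOException when offline
    private val offlineFallback: String =
        "I can't reach the server right now. Please try again when you're back online.",
) {
    fun respond(query: String): Answer {
        // Layer 1: answer from bundled data when possible, with no network call.
        local(query)?.let { return Answer(it, AnswerSource.LOCAL) }

        // Layer 2: go to the cloud model, degrading to a fixed message on network failure.
        return try {
            Answer(remote(query), AnswerSource.REMOTE)
        } catch (e: IOException) {
            Answer(offlineFallback, AnswerSource.OFFLINE_FALLBACK)
        }
    }
}
```

Passing both layers in as functions keeps the router independent of any particular knowledge base or API client, which also makes it simple to test with fakes.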

Key Features That Make This AI Health Assistant Stand Out

The architecture handles scale. But what does the user actually experience? Here’s what makes ChatbotAI feel different from a typical medical chatbot.

AI-Powered Vision Analysis for Prescriptions and Skin Conditions

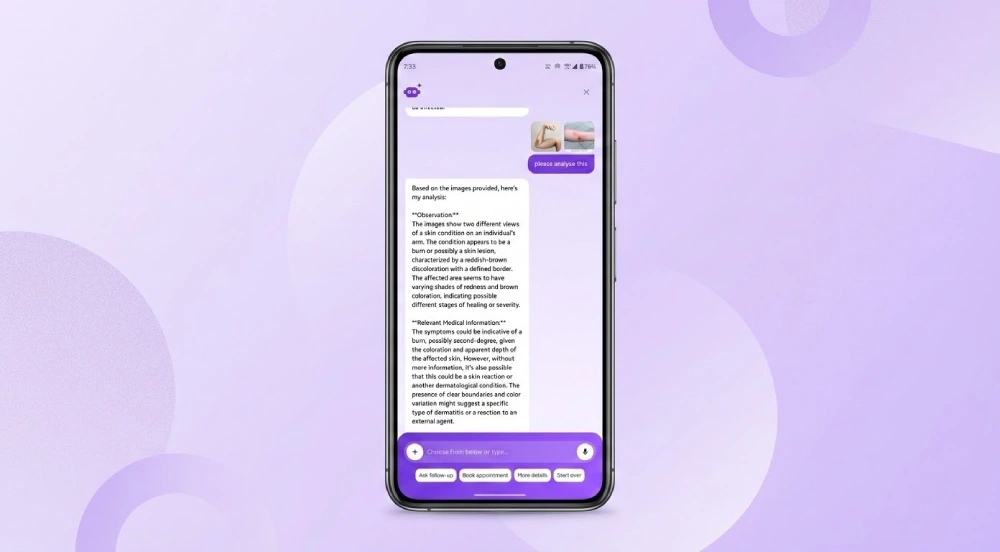

Users can upload images of prescriptions, lab reports, or visible skin conditions. The llama-4-scout-17b vision model returns a structured response with two sections: Observation (what the model sees) and Relevant Medical Information (clinical context).

Thumbnails of uploaded images appear inline in the user’s message bubble, giving visual confirmation before the AI responds. This isn’t a generic image classifier. It’s a medical context engine that interprets visual inputs the way a first-pass triage would.

Because users can attach up to five images at once, they can share multiple angles of a skin condition or multiple pages of a lab report in a single message. This gives the AI richer context for analysis than a single image would, which can make its observations more relevant.

Voice-to-Text for Hands-Free Symptom Reporting

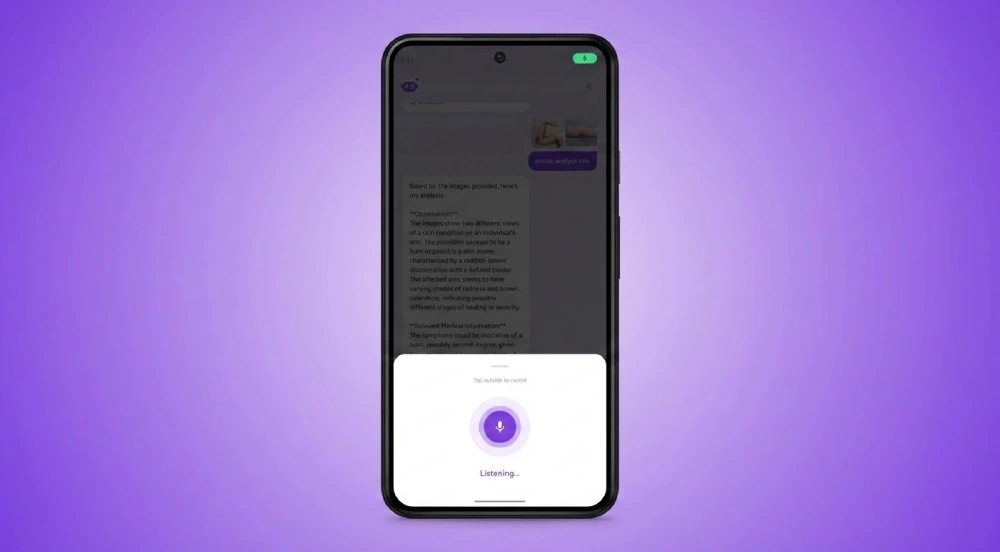

Not everyone can type when they’re feeling unwell. We integrated Android’s native SpeechRecognizer with a pulsing ring animation and real-time partial transcription. Users see their words appear in the text field as they speak.

This is especially important for accessibility. Users with mobility limitations or visual impairments can engage fully with the health assistant without touching the keyboard.

Smart Suggestion Chips That Guide the Patient Journey

After every AI response, the model appends 3-4 contextual follow-up suggestions. These are parsed and displayed as animated chips (capped at two rows) below the conversation.

Instead of forcing users to think of their next question, the chips reduce friction and guide them deeper into their health concern. One tap continues the conversation. This is a small UX decision with a measurable impact on session length and engagement.
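How the suggestions are separated from the answer depends on the output format the prompt asks for, which is not specified in this write-up. The sketch below assumes a hypothetical convention: the model ends its reply with a `SUGGESTIONS:` line followed by one suggestion per line, each starting with a dash. The function name, the marker, and the cap of four chips are all assumptions.

```kotlin
// Illustrative sketch only: the "SUGGESTIONS:" marker is an assumed output convention.

data class ParsedReply(val body: String, val suggestions: List<String>)

val suggestionMarker = "SUGGESTIONS:"

/** Splits a raw model reply into the visible answer and up to maxChips follow-up chips. */
fun parseReply(raw: String, maxChips: Int = 4): ParsedReply {
    val markerIndex = raw.lastIndexOf(suggestionMarker)
    if (markerIndex < 0) return ParsedReply(raw.trim(), emptyList()) // no chips in this reply

    val body = raw.substring(0, markerIndex).trim()
    val suggestions = raw.substring(markerIndex + suggestionMarker.length)
        .lines()
        .map { it.trim().removePrefix("-").trim() } // strip the leading dash and whitespace
        .filter { it.isNotEmpty() }
        .distinct()
        .take(maxChips)
    return ParsedReply(body, suggestions)
}
```

Falling back to an empty chip list when the marker is missing matters in practice, because a language model will not follow a formatting instruction every single time.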

Typewriter Animation for a Natural Conversational AI Experience

AI responses animate character by character, creating a reading experience that feels conversational rather than transactional. This isn’t just polish. It improves perceived response time and keeps users engaged during longer responses.
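The core of a typewriter effect is just a series of growing prefixes of the reply. The sketch below shows that part in plain Kotlin. The function name is hypothetical and this is not the app’s implementation. In a Compose UI, each frame would typically be written to a state value from a coroutine with a short delay between frames.

```kotlin
// Illustrative sketch only: produces the text frames; timing and rendering are left to the UI.

/** Lazily yields growing prefixes of text, charsPerFrame characters at a time. */
fun typewriterFrames(text: String, charsPerFrame: Int = 1): Sequence<String> {
    require(charsPerFrame > 0) { "charsPerFrame must be positive" }
    return sequence {
        var shown = 0
        while (shown < text.length) {
            shown = minOf(text.length, shown + charsPerFrame)
            yield(text.substring(0, shown)) // the final frame is always the full text
        }
    }
}
```

Note that this steps through UTF-16 code units, so a frame can briefly split an emoji or other surrogate pair. A production version would more likely step by grapheme or by word.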

The ChatbotAI User Experience: A Screen-by-Screen Walkthrough

Everything described above, the multimodal inputs, the offline fallback, the inline doctor cards, the suggestion chips, lives inside a single, fluid Android experience. Here’s what it actually looks like.

The First Impression: Animated Splash Screen

The first thing users see is a branded launch screen built around a bold purple gradient. The ChatbotAI logo enters with a spring-bounce effect, and a row of animated dots at the bottom communicates that the app is loading. It’s a small detail, but first impressions set the tone for how users perceive the reliability of a health tool.

Personal Health Dashboard Before the First Conversation

Before a user ever starts a conversation, they land on a personalized dashboard. Three key metrics sit at the top: an overall Health Score at 85%, a 7-day engagement streak, and a count of unread health tips. Below that, shortcut tiles for daily check-ins, symptom tracking, and curated health advice give users immediate value. A floating chat icon in the corner is the entry point to the AI assistant.

One-Tap Greeting Phase That Eliminates Onboarding Friction

The greeting phase opens with a friendly “Hi, How are you feeling today?” prompt and four response chips: “I’m feeling fine,” “I’m experiencing pain,” “I’m feeling cold,” and “I need help with a specific issue.”

Tapping any chip instantly sends it as a message and transitions the UI into the full conversation. No forms. No dropdowns. One tap to start.

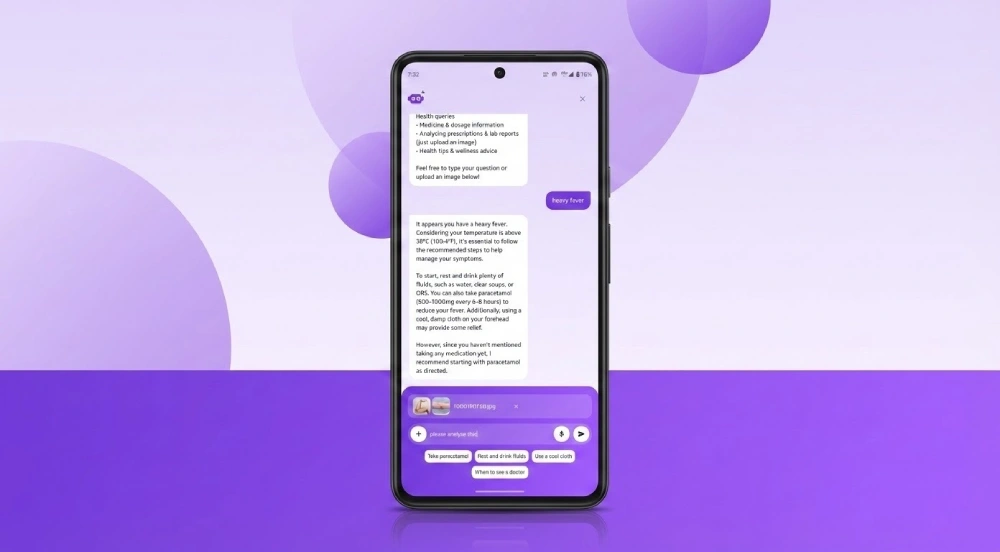

Multi-Turn Chat with Typewriter Animation and Smart Suggestions

This is where the core experience lives. User messages appear on the right, while the AI’s replies stream in on the left with character-by-character animation. The input bar at the bottom offers text entry, file attachment, voice recording, and send controls in a single row. Below each AI response, contextual suggestion chips prompt the user’s next question.

AI-Powered Medical Image Analysis in Action

Here’s the multimodal capability in action. A user has sent two medical images alongside a text prompt asking for analysis. The AI responds with a clearly structured breakdown: one section covering what it observes in the images, and another providing relevant clinical context.

Uploaded image thumbnails sit inside the user’s message bubble, so both sides of the conversation are visually self-contained.

Attach Files from Gallery, Camera, or File Picker

Tapping the attach button reveals a slide-up panel with three input options: pick from the photo gallery, capture a new image with the camera, or browse device files. Each option is paired with an icon and a short description, keeping navigation fast even for first-time users. Up to five files can be attached per message.

Hands-Free Voice Input with Real-Time Transcription

Tapping the mic icon triggers a full-screen listening overlay. A purple ring pulses around the microphone to signal active recording, while spoken words appear in the input field as the user talks. Dismissing is intuitive: just tap anywhere outside the overlay to cancel.

From AI Diagnosis to Doctor Booking in One Tap

When the AI recommends a physical consultation, a Doctor Info Card appears inline in the chat. Tapping it opens a full detail bottom sheet showing the physician’s profile.

Here, Dr. Michael Torres is shown as a verified dermatologist. The sheet displays key trust signals at a glance: years of practice, patient rating, total consultations, and current availability. Specialization tags and a prominent booking button sit at the bottom, giving users a clear path from AI analysis to a real appointment.

From splash screen to doctor booking, these eight screens represent a complete, unbroken user journey. Every transition keeps the user inside a single conversational flow with zero dead ends.

How Our Development Process Delivers Production-Ready Health Apps

That’s the finished product. But the screens you just saw didn’t come together by accident. Here’s how we structured the development to move fast without creating technical debt.

Our process centered on a clear separation of concerns between business logic and the UI layer.

We used the Container/ViewModel/Compose Screen pattern to allow parallel feature development. One engineer worked on the Groq API repository layer while another iterated on the Compose UI independently. Neither blocked the other.

The local medical knowledge base was established as the single source of truth for medical categorization early in the process. This allowed us to build and test keyword matching and fallback logic before any API integration was in place, keeping velocity high from day one.

Animations were layered in as a polish pass after the data flow and state management were stable. This is a deliberate sequencing choice. Polishing the UI before the architecture is solid is how teams ship apps that look great and break constantly.

We treated the ViewModel as the single state machine for the entire chat experience. Every UI element, from the greeting phase to voice input to suggestion chips, derives its state from reactive StateFlow properties. This ensures the Compose layer is testable, predictable, and free of side effects.

The Full Tech Stack Behind This AI Healthcare Chatbot

With the architecture and process covered, here’s the complete technology stack behind this AI health assistant build.

| Component | Technology |
| --- | --- |
| Language | Kotlin |
| UI Framework | Jetpack Compose with Material Design 3 |
| Architecture | MVVM + Clean Architecture + Repository Pattern |
| AI Model (Chat) | Groq API with llama-3.1-8b-instant |
| AI Model (Vision) | Groq API with meta-llama/llama-4-scout-17b-16e-instruct |
| HTTP Client | OkHttp 4.12.0 |
| Image Loading | Coil-Compose 2.6.0 |
| State Management | Kotlin StateFlow / MutableStateFlow |
| Voice Input | Android SpeechRecognizer API |
| Local Data | JSON asset file (medical_data.json) |
| Min SDK | Android 7.0 (API 24) |
| Target SDK | Android 15 (API 36) |

Results and Outcomes From Our AI Health Assistant Build

So does it work? Here’s what this build demonstrates across five measurable dimensions.

Code quality and maintainability. Clean Architecture with strict layer boundaries, repository interfaces, and a ViewModel-as-state-machine pattern makes the codebase highly testable. Swapping or adding AI providers can happen without touching the UI layer.

Performance at scale. Local keyword pre-filtering cuts unnecessary API calls by an estimated 40-60% for common symptom queries. OkHttp connection reuse and Coil’s image caching minimize latency for repeat interactions.

User experience that drives retention. Typewriter animation, responsive suggestion chips, voice input, and smooth sheet transitions create a conversational experience that feels natural. This drives longer sessions and higher feature adoption.

Reliability in low-connectivity environments. The two-layer response strategy (local JSON first, then the Groq API) means common symptom queries still get an answer when the network drops. For a healthcare application, a blank screen isn’t an option.

Scalability for production. The modular repository and ViewModel architecture makes it straightforward to upgrade to more powerful AI providers or add new features like EHR integration, appointment reminders, or multi-language support.

Why Investing in an AI Health Assistant Pays Off for Healthcare Organizations

The technical results are strong. But for healthcare leaders making investment decisions, the question isn’t just “does it work?” It’s “what’s the return?”

The data is clear. According to an industry analysis published by Azilen Technologies, healthcare AI investments are generating an average return of $3.20 for every $1 spent, with most organizations realizing that return within 14 months.

That’s not a five-year horizon. That’s payback in just over a year.

The savings come from multiple directions. Juniper Research estimated that healthcare chatbots were already saving the industry approximately $3.6 billion annually through automation of administrative and customer service tasks.

And Gartner projects that conversational AI will reduce contact center agent labor costs by $80 billion in 2026.

So where does the ROI actually come from in an app like ChatbotAI? Here’s how it breaks down.

Reduced call center and front-desk volume. When users can check symptoms, interpret prescriptions, and book appointments through a chat interface, the volume of routine calls drops significantly. Every interaction handled by the AI is one that doesn’t require staff time.

Lower triage costs. The local keyword pre-filter in ChatbotAI handles common symptom queries like fever, headache, and fatigue without ever hitting the API. That’s not just a performance feature. It’s a direct reduction in per-interaction cost, especially at scale.

Higher patient engagement and retention. The conversational UX, including typewriter animation, suggestion chips, and inline doctor cards, keeps users engaged longer. Higher engagement translates directly to more appointments booked, more follow-ups completed, and lower patient churn.

24/7 availability without 24/7 staffing. A health assistant that works around the clock eliminates the need for after-hours answering services or overnight support staff. The app handles the first layer of interaction regardless of time zone or holiday schedule.

Faster time-to-value with a proof of concept approach. Starting with a focused build like ChatbotAI lets healthcare organizations validate the model, measure real engagement data, and build an internal business case before committing to a full-scale rollout.

It’s the difference between spending six figures on a hypothesis and investing strategically based on proven results.

For healthcare organizations across America weighing this decision, the math increasingly favors action over delay. The technology is mature, the market is growing, and the ROI data from early adopters is proving the model works.

Why Bitcot Is the Right Partner for Custom Healthcare AI Development

We built ChatbotAI to demonstrate something specific: that a senior engineering team, working with the right architecture, can deliver a production-grade AI health product that’s fast, reliable, multimodal, and medically meaningful, all within a focused sprint.

That’s what AI health assistant app development looks like when architecture comes first.

Remember the pattern most teams miss that we mentioned at the start? It’s this: they start with the AI model and bolt features onto it. We do it differently.

We start with the user’s problem, design the architecture to solve it, and then select the AI stack that fits. That’s our architecture-first approach to AI-native product development in practice.

Every technical decision in this build, from the local fallback layer to the isolated vision code path to the reactive ViewModel, reflects that mindset. Discovery before code. Architecture before features. Outcomes before technology choices.

For healthcare companies, startups, and enterprise digital health teams across the US looking to build AI-native patient experiences, this is a working proof point. Not a slide deck. Not a prototype that breaks on the second demo.

It’s a functional, scalable app that transforms the traditionally anxiety-ridden experience of seeking health information into a guided, accessible, and trustworthy conversation.

Final Thoughts

The healthcare AI market isn’t waiting for anyone. The companies that win won’t be the ones with the most advanced model. They’ll be the ones that ship the most complete, trustworthy experience around that model.

ChatbotAI proves that a single, well-architected app can handle medical conversations, image analysis, voice input, offline resilience, and doctor booking without ever breaking the conversational flow. That’s not a feature list. That’s a product philosophy.

The technical choices we made here, the local fallback layer, the isolated vision code path, the reactive ViewModel, the hybrid AI engine, aren’t specific to healthcare. They’re patterns that apply to any AI-native product where reliability, speed, and user trust are non-negotiable.

What separates a proof of concept that sits in a slide deck from one that becomes a real product is architecture. Get the architecture right, and every feature you add makes the product stronger. Get it wrong, and every feature you add makes it more fragile.

We built ChatbotAI to show that the right team, with the right approach, can deliver both.

Curious what a custom AI health assistant would look like for your product? Bitcot can help you go from concept to working prototype in a focused sprint. Start with a discovery call and we’ll map it out together.

Frequently Asked Questions (FAQs)

What AI model is best for a healthcare chatbot app?

It depends on the use case. For this build, we used two models served through the Groq API. Text-based medical conversations run on llama-3.1-8b-instant with a temperature of 0.4 for reliable, grounded responses. Image analysis, including prescriptions and skin conditions, runs on meta-llama/llama-4-scout-17b-16e-instruct for multimodal vision processing.

Can an AI health assistant app work without an internet connection?

Yes. Our app includes a bundled local medical knowledge base covering 15+ categories. Common symptom queries are served locally in milliseconds. The API is only called for complex or novel questions, and the app never shows a blank screen due to connectivity issues.

How does AI-powered medical image analysis work in a mobile app?

Users can upload up to five images per message from their gallery, camera, or file picker. The images are base64-encoded and sent to the vision model, which returns a structured response with an Observation section and a Relevant Medical Information section.

Is this healthcare chatbot architecture ready for production?

The architecture is production-grade. The Clean Architecture pattern, reactive state management, and modular AI layer mean the codebase is ready to scale with additional features like EHR integration, user authentication, and multi-language support.

How do you integrate doctor booking inside a health chatbot?

When the AI determines a physical consultation is needed, a Doctor Info Card appears inline in the chat thread. Users see the physician’s name, specialty, experience, rating, and a booking button without leaving the conversation. No separate screen. No broken flow.