Key Takeaways:

- Enterprise DevOps is a cultural and organizational transformation first – tooling decisions come second, not first.

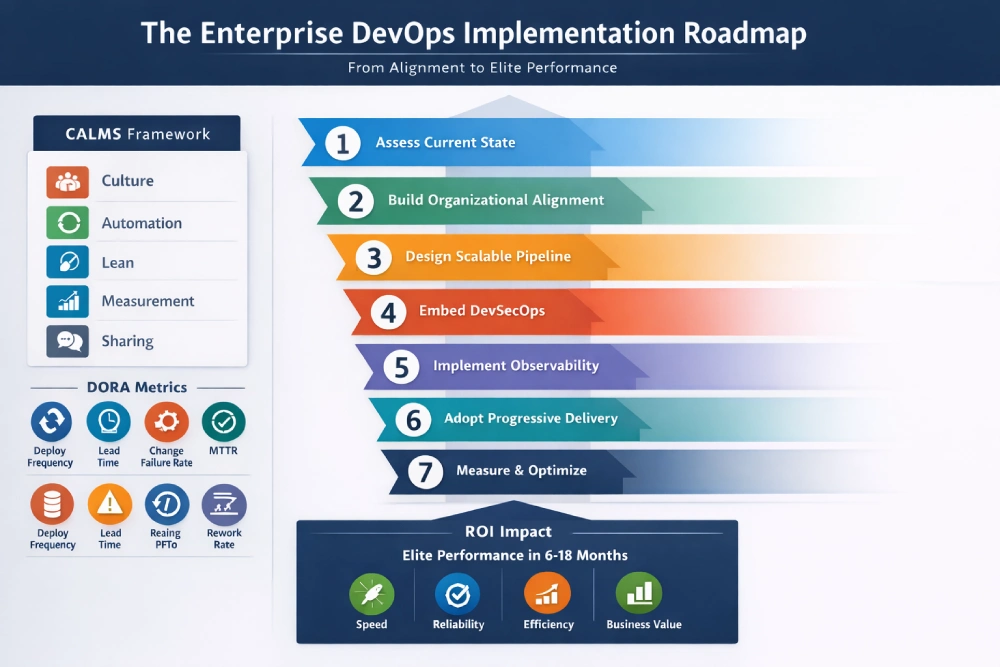

- The CALMS framework (Culture, Automation, Lean, Measurement, Sharing) is the operating model that makes the toolchain meaningful at scale.

- A seven-step implementation roadmap – sequenced from organizational alignment through platform engineering maturity – shows exactly where to start and in what order.

- DevSecOps, full-stack observability, and progressive delivery are the three capabilities most consistently missing from failed enterprise transformations.

- DORA metrics (deployment frequency, lead time for changes, change failure rate, failed deployment recovery time, and rework rate) are the most reliable organizational health indicators available – and should be baselined before any tooling decision is made.

- ROI is measurable: elite DevOps organizations consistently outperform on delivery velocity, reliability, engineering efficiency, and business outcomes within 12 months of disciplined implementation.

Your Delivery Pipeline Is a Competitive Liability – and the Clock Is Running

Dev throws code over the wall to Ops. Security reviews happen the week before launch. QA sits in its own silo. And every sprint, the friction compounds quietly – until it doesn’t, and a release becomes a crisis.

This isn’t a technology failure. It’s an organizational inheritance problem – one that has quietly turned the software delivery lifecycle into a source of compounding risk rather than compounding advantage. And it traces back, oddly enough, to Henry Ford.

In the 1920s, Ford divided his workforce into layers of functional specialists – engineers here, operations there, quality control somewhere else entirely. The model was built for manufacturing efficiency. Alfred Sloan scaled it into the template for the modern corporation.

And one hundred years later, most enterprise engineering organizations are still running a version of that same structure without realizing it.

Enterprise DevOps is how you finally break out of it.

According to the DORA State of DevOps research program – based on over a decade of data from tens of thousands of professionals globally – elite-performing organizations deploy on demand, often multiple times per day, while low performers ship monthly or quarterly.

Elite teams also maintain change failure rates up to eight times lower. That gap is not explained by budget. It’s explained by delivery architecture: the systems, culture, and execution discipline that either compound your engineering advantage or quietly erode it.

This guide is written for CTOs, VPs of Engineering, and technical co-founders at scaling enterprises – teams operating distributed infrastructure, managing regulatory complexity, and carrying real delivery risk every sprint. If you’re looking for a beginner checklist, this isn’t it.

If you’re ready to build a delivery system that holds under pressure and grows more reliable as you scale, read on – starting with what enterprise DevOps actually means at this scale, and why it behaves differently than anything you’ve read about smaller teams.

What Is Enterprise DevOps and How Is It Different from Traditional DevOps?

Enterprise DevOps is not a tool purchase. It’s not a rebranding of your Jenkins setup. And it is definitely not the same as the DevOps that works cleanly inside a 15-person startup.

Enterprise DevOps is the organizational and technical unification of software delivery – connecting development, security, operations, and business outcomes into a single, observable, continuously improving system – across distributed teams, complex infrastructure, legacy constraints, and governance requirements that simply don’t exist at smaller scale.

One of the most persistent sources of confusion at the leadership level is treating CI/CD and DevOps as the same thing. They are not – and understanding the relationship between CI/CD and DevOps is foundational before any tooling decisions are made. DevOps is the cultural and organizational operating model.

CI/CD is the technical delivery mechanism that expresses that model in code.

The cultural operating model underpinning enterprise DevOps is called CALMS: Culture, Automation, Lean, Measurement, and Sharing. It was formalized by DevOps practitioners as a maturity framework precisely because the toolchain is meaningless without the organizational operating principles behind it.

Culture creates shared accountability across Dev, Ops, and Security. Automation removes toil and human error from repeatable processes. Lean thinking eliminates waste in the delivery pipeline. Measurement drives decisions with real data rather than instinct.

Sharing prevents knowledge from siloing back into functional teams the moment the transformation effort loses momentum.

“In high-performing organizations, everyone within the team shares a common goal – quality, availability, and security aren’t the responsibility of individual departments, but are a part of everyone’s job, every day.”

– Gene Kim, Co-author, The DevOps Handbook & The Phoenix Project

Every enterprise DevOps implementation that fails does so because one or more of these pillars was treated as optional. The cost of that failure is not abstract – it shows up in downtime, attrition, and lost competitive ground, often before leadership connects the dots.

What Does a Failed DevOps Transformation Actually Cost an Enterprise?

That cost has a number – and it’s rarely discussed honestly before a transformation begins.

For years, the IT industry cited enterprise downtime costs of $5,600 per minute as gospel. EMA Research (2024) has since shown that figure was never based on formal research. Their independent field study puts the real average at over $14,000 per minute, with large enterprises in finance, healthcare, and e-commerce reporting costs well above that figure. That’s the direct cost.

The indirect cost – developer attrition, accumulated technical debt, slowed feature velocity, lost market share – is typically three to five times larger and far harder to attribute on a P&L.

Research consistently shows that engineering teams at organizations with immature delivery practices spend between 40% and 50% of their time on unplanned work and rework rather than building forward progress. For a team with a $10M annual engineering payroll, that’s $4M to $5M per year producing zero competitive output.

For a funded enterprise carrying Series A or B capital, this isn’t an operational efficiency problem. It’s a capital allocation crisis.

The villain in this story isn’t your toolchain. It’s delivery risk – the compounding, invisible kind that erodes customer trust, burns runway, and turns every release night into a war room exercise. The roadmap below is how you dismantle it, step by step.

The Enterprise DevOps Implementation Roadmap

Knowing the cost of inaction is only useful if you have a clear path forward. Most organizations understand what DevOps is in theory.

The failure point is almost always execution sequencing – doing the right things in the wrong order, or skipping foundational steps because they feel slow compared to the excitement of standing up new tooling.

The roadmap below is sequenced deliberately. Each step builds on the last. Organizational alignment before architecture. Architecture before security embedding. Observability before progressive delivery.

There is a compounding logic to this order that reflects how real, durable transformations actually happen inside large organizations.

What you will not find here is a promise of overnight transformation. What you will find is a sequenced, senior-level execution path that takes an enterprise from delivery fragility to measurable, elite-tier performance – with the ROI case for every step built in.

Step 1 – Assess Your Current State Before Building Anything

Before any tool is evaluated or any team is restructured, you need a clear-eyed view of where you actually stand. Every enterprise DevOps transformation that succeeds starts with an honest baseline – not a tool audit, but a delivery capability audit.

You need to understand where your organization sits across the five DORA metrics: deployment frequency, lead time for changes, change failure rate, failed deployment recovery time, and rework rate. These are not vanity metrics.

They are leading indicators of delivery system health and the closest thing the industry has to an objective organizational health check.

Before you make a single tooling decision, map your current DORA performance against its established performance tiers. If your change failure rate is above 15% or your recovery time after failed deployments is measured in hours rather than minutes, the problem is structural, not instrumental.

Buying a new CI/CD tool won’t fix it.

What you’re typically going to surface at this assessment stage falls into three categories. Pipeline fragility – builds that break frequently, manual approval gates that exist for bureaucratic rather than governance reasons, and no real-time visibility into deployment status.

Environment drift – dev, staging, and production environments that don’t behave consistently, producing the classic “works on my machine” failure pattern at a scale that affects every release.

And security bolted on at the end – penetration testing happening the week before a major release instead of being embedded across the pipeline.

Most organizations find at least two of these three. Usually all three.

Also quantify the dollar cost of your current MTTR at this stage. If a production incident takes your team four hours to resolve and your platform handles $100,000 per hour in transaction volume, your MTTR cost is immediately visible and defensible as a budget case for transformation investment.

Document everything from this assessment. It becomes the brief that prevents wasted effort in the first six months.

Step 2 – Build Organizational Alignment Before You Touch Tooling

This is where most enterprise DevOps implementations die quietly.

Teams invest in Kubernetes. They stand up GitHub Actions pipelines. They buy Datadog. And six months later, nothing has fundamentally changed because the organizational change management was treated as secondary – something to deal with after the tools were in place.

It doesn’t work that way. DevOps is a cultural operating model first. Tools are expressions of that model, not the model itself.

Organizational alignment in practice means three things.

First, a genuine executive sponsor – not a supportive one who attends quarterly reviews, but someone who owns outcomes, can break ties when Dev and Ops priorities conflict, and will defend the transformation budget when the first rough quarter hits.

Second, shared KPIs between engineering, security, and products that make “fast” and “stable” complementary goals rather than competing ones.

Third, a DevOps Center of Excellence – a small, senior team responsible for setting standards, governing toolchain decisions, coaching squads through the transition, and actively reducing friction in the inner dev loop so that developer experience (DevEx) doesn’t degrade under the weight of organizational change.

The team structure underneath the CoE should follow the Team Topologies model developed by Matthew Skelton and Manuel Pais. It defines four team types specifically relevant to enterprise-scale DevOps: Stream-aligned teams that own end-to-end delivery of a specific product or service,

Platform teams that build and maintain the internal developer platform that other teams consume, Enabling teams that work temporarily alongside stream-aligned teams to build missing capabilities, and Complicated Subsystem teams that own components requiring deep specialist expertise.

Getting this structure right before the toolchain is built prevents the permanent coordination overhead that kills enterprise DevOps initiatives at scale.

Organizations like Capital One and Spotify have used variants of this model explicitly to manage DevOps at enterprise scale without recreating the silos they were trying to dismantle.

With people, structure, and sponsorship locked in, the next step is where most teams want to start – and where starting too early causes the most damage.

Also Read: DevOps Engineer Vs DevOps Consultant, Know The Difference

Step 3 – Design Your Pipeline Architecture for Enterprise Scale

The pipeline architecture is where strategy becomes concrete. With alignment established, the delivery system can be designed for your actual scale – not a generic template, but infrastructure that reflects your team topology, your regulatory environment, and your current infrastructure constraints.

For a deeper look at how enterprises evaluate and select tooling at this stage, our guide to enterprise CI/CD tools covers the full decision framework.

Source control and branching strategy comes first. Trunk-based development outperforms GitFlow for teams optimizing deployment frequency. The key enabler at enterprise scale is feature flags – tools like LaunchDarkly or Unleash allow incomplete features to live in the trunk without being visible to end users, eliminating the branch complexity that GitFlow creates and the merge conflicts that slow large teams down.

With branching resolved, the next layer is continuous integration and deployment automation. Every commit should trigger an automated build and test cycle. GitHub Actions, GitLab CI, and CircleCI all handle this at enterprise scale.

The architectural priority is feedback loop speed – a CI pipeline that takes 45 minutes is a productivity tax that compounds across hundreds of daily commits. Elite teams target sub-10-minute CI feedback through parallel test execution and build caching.

For the techniques that make this achievable in practice, see our detailed breakdown of techniques to reduce CI feedback loop time.

Here is how the leading enterprise CI/CD platforms compare on the dimensions that matter most to technical decision-makers:

| Platform | Enterprise Security | Native Kubernetes | Pricing Model | Self-Hosted |

| GitHub Actions | OIDC, secret scanning, audit log | Good (via Actions) | Per-minute compute | GitHub Enterprise Server |

| GitLab CI | Built-in SAST/DAST, compliance pipelines | Excellent (built-in) | Seat-based | GitLab Self-Managed |

| CircleCI | Audit log, RBAC, IP ranges | Good (orbs) | Per-compute | CircleCI Server |

| Jenkins | Plugin-dependent | Plugin-dependent | Free (infra cost) | Self-hosted only |

Once your CI platform is chosen, the delivery layer follows. For continuous delivery and cloud-native infrastructure modernization, Kubernetes managed via AWS EKS, Google GKE, or Azure AKS is the enterprise standard for containerized workloads.

Docker containers package application code with all dependencies into portable, consistent units that eliminate environment drift across dev, staging, and production. Helm charts manage configuration and release versioning.

ArgoCD or Flux handle GitOps-based deployments, where infrastructure state is declared in version-controlled repositories and continuously reconciled – removing manual CLI commands from the deployment process entirely.

Underneath all of this sits infrastructure as code. Terraform remains the dominant tool for multi-cloud enterprise environments. Pulumi is gaining traction for teams that prefer writing infrastructure definitions in TypeScript, Python, or Go rather than HCL.

Either way, infrastructure should be version-controlled, peer-reviewed through pull requests, and deployed through the same pipeline as application code – never managed by individuals with direct console access.

With the pipeline architecture in place, there is one layer that most teams add too late and pay for too dearly.

Also Read: Cloud-Native Application Development: Challenges, Solutions & Costs

Step 4 – Embed Security as a First-Class Citizen

Calling this step “DevSecOps” risks making it sound like a separate initiative. It isn’t. Security in a mature DevOps pipeline is not a gate – it’s a continuous property of the pipeline itself.

Shifting security left – or more precisely, implementing shift-left testing across both functional and security validation – means embedding automated checks at every stage from commit to deployment.

Static Application Security Testing tools like Checkmarx or Semgrep scan code on every commit, before human review even begins. Software Composition Analysis tools like Snyk continuously audit open-source dependencies for known CVEs – given that the average enterprise application has 40 to 60% open-source components, this is not optional.

Container scanning tools like Trivy or Grype check images before they reach the registry. All of this sits alongside automated quality engineering that ensures functional correctness and security hardening move through the pipeline together rather than in separate lanes.

Secrets management must be centralized. HashiCorp Vault or AWS Secrets Manager handles this at enterprise scale. Secrets in environment variables or source control – even private repositories – represent a category of risk that has caused public security incidents at organizations that should have known better.

For enterprises under SOC 2, HIPAA, or ISO 27001 frameworks, this architecture enables continuous compliance – turning what was a quarterly manual scramble into a continuous, automated, auditable property of every deployment.

The compliance audit doesn’t change your deployment schedule because compliance is embedded in every deployment. IBM’s 2025 Cost of a Data Breach Report puts the average enterprise breach cost at $4.44 million.

The entire annual cost of a comprehensive DevSecOps toolchain at enterprise scale is typically less than 5% of that number.

Security without visibility, however, is only half the equation. Once the pipeline is protected, you need to be able to see inside it in real time.

Also Read: Top 15 Software Development Trends 2026

Step 5 – Build Full-Stack Observability Into the System

Monitoring tells you something is broken. Observability tells you why – specifically, which service, which dependency, which change, and what the blast radius is.

That distinction matters more than most teams appreciate until they’re debugging a production incident at 2am with logs that tell them nothing useful.

“Our research has consistently shown that speed and stability are outcomes that enable each other. High-performing teams don’t trade off between them – they achieve both simultaneously through strong technical practices and a healthy culture.”

– Nicole Forsgren, PhD, Co-author, Accelerate: The Science of Lean Software and DevOps

In a distributed, microservices-based enterprise architecture, you need signal coverage across three types: metrics – quantitative measurements of system behavior, logs – discrete records of events, and traces – end-to-end request journeys across service boundaries.

Alongside mean time to recovery (MTTR), tracking mean time to detect (MTTD) is equally important – because a 30-minute recovery that took four hours to detect is still a four-and-a-half-hour incident from the customer’s perspective.

The OpenTelemetry standard has become the industry’s vendor-neutral instrumentation layer, supporting auto-instrumentation for most major languages and frameworks and preventing observability vendor lock-in.

For the data platform layer, the Grafana stack – Prometheus for metrics, Loki for logs, Tempo for traces – is the dominant open-source choice. For enterprises preferring managed solutions, Datadog and New Relic offer comprehensive observability with strong Kubernetes integration and lower operational overhead.

The most important architectural decision here is not which tool to use. It’s ensuring that observability is instrumented from day one of service development, not retrofitted after a production incident.

Teams that instrument late spend 60 to 90 days chasing observability gaps after every major incident rather than fixing root causes.

Practically, if your team cannot answer “what is our P95 API response time over the last 30 days, broken down by service?” in under 60 seconds, your observability posture needs immediate attention.

SRE teams at mature organizations use this data to define and track Service Level Objectives – customer-facing reliability commitments that connect engineering performance directly to business trust and commercial outcomes. Once observability is solid, you have the telemetry foundation needed to do the next step safely.

Also Read: Legacy System Modernization and Migration: Key Strategies, Services, and Costs

Step 6 – Implement Progressive Delivery to Contain Release Risk

With a secure, observable pipeline in place, you can now deploy with genuine confidence – and progressive delivery is where release engineering discipline becomes your competitive advantage. Progressive delivery means releasing changes to a controlled subset of users before broad rollout.

It separates deployment from release, which is one of the most powerful architectural distinctions in modern software delivery.

Canary deployments route a small percentage of traffic – typically 1 to 5% – to a new version while the majority stays on the current stable version.

Feature flags via tools like LaunchDarkly or Unleash allow you to decouple deployment from release entirely: code ships to production but activates only for specific user segments, internal testers, or percentage rollouts under your control.

Blue-green deployments maintain two identical production environments and switch traffic at the load balancer, enabling instant rollback without redeployment.

Tools like Flagger can automate canary analysis. If error rate or latency metrics degrade beyond a defined threshold as the canary percentage increases, the rollout is automatically halted and rolled back without a human having to be awake to catch it.

For enterprise platforms handling significant transaction volumes or large user bases, this is how you eliminate release night war rooms. Releases become routine, reversible, and measurable – not existential events that the entire engineering leadership monitors with anxiety.

Step 7 – Measure, Iterate, and Build Toward Platform Engineering Maturity

Eliminating release anxiety is a milestone, not a finish line. What comes next is building the measurement discipline that turns a one-time transformation into a permanent competitive advantage.

DevOps maturity is not a destination. It’s a compounding improvement system that rewards disciplined, consistent measurement over time.

Establish a quarterly review cycle where you reassess your DORA metrics, review blameless post-mortem culture outputs from production incidents, and evaluate your toolchain against emerging options.

Define your maturity targets explicitly – moving from Low to Medium DORA performance typically takes 6 to 12 months of focused execution. Moving from Medium to Elite is an 18 to 36-month organizational journey depending on legacy system complexity and team scale.

For a detailed framework on measuring DevOps ROI across all four delivery dimensions, Bitcot’s published methodology covers each metric tier with specific calculation models.

DORA’s own research shows that high-performing software delivery teams are consistently more likely to exceed their organizational performance goals on revenue, profitability, and market share.

Once measurement is embedded as a habit, the natural evolution leads somewhere most organizations don’t anticipate – platform engineering.

Where DevOps asks “how do we build better pipelines?”, platform engineering asks “how do we build an internal developer platform that makes every engineering team faster by default?”

Platform teams build self-service infrastructure provisioning, golden path templates, and internal developer portals using frameworks like Spotify’s Backstage, which reduce cognitive load on product engineering teams while maintaining governance and security standards centrally.

Value stream management (VSM) gives leadership visibility into end-to-end delivery flow – from idea to production – and is the natural complement to platform engineering at High and Elite DORA tiers.

Track engineering velocity metrics alongside DORA throughout this journey: unplanned work ratio, sprint velocity trends, and deployment lead time give you a comprehensive delivery health picture that finance and product leadership can engage with directly – not just engineering.

How Bitcot Helps Enterprise Organizations Implement DevOps

Getting the roadmap right is one thing. Executing it inside a real organization, with real constraints, under real delivery pressure, is another – and that is where the right partner changes the outcome.

“Most enterprises don’t have a technology problem. They have a delivery system problem. When we fix how software moves from idea to production, everything else gets easier – the speed, the trust, the competitive position.”

– Raj Sanghvi, Founder & CEO, Bitcot

Bitcot’s DevOps consulting services are built for CTOs and technical founders who are past the exploration phase. Our clients are typically mid-to-large SaaS companies, fintech platforms, and healthcare technology organizations operating distributed teams and complex infrastructure.

They need a partner who can design, build, and transfer ownership of a delivery system without a 12-month integration cycle and without creating a permanent external dependency – and the organizations that get it right do so with partners who’ve already solved the specific problems they’re about to face.

You can explore our track record across automation case studies to see how we’ve applied these principles across industries.

Our engagement model is built around three principles.

Assessment before architecture. We do not propose tooling before understanding your current DORA baseline, team structure, and regulatory context. Every recommendation is grounded in your specific constraints – not a template applied from another engagement.

Senior-to-senior collaboration. Your CTO and VP of Engineering work directly with our principal architects and DevOps leads – not account managers running a playbook. The people responsible for delivery decisions are in the room together from day one.

Knowledge transfer by design. Our goal is to build your internal capability, not extend your dependency on us. Every engagement includes documentation, training, and defined handover milestones. When the engagement ends, your team owns the system fully.

If you are evaluating a DevOps transformation and your current vendor is leading with a tool recommendation before they have understood your architecture, treat that as a serious warning sign.

The Cost of Delay Is Measurable – and Growing

You now have the full picture – the framework, the sequenced steps, the financial case, and the answers to the questions that slow most organizations down. What remains is a decision about timing.

Every sprint that runs through a fragile pipeline is a sprint that compounds your delivery risk. Every release that requires a manual war room is an incident waiting to happen. Every quarter that passes without DORA measurement is a quarter of optimization opportunity lost permanently.

This isn’t theoretical anymore. The data exists. The benchmarks are published. The gap between elite and low-performing DevOps organizations is quantified, documented, and growing every year.

Enterprise DevOps transformation is not a project with a defined end state. It is an ongoing operating capability that either gives you a measurable, compounding competitive advantage or leaves you vulnerable to organizations that have already built it.

The engineering teams moving fastest right now are not doing so because they found a better tool. They made a deliberate architectural decision, executed it with senior engineering talent, built systems designed for continuous improvement, and measured outcomes relentlessly against meaningful benchmarks.

If you are leading an enterprise engineering organization and ready to move from diagnosis to execution, Bitcot’s technical team can begin with a DevOps Readiness Audit.

That means a structured assessment of your current delivery architecture, your DORA baseline, your regulatory constraints, and a prioritized implementation roadmap built for your specific environment.

No template decks. No generic recommendations. Senior engineering expertise applied to your actual system.

Ready to build a delivery system that scales with your business? Let’s start with a strategic consultation.

Frequently Asked Questions (FAQs)

The roadmap above covers the what and the how. These questions address the decisions that technical leaders most commonly face when translating that roadmap into an organizational commitment.

How long does a full enterprise DevOps implementation realistically take?

A foundational implementation – pipeline architecture, IaC setup, observability, and team alignment – typically takes 3 to 6 months for a focused organization with genuine leadership commitment. Reaching Medium DORA performance takes 6 to 12 months.

Reaching Elite performance is an 18 to 36-month organizational journey depending on legacy system complexity and team size. Organizations that rush this process create technical debt faster than they eliminate it.

The question to ask is not “how fast can we implement DevOps?” but “what is the cost of each additional month of delay?”

What is a realistic total cost of ownership for an enterprise DevOps stack?

TCO depends on scale, team size, cloud provider, and whether you build on open-source or managed services. A mid-market enterprise of 200 to 500 engineers running managed Kubernetes, a modern CI/CD platform, enterprise observability,

and secrets management can expect infrastructure and tooling costs in the $120,000 to $300,000 annual range. Engineering labor to build and maintain the platform is typically the dominant cost – often 3 to 5 times the tooling spend.

The more financially relevant question is what your current deployment risk, incident costs, and developer productivity losses are costing per quarter compared to that investment.

How do we implement DevOps in a heavily regulated industry?

Regulated environments – HIPAA, SOC 2, PCI-DSS, ISO 27001 – require DevSecOps architecture from day one, not retrofitted later. This means continuous compliance built directly into the pipeline: audit-ready automated checks in CI, role-based access controls, centralized secrets management,

and container image scanning before deployment. Done correctly, compliance becomes a continuous, automated, auditable property of every deployment rather than a resource-intensive quarterly event. Organizations that design for compliance from the beginning spend significantly less time and money than those who retrofit it.

Should we build our DevOps capability in-house or work with an external partner?

The answer for most enterprises is both, in sequence. External partners with genuine senior-level DevOps expertise accelerate architectural design and initial implementation significantly – they have solved the specific failure modes you are about to encounter, multiple times.

Internal teams own long-term operations, continuous improvement, and organizational knowledge. Enterprises that outsource DevOps entirely create permanent vendor dependency. Enterprises that build it entirely internally from scratch typically lose 12 to 18 months to avoidable mistakes.

The right partnership model builds internal capability during the engagement, not after it ends.

How do we manage DevOps across globally distributed engineering teams?

Distributed DevOps requires investment in asynchronous tooling and explicit process documentation. GitOps is particularly well-suited to distributed teams because infrastructure state is always declared in version-controlled repositories

– any team member in any timezone can see the current state of the system and understand how any change was made and by whom. On-call rotations, incident response runbooks, and escalation procedures need to be documented and systemized rather than held in individual engineers’ heads.

Async-first communication combined with clear process ownership prevents the coordination gaps that cause incidents in distributed environments.

What is the relationship between DevOps and platform engineering?

Platform engineering is the organizational evolution that high-maturity DevOps teams reach naturally, typically at the Medium-to-High DORA performance transition. Where DevOps asks “how do we build better delivery pipelines?”,

platform engineering asks “how do we build an internal developer platform that makes every product team faster by default, without them having to think about infrastructure?” Platform teams use frameworks like Backstage from Spotify to build internal developer portals with self-service infrastructure, golden path templates,

and service catalogs. Most enterprises should not target platform engineering on day one – it’s the right destination after foundational DevOps practices are mature and stable.

How do we measure ROI on a DevOps transformation investment?

Measure across four dimensions simultaneously. Delivery velocity – lead time for changes and deployment frequency directly correlate with revenue-generating feature output. Reliability – MTTR and change failure rate determine the operational cost of incidents.

Engineering efficiency – the ratio of planned to unplanned work determines how much of your payroll is producing forward progress versus fighting fires. Business outcomes – release-to-revenue cycle time and customer-facing uptime connect engineering performance to commercial results.

Organizations that track all four dimensions report 20 to 30% reductions in time-to-market and 40 to 60% reductions in production incident frequency within 12 months of disciplined implementation.