Key Takeaways

- AI diagnostic models match specialist accuracy for specific imaging tasks but only with curated, diverse training data.

- Predictive analytics reduces 30-day hospital readmissions by up to 20% when integrated with EHR workflows.

- Remote patient monitoring combined with machine learning detects deterioration 6-24 hours earlier than manual review.

- Mental health apps using NLP achieve measurable therapeutic outcomes in peer-reviewed trials, but efficacy depends on clinical supervision design.

- Security and compliance must be architecture decisions made at the start of development; retrofitting them is expensive and unreliable.

- Bitcot has shipped healthcare platforms, including telemedicine systems, patient portals, RPM dashboards, and EHR integrations for clients across the US.

Introduction

A radiologist reviewing 20,000 chest X-rays in a year is performing one of the highest-stakes pattern-recognition tasks in medicine. They are also doing it under time pressure, with fatigue as a constant variable. An AI model trained on millions of annotated scans does not get tired. It does not get distracted at hour nine of a shift. And in specific, well-defined imaging tasks such as detecting diabetic retinopathy, classifying skin lesions, and flagging pulmonary nodules, it is increasingly performing at a specialist level.

That is not a hypothesis. It is a finding from peer-reviewed research published in Nature Medicine, The Lancet Digital Health, and the New England Journal of Medicine. And it is only one thread in what is becoming a fundamental restructuring of how healthcare is delivered, managed, and experienced.

Artificial intelligence in healthcare is no longer the domain of research labs and well-funded university hospitals. It is appearing in primary care clinics, mental health apps used by millions of patients, remote monitoring platforms managing chronic disease at a population scale, and telemedicine systems that connect patients to clinicians in under two minutes. The question for healthcare organisations in 2026 is not whether to adopt AI; it is how to do it without compromising patient safety, violating privacy regulations, or building something that works in demos but fails in production.

This guide covers the real state of healthcare AI: what it can and cannot do, where the evidence is strongest, the implementation decisions that determine whether deployments succeed, and how Bitcot’s engineering team builds AI-powered healthcare software for clients building the next generation of patient care.

Sources: Grand View Research (2024), Google Health / Verily (Nature Medicine), NHS AI Lab readmission study (2023), NEJM Catalyst (2024)

AI in Medical Diagnosis: What the Evidence Actually Shows

Medical diagnosis is the most visible and most scrutinised application of AI in healthcare. The performance claims are genuine, but so are the limitations, and understanding both is essential for any organisation evaluating diagnostic AI.

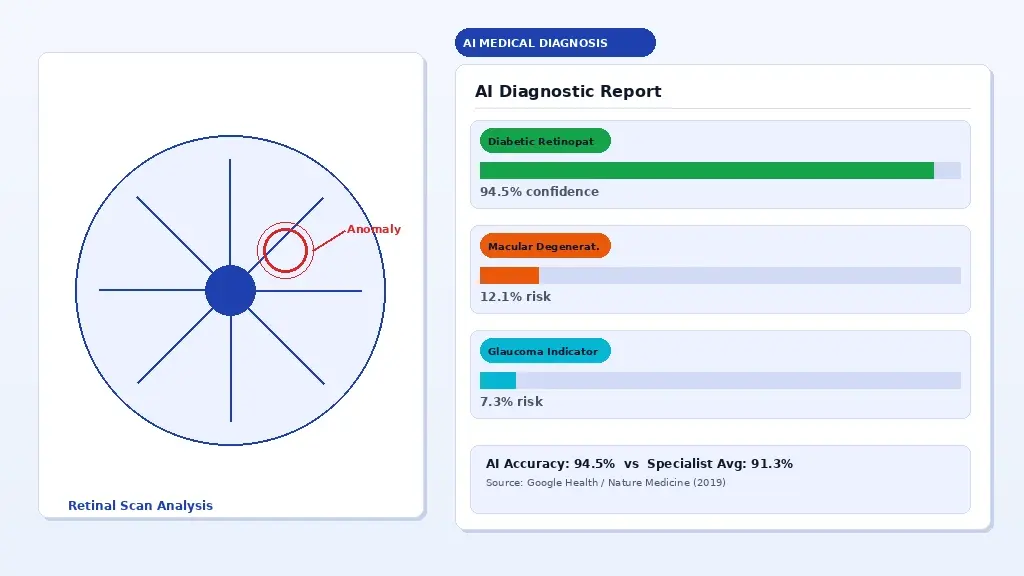

AI-assisted diagnostic imaging: machine learning models flagging anomalies in retinal scans for clinician review.

Where AI Diagnostic Models Are Performing at a Specialist Level

The strongest evidence for AI diagnostic accuracy sits in imaging-based specialities where the task can be precisely defined. A 2019 study published in Nature Medicine demonstrated that Google Health’s deep learning system detected diabetic retinopathy from retinal photographs with 94.5% sensitivity, compared to an average of 91.3% for ophthalmologists. A 2020 study in The Lancet Digital Health showed AI classifying skin lesions at a level comparable to 58 international dermatologists across 100,000 images.

Radiology has seen some of the most rapid clinical adoption. AI systems from vendors including Aidoc and Zebra Medical Vision are already integrated into radiology workflows at major health systems in the US and Europe, flagging critical findings such as intracranial haemorrhage, pulmonary embolism, and pneumothorax for immediate clinician review. These systems do not replace the radiologist. They prioritise the worklist so the most urgent cases are reviewed first.

Pathology is a growing frontier. PathAI and Paige.AI have developed models for prostate cancer and breast cancer detection from digital pathology slides. The FDA cleared Paige Prostate in 2021, the first AI product to receive FDA authorisation in computational pathology.

The Limitations That Every Healthcare AI Deployment Must Acknowledge

Accuracy figures from benchmark studies do not always translate cleanly to real-world deployment. Three problems appear repeatedly in post-deployment analyses.

First, training data distribution matters enormously. A model trained predominantly on images from high-resolution digital scanners may perform significantly worse on images from older equipment used in community health settings. A dermatology model trained on lighter skin tones will generalise poorly to darker skin tones, a well-documented failure mode highlighted in research by Adamson and Smith in JAMA Dermatology and in the broader algorithmic bias literature.

Second, AI models degrade when clinical context shifts. A pneumonia detection model validated on a typical patient population may behave differently during a respiratory disease surge, when prevalence and presentation patterns change.

Third, integration with clinical workflow is where most deployments fail or succeed. A model that achieves 97% accuracy in isolation but adds friction to the clinical workflow will either be ignored or used incorrectly. Deployment requires co-design with the clinicians who will actually use it.

Regulatory Landscape: FDA, CE Marking, and What “Cleared” Actually Means

Not all AI-powered healthcare software requires FDA clearance in the United States. The FDA distinguishes between clinical decision support (CDS) software that is intended to support, not replace, clinician decision-making and software that qualifies as a medical device. The FDA’s 2022 updated guidance on CDS software provides a framework, but the boundary cases require careful legal review.

In the EU, the Medical Device Regulation (MDR 2017/745) and the EU AI Act create overlapping compliance requirements for AI as a medical device. Any organisation selling diagnostic AI in Europe should expect both MDR conformity assessment and AI Act high-risk system obligations, depending on the application.

For organisations building diagnostic AI tools, the regulatory strategy should begin at the architecture stage, not after the model is trained. This is something Bitcot’s healthcare development team addresses in the initial scoping phase of every engagement.

Predictive Analytics in Healthcare: From Reactive to Proactive Care

The most expensive moment in healthcare is the preventable one. A patient readmitted within 30 days of discharge. A sepsis case identified six hours too late. A diabetic complication that develops over months while routine checks show nothing alarming. Predictive analytics is the application of machine learning to move healthcare from reactive to proactive: to identify which patients are at elevated risk before the acute event occurs.

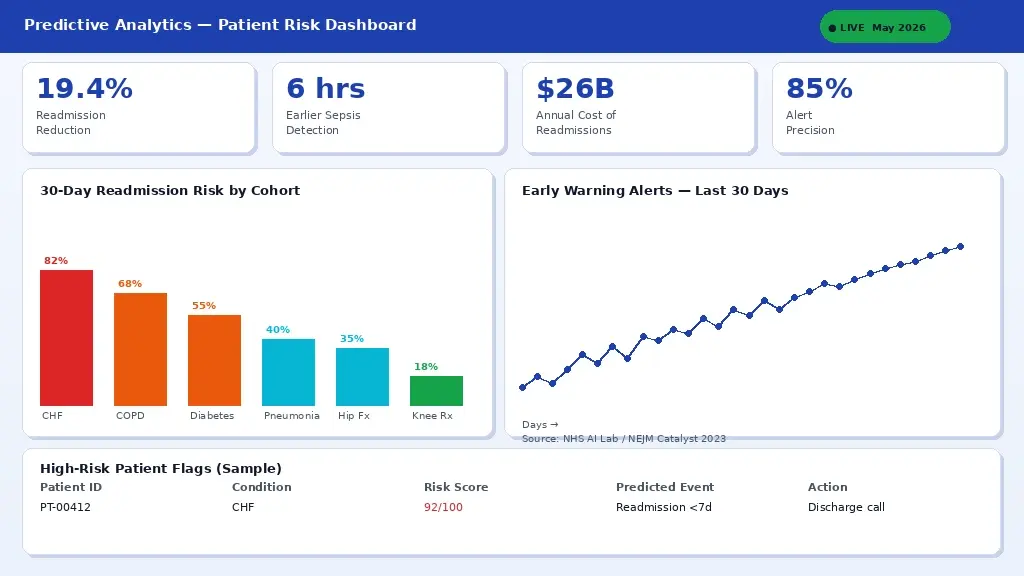

A predictive analytics dashboard surfacing early warning risk scores integrated into EHR workflows for clinical teams.

Hospital Readmission Prevention

Thirty-day hospital readmissions cost the US healthcare system approximately $26 billion annually, according to data from the Agency for Healthcare Research and Quality (AHRQ). CMS penalises hospitals financially for excess readmissions under the Hospital Readmissions Reduction Program (HRRP). Machine learning models trained on EHR data, including diagnosis codes, lab values, medication records, social determinants of health, and prior utilisation, can identify high-risk patients before discharge, enabling targeted intervention.

A 2023 NHS study published in NEJM Catalyst found that deploying a gradient boosting model for readmission risk stratification reduced 30-day readmissions by 19.4% when paired with a structured discharge intervention protocol. The model itself was not the intervention; it was what clinical staff did differently with the risk score in hand.

Sepsis Early Warning Systems

Sepsis kills approximately 270,000 patients annually in the United States (CDC, 2023). Every hour of delayed antibiotic treatment increases mortality risk by 7-10% according to findings from the Surviving Sepsis Campaign. Sepsis early warning systems using ML models applied to continuous vital sign data, lab results, and nursing notes have demonstrated the ability to flag sepsis risk 6-24 hours earlier than manual clinical recognition.

Epic’s Sepsis Prediction model, deployed at hundreds of health systems, has shown mixed real-world results depending on implementation, a reminder that the model is only one part of the system. Alert fatigue, workflow integration, and escalation protocols are equally critical.

Chronic Disease Management and Population Health

For conditions like type 2 diabetes, heart failure, and COPD, which account for the majority of healthcare expenditure in developed countries, predictive analytics enables population health management at a scale that is impossible with manual care coordination. Machine learning models can segment patient populations by risk level, predict which patients are likely to miss appointments, identify those whose medication adherence is declining based on pharmacy fill data, and surface outreach recommendations to care management teams.

This is where health data platforms become infrastructure. A predictive analytics capability is only as good as the quality, completeness, and freshness of the data feeding it. Organisations without a clean, integrated health data platform are building models on foundations that guarantee unreliable output.

Telemedicine and AI: Building the Virtual Care Platform That Actually Works

Telemedicine adoption grew by approximately 3,800% in the first three months of the COVID-19 pandemic, according to McKinsey & Company analysis. After the surge subsided, utilisation stabilised at a level roughly 38 times higher than pre-pandemic, a permanent reset in how patients expect to access care. AI integration into telemedicine platforms is what separates a video call scheduling tool from a genuinely intelligent virtual care system.

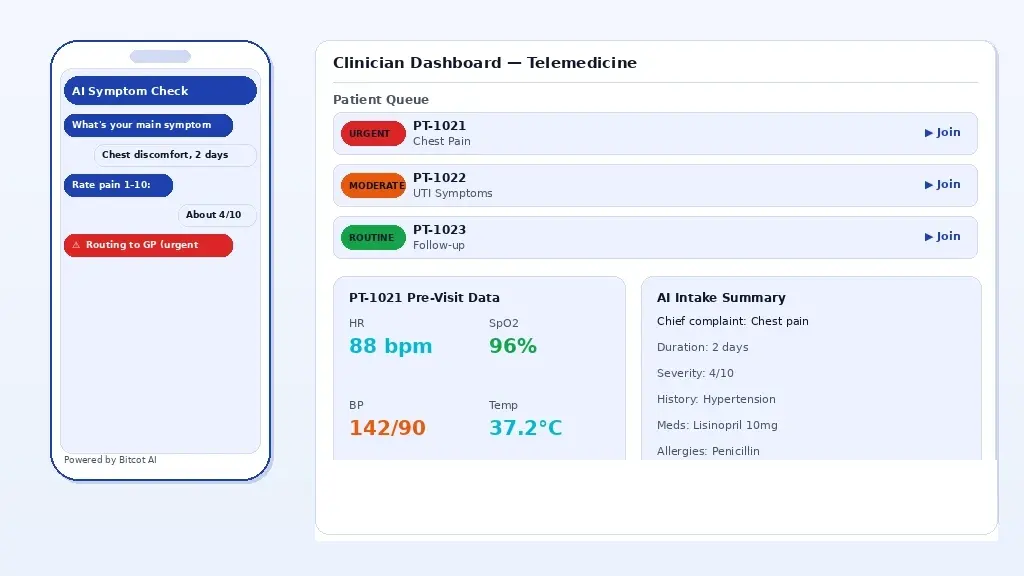

An AI-enhanced telemedicine app: automated pre-visit intake, symptom triage, and intelligent routing to the appropriate care level.

AI-Powered Symptom Triage and Intelligent Routing

The single biggest friction point in telemedicine is matching patient need to the right care resource. A patient presenting with chest pain needs an emergency referral, not a GP call back in three hours. A patient with a routine UTI does not need urgent care. AI-powered symptom checkers built on validated clinical decision trees and natural language processing can automate the triage layer, collecting structured symptom data before the visit and routing patients to the appropriate level of care.

Babylon Health, Ada Health, and Buoy Health are among the most studied symptom checker platforms. Independent evaluations published in The BMJ show significant variation in performance across these tools, reinforcing that symptom AI is not commodity software; clinical validation is a prerequisite for deployment.

Automated Pre-Visit Intake and Documentation

One of the highest-impact, lowest-risk applications of AI in telemedicine is automating the administrative burden around consultation. Natural language processing applied to pre-visit questionnaire responses can pre-populate structured clinical notes, surface relevant medical history from the EHR, and flag potential drug interactions or allergy conflicts before the clinician opens the consultation. Ambient clinical documentation tools such as Nuance DAX and Suki use real-time speech recognition and NLP to draft SOAP notes from the conversation itself, reducing documentation time by 50-70% in pilot studies.

What Bitcot Builds for Telemedicine Clients

Bitcot’s telemedicine development practice covers the full stack: secure video infrastructure built on WebRTC, patient-facing mobile applications on iOS and Android, clinician dashboards with EHR integration, e-prescribing workflows, asynchronous messaging, and AI-assisted documentation features. Every platform we ship includes end-to-end encryption, role-based access control, and audit logging for all third-party integrations that touch patient data.

Remote Patient Monitoring: AI at the Edge of Care

Remote patient monitoring (RPM) is the practice of collecting patient health data outside traditional clinical settings (at home, in assisted living, during rehabilitation) and transmitting it to clinical teams for review. When AI is added to the layer that processes that data, the capability shifts from data collection to intelligent surveillance.

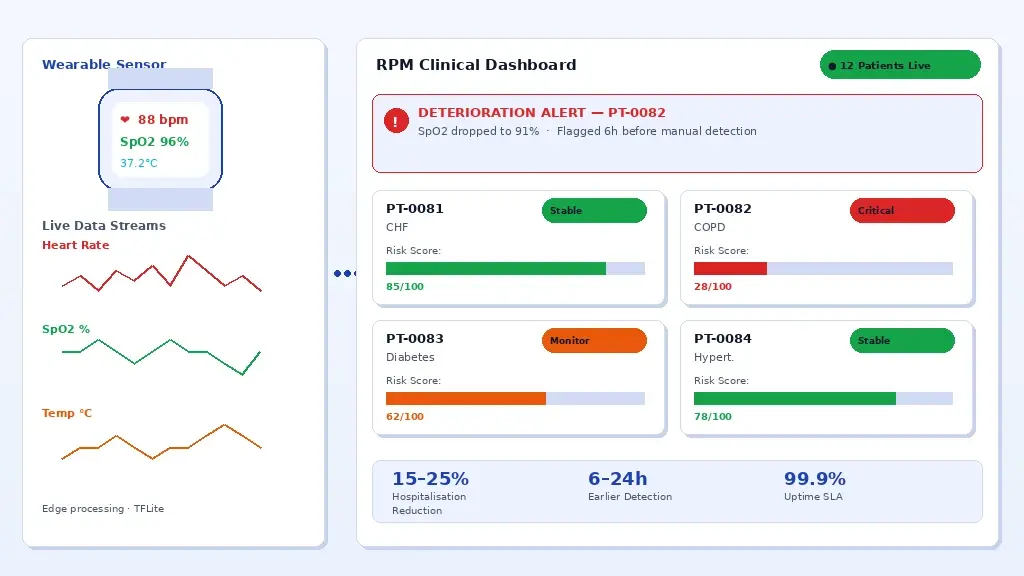

AI-powered RPM platform: wearable sensor data processed in real-time with machine learning to flag deterioration alerts to clinical teams.

From Data Collection to Intelligent Monitoring

A pulse oximeter sending readings every hour generates 24 data points per day. A continuous glucose monitor generates a data point every five minutes, 288 per day. A cardiac monitor with 256 Hz sampling generates over 22 million data points per day. No clinical team can manually review data at that density. Machine learning models applied to RPM data streams can distinguish noise from signal, identify trends that precede clinical deterioration, and generate alerts only when the data pattern warrants escalation.
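The arithmetic behind those figures is easy to reproduce. The sketch below is illustrative only: the helper name `samples_per_day` is ours, and it assumes each device reports continuously at a fixed interval for a full 24 hours.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def samples_per_day(interval_seconds: float) -> int:
    """Readings produced in 24 hours by a device reporting once per interval."""
    return round(SECONDS_PER_DAY / interval_seconds)


pulse_oximeter = samples_per_day(60 * 60)   # one reading per hour
glucose_monitor = samples_per_day(5 * 60)   # one reading every five minutes
cardiac_monitor = samples_per_day(1 / 256)  # 256 Hz sampling

print(pulse_oximeter, glucose_monitor, cardiac_monitor)
# prints: 24 288 22118400
```

The jump from hundreds to tens of millions of points per patient per day is what rules out manual review and makes automated filtering a requirement rather than a convenience.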

Studies published in JMIR mHealth and uHealth have demonstrated that AI-assisted RPM platforms reduce the rate of preventable hospitalisation in heart failure patients by 15-25% compared to standard remote monitoring without intelligent alert logic. The mechanism is earlier detection and faster clinical response, not a different clinical protocol.

Architecture Decisions That Determine RPM Platform Reliability

RPM platforms that work in controlled pilots frequently fail at scale because the architecture was not designed for the data volumes, connectivity constraints, and alert reliability requirements of real-world deployment. Several decisions matter most.

Edge processing reduces latency and network dependency. For patients in rural areas with unreliable connectivity, a monitoring device that requires a constant internet connection to process data is not suitable. Models that run inference on the device itself, a growing capability enabled by efficient ML frameworks like TensorFlow Lite and Apple’s Core ML, degrade gracefully when connectivity is intermittent and continue to provide meaningful functionality.

Alert fatigue is the operational failure mode of poorly calibrated RPM AI. A system that pages clinical staff twenty times a day with non-urgent alerts will be ignored within weeks. Alert threshold design and model calibration are as important as model accuracy and require input from the clinical team that will act on the alerts.
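One simple way to reduce non-urgent pages is to require that a risk score stay elevated for several consecutive readings before an alert fires. The sketch below illustrates that idea under stated assumptions: the function name, the 0.8 threshold, and the three-reading persistence window are hypothetical placeholders, not clinically validated values, and any real thresholds would need to be set with the clinical team.

```python
from typing import Iterable, List


def persistent_alerts(
    scores: Iterable[float],
    threshold: float = 0.8,      # hypothetical risk-score cut-off
    min_consecutive: int = 3,    # hypothetical persistence window
) -> List[int]:
    """Return the indices at which an alert fires.

    An alert fires once, at the reading where the score has been at or
    above the threshold for min_consecutive readings in a row. It cannot
    fire again until the score has dropped below the threshold.
    """
    alerts: List[int] = []
    run = 0
    for index, score in enumerate(scores):
        if score >= threshold:
            run += 1
            if run == min_consecutive:
                alerts.append(index)
        else:
            run = 0
    return alerts


readings = [0.2, 0.9, 0.3, 0.85, 0.9, 0.95, 0.97, 0.4, 0.9]
print(persistent_alerts(readings))
# prints: [5]
```

A naive rule that pages on every reading at or above 0.8 would fire six times on the same data. The persistence rule fires once, at the cost of a short delay, which is exactly the trade-off that needs clinical input.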

Data provenance and audit trails are not optional in healthcare. Under HIPAA, covered entities are required to maintain tamper-evident logs of every data access and disclosure event, and any RPM platform handling patient data operates within that regulatory environment. This is also a prerequisite for reimbursement claims under CMS RPM billing codes.
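One widely used technique for making a log tamper-evident is hash chaining, where each entry stores a hash of its own content combined with the hash of the previous entry. The sketch below is a minimal illustration using Python's standard library; the function names, field names, and sample actors are hypothetical, and a hash chain on its own does not satisfy any specific regulatory requirement.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(event: Dict[str, str], prev_hash: str) -> str:
    """SHA-256 over the canonical JSON of the event plus the previous hash."""
    payload = json.dumps(event, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(log: List[dict], event: Dict[str, str]) -> None:
    """Append an access event, linking it to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append(
        {"event": event, "prev_hash": prev_hash, "hash": _entry_hash(event, prev_hash)}
    )


def verify(log: List[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: List[dict] = []
append_event(audit_log, {"actor": "nurse-17", "action": "read", "record": "patient-001"})
append_event(audit_log, {"actor": "dr-04", "action": "update", "record": "patient-001"})
print(verify(audit_log))  # prints: True

audit_log[0]["event"]["action"] = "delete"  # simulate after-the-fact tampering
print(verify(audit_log))  # prints: False
```

In production the chain head would typically be anchored somewhere the application cannot rewrite, such as write-once storage, so that an attacker cannot simply recompute the whole chain.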

Mental Health AI: What the Evidence Shows and Where the Risks Lie

Mental health is the healthcare domain where AI adoption is fastest, the evidence base is most contested, and the ethical stakes are highest. Over 10,000 mental health apps exist in the Apple App Store and Google Play Store. The majority have no published clinical evidence. A small number have been tested in randomised controlled trials. The gap between marketing claims and clinical evidence is larger in mental health technology than in almost any other area of healthcare AI.

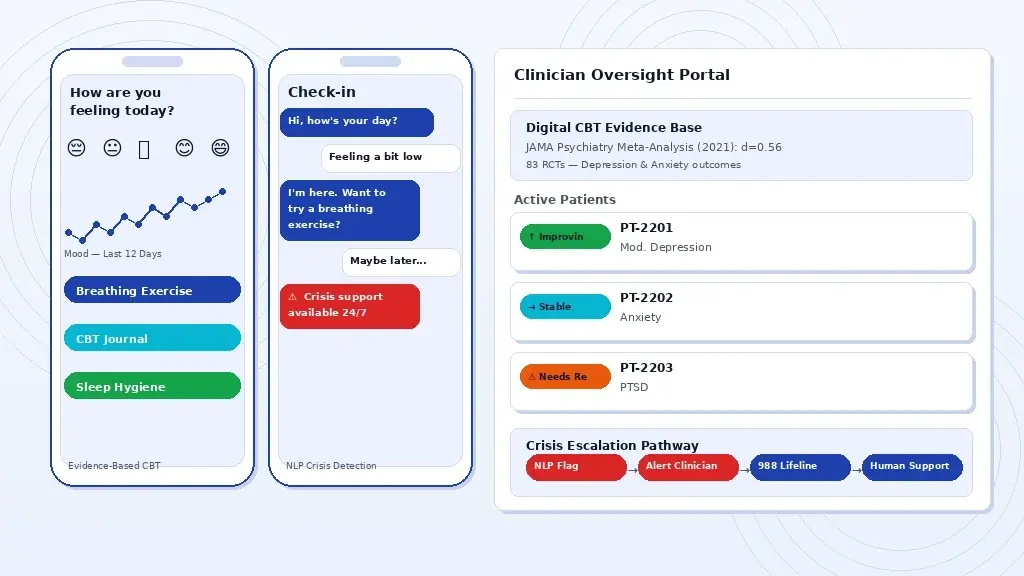

A clinical-grade mental health app: AI-driven CBT modules, mood analytics, and crisis escalation pathways integrated with clinician oversight.

What the Clinical Evidence Actually Supports

The strongest clinical evidence for digital mental health tools comes from Cognitive Behavioural Therapy (CBT) applications. A 2021 meta-analysis published in JAMA Psychiatry reviewed 83 randomised controlled trials of digital CBT interventions and found a pooled effect size of d=0.56 for depression symptoms, a clinically meaningful result comparable to face-to-face CBT for mild to moderate presentations. The evidence is weaker for anxiety, PTSD, and eating disorders, and largely absent for psychosis and bipolar disorder in digital-only formats.

NLP-powered conversational agents such as Woebot and Wysa have been tested in several peer-reviewed trials. A 2017 study in the Journal of Medical Internet Research found Woebot significantly reduced symptoms of depression and anxiety over two weeks in college students. These results are encouraging but should be contextualised: two-week trials with student populations do not generalise to clinical populations with severe mental illness.

The Safety Architecture Every Mental Health App Requires

A mental health app that is not designed to handle a user in crisis is not a mental health app; it is a liability. Every clinical-grade mental health application must include a crisis detection layer, clear escalation pathways to human support, and integration with crisis resources, including the 988 Suicide and Crisis Lifeline (US) and equivalent services in other jurisdictions.

NLP-based crisis detection is an active research area, but current models have false negative rates that make them unsuitable as a sole safety mechanism. The architecture should treat NLP crisis signals as escalation triggers, prompting human review, not as a replacement for human clinical judgment. Safe messaging guidelines developed by the Suicide Prevention Resource Center and the American Foundation for Suicide Prevention should be embedded in content architecture from day one.

How Bitcot Approaches Mental Health App Development

When Bitcot builds mental health applications for clients, our approach starts with clinical advisory input, not feature lists. We work with licensed clinicians to define the therapeutic scope, evidence base, and safety architecture before a line of code is written. Every application includes: encryption at rest and in transit, multi-factor authentication, session data controls, clinician oversight dashboards where the platform involves supervised care delivery, and crisis escalation pathways aligned with platform content policies and regulatory requirements in the target market.

AI in Clinic Management: Reducing Administrative Burden Without Reducing Care Quality

Administrative costs account for approximately 34.2% of total healthcare expenditure in the United States, according to a study published in the New England Journal of Medicine. Billing, scheduling, prior authorisations, documentation, referral management, and compliance reporting consume clinical staff time that could be directed at patients. AI applied to administrative workflows does not solve the clinical problems in healthcare, but it removes the non-clinical overhead that is slowing down the people trying to solve them.

Clinic management software with AI scheduling optimisation, automated billing alerts, and real-time patient flow analytics.

Intelligent Appointment Scheduling and Demand Forecasting

Appointment no-shows cost the US healthcare system an estimated $150 billion per year (Annals of Family Medicine). Machine learning models trained on historical appointment data, patient demographics, appointment type, and communication engagement can predict individual no-show probability with reasonable accuracy, enabling proactive overbooking decisions, targeted reminder campaigns, and waitlist management. Studies from academic medical centres, including Stanford Medicine and UCSF, have reported 20-35% reductions in no-show rates following AI-assisted scheduling interventions.
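To make the link between predicted no-show probability and overbooking concrete, the sketch below sums per-patient attendance probabilities for a clinic session and estimates how many slots are likely to go unused. It is a simplified illustration: the function names and the sample probabilities are hypothetical, and it assumes the model's probabilities are well calibrated and that patients attend independently.

```python
import math
from typing import Sequence


def expected_attendance(no_show_probs: Sequence[float]) -> float:
    """Expected number of patients who turn up, given per-patient no-show risk."""
    return sum(1.0 - p for p in no_show_probs)


def likely_spare_slots(capacity: int, no_show_probs: Sequence[float]) -> int:
    """Whole slots expected to go unused (never negative)."""
    spare = capacity - expected_attendance(no_show_probs)
    return max(0, math.floor(spare))


# Five booked patients with hypothetical model-predicted no-show probabilities.
session = [0.1, 0.3, 0.5, 0.2, 0.4]
print(likely_spare_slots(capacity=5, no_show_probs=session))
# prints: 1
```

Rounding down keeps the estimate conservative. A real scheduler would also weigh the cost of an overrun clinic against the cost of an empty slot before adding a booking.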

Revenue Cycle Automation and Claim Accuracy

Medical billing errors are estimated to cause $125 billion in claim denials annually in the US (Change Healthcare). Natural language processing applied to clinical documentation can assist with accurate ICD-10 and CPT coding, flag incomplete documentation before claim submission, and identify coding patterns that generate unnecessary denials. AI-assisted prior authorisation tools are increasingly available from vendors, including Olive and Cohere Health, reducing the average PA turnaround from 72 hours to under 4 in some implementations.

Patient Communication Automation

Appointment reminders, post-visit follow-up surveys, medication adherence nudges, preventive care outreach, and insurance benefit notifications are all communication workflows that can be automated using AI-driven patient engagement platforms without reducing the quality of the patient relationship. The key design principle is appropriate channel selection: SMS for time-sensitive reminders, email for detailed post-visit information, and portal messages for clinical follow-up requiring documentation.

What It Takes to Build Production-Grade Healthcare AI Software

There is a substantial gap between a healthcare AI demo and a healthcare AI system that runs reliably in a clinical environment, stays within applicable regulations, and measurably improves patient or operational outcomes. Having built healthcare software across telemedicine, RPM, EHR integration, patient portals, mental health, and diagnostic support, Bitcot’s team has identified the decisions that separate systems that work from systems that do not.

Start With the Clinical Workflow, Not the Technology

The most common failure mode in healthcare AI development is beginning with a technology capability and searching for a clinical application. The correct approach is the reverse: begin with the clinical problem, map the existing workflow in detail, identify where AI adds signal rather than noise, and build the minimum intervention that actually changes the clinical outcome. This requires clinical co-design, not just clinical approval at the end of development.

Curate Your Training Data as Carefully as Your Model Architecture

In healthcare AI, data quality is the dominant variable. A well-architected model trained on biased, incomplete, or incorrectly labelled data will produce unreliable output regardless of the sophistication of the architecture. Data curation in healthcare requires clinical annotation expertise, diversity auditing across age, sex, ethnicity, and socioeconomic status, and longitudinal validation that checks model performance as the real-world data distribution shifts over time.
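A diversity audit can start with something as simple as reporting sensitivity separately for each demographic subgroup instead of as one pooled number. The sketch below shows the idea with made-up records; the function name, the group labels, and the data are hypothetical, and a real audit would also report confidence intervals and other metrics such as specificity.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Record = Tuple[str, int, int]  # (subgroup, true label, predicted label); labels are 0 or 1


def sensitivity_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Sensitivity, TP / (TP + FN), per subgroup.

    Subgroups with no true-positive cases in the data are omitted,
    because sensitivity is undefined for them.
    """
    true_pos: Dict[str, int] = defaultdict(int)
    false_neg: Dict[str, int] = defaultdict(int)
    for group, truth, predicted in records:
        if truth == 1:
            if predicted == 1:
                true_pos[group] += 1
            else:
                false_neg[group] += 1
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


# Made-up validation records for two hypothetical subgroups.
validation = [
    ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 0),
    ("group_b", 1, 1), ("group_b", 1, 0), ("group_b", 1, 0), ("group_b", 1, 0),
    ("group_a", 0, 0), ("group_b", 0, 0),
]
result = sensitivity_by_group(validation)
print(result["group_a"], result["group_b"])
# prints: 0.75 0.25
```

Pooled across both groups, the same model detects four of eight positive cases, a figure that hides the fact that it misses most cases in one subgroup.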

Design Explainability In, Not Retrospectively

Clinicians cannot act responsibly on an AI recommendation that they cannot explain to a patient or defend in documentation. Interpretability tools, including SHAP (SHapley Additive exPlanations), LIME, and attention visualisation for image-based models, should be part of the model development pipeline, not a post-hoc analysis. FDA guidance increasingly references explainability as an expectation for clinical AI, and the EU AI Act mandates transparency obligations for high-risk AI systems in healthcare.

Plan for Model Drift Before Deployment

Healthcare data distributions change. Patient populations shift. Clinical practices evolve. New medications are introduced. A model that performs accurately at deployment may degrade silently over months without monitoring. A production healthcare AI system requires a model monitoring pipeline that tracks performance metrics against ground truth labels, triggers review when drift exceeds defined thresholds, and provides a documented retraining and revalidation process.
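Ground truth labels often arrive weeks after a prediction, so monitoring pipelines commonly watch the input data as well. One such input-drift measure is the Population Stability Index (PSI), sketched below as a complement to label-based performance tracking, not a substitute for it. The function name, the bin proportions, and the 0.2 review threshold are illustrative assumptions; the threshold is a common rule of thumb, not a clinical or regulatory standard.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    eps: float = 1e-6,
) -> float:
    """PSI between two binned distributions, each given as per-bin proportions.

    expected: share of records per bin at validation time.
    actual:   share of records per bin in the current production window.
    eps guards against log(0) when a bin is empty.
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


REVIEW_THRESHOLD = 0.2  # assumed rule-of-thumb trigger for human review

baseline = [0.25, 0.25, 0.25, 0.25]  # e.g. patient age quartiles at validation
current = [0.10, 0.20, 0.30, 0.40]   # hypothetical shift towards older patients

psi = population_stability_index(baseline, current)
print(f"PSI = {psi:.3f}, review needed: {psi > REVIEW_THRESHOLD}")
```

A check like this would run per feature on a schedule, with threshold breaches routed into the documented review and revalidation process rather than triggering automatic retraining.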

Conclusion

AI in healthcare is no longer a future-state conversation. It is running in radiology departments, mental health apps, remote monitoring platforms, and clinic management systems today, and the gap between organisations that have built it well and those that have not is already visible in patient outcomes and operational costs.

The evidence is clear on where AI delivers and where deployments fail. Diagnostic imaging at specialist accuracy, readmission prediction, and remote monitoring that catches deterioration hours earlier are all achievable, but only when training data is curated, clinical workflows are co-designed, and regulatory strategy is treated as an architecture decision from day one, not a final-step checkbox.

Building production-grade healthcare AI is a workflow problem, a data quality problem, and a clinical trust problem, solved in that order. Bitcot’s healthcare engineering team works across that entire journey, whether you are building a telemedicine platform, integrating predictive analytics into an EHR workflow, or launching a remote patient monitoring product. Every engagement starts with the clinical problem, not the technology stack. Identify where manual effort is highest or where data is going unreviewed in your operations; that is where AI delivers the clearest return. Bring that problem to the Bitcot team, and we will scope what a production-ready solution looks like for your specific use case.

Frequently Asked Questions (FAQs)

What is healthcare AI?

Healthcare AI refers to the use of machine learning algorithms, natural language processing, and predictive analytics to assist clinicians in diagnosing diseases, managing patient data, personalising treatment plans, and automating administrative workflows.

How accurate is AI in medical diagnosis?

Studies published in journals such as Nature Medicine show AI models matching or exceeding specialist-level accuracy in specific imaging tasks such as diabetic retinopathy detection and skin cancer classification, though performance varies significantly by condition and dataset quality.

What does Bitcot build for healthcare clients?

Bitcot builds healthcare applications, including telemedicine platforms, patient portals, remote patient monitoring systems, mental health apps, EHR/EMR integrations, and AI-powered diagnostic tools for clinics, hospitals, and digital health startups.

Is AI secure for healthcare applications?

AI systems in healthcare must be designed with strong security and privacy protections, including end-to-end encryption, role-based access controls, audit logging, and secure data management practices. Healthcare organisations should ensure AI platforms align with internal governance policies and industry security standards before deployment.