Key Takeaways:

- Nearly two-thirds of enterprises are still stuck in the AI experimentation or piloting phase – the bottleneck is infrastructure, not the technology

- The highest-ROI automation use cases are already deployed across SaaS, FinTech, healthcare, and manufacturing

- Data readiness and architecture validation must come before any AI deployment decision

- Hyperautomation and agentic AI are no longer emerging – they are active competitive advantages in 2026

- The cost of delay is not neutral; every quarter without AI-augmented operations widens the performance gap against competitors who are already running these systems

Your competitors are not waiting for AI to mature.

They are already using it to cut operational costs by up to 30%, eliminate manual bottlenecks, and accelerate decision-making at a scale your current infrastructure was never designed to support.

That gap is widening every quarter.

The question is no longer whether AI automation belongs in your organization. It is whether your architecture, data pipelines, and workflows are actually ready to absorb it – or whether you are about to invest $200K in a system built on a foundation that cannot hold.

This guide is written for US-based technical leaders and enterprise decision-makers who need more than a list of buzzwords. You need a clear picture of where AI automation creates measurable ROI, what real implementation looks like, and how to avoid the most expensive mistake in enterprise AI: deploying before you are infrastructure-ready.

Why Most Enterprise AI Automation Initiatives Stall in Year One

The hype cycle around AI automation has created a dangerous pattern.

Leadership approves budget. A vendor pitches a shiny solution. Integration begins. Then reality hits – fragmented data sources, legacy APIs that cannot communicate, governance gaps, and no clear ownership of the AI pipeline.

According to McKinsey’s 2025 State of AI report, nearly two-thirds of organizations are still in the experimentation or piloting phase, and only 39% report any measurable enterprise-level business impact from AI. The culprit is rarely the AI model itself. It is the infrastructure surrounding it.

“More than any transformation before it, this generation of AI is radically changing every layer of the tech stack, and we are changing with it.”

– Satya Nadella, CEO, Microsoft

Most organizations jump straight to deployment when they should be investing in AI workflow automation readiness first – the unglamorous but critical work of mapping processes, unifying data, and establishing governance before a single model goes live. Organizations that treat this as a digital transformation initiative rather than a standalone IT project consistently outperform those that do not.

The real blockers look like this:

- Data living in siloed ERP, CRM, and legacy systems with no unified schema – the absence of a data lakehouse architecture is the most common root cause

- Engineering teams stretched thin between product delivery and maintenance

- No ML Ops foundation to monitor, retrain, or govern deployed models

- Security and compliance frameworks that were not designed with AI pipelines in mind

- Vendor solutions that solve one narrow problem and create five new integration dependencies

- Hyperautomation strategies initiated without the process maturity to support them

If any of these feel familiar, you are not behind – you are simply being honest about where most enterprises actually are in 2026.

The good news is that AI automation does not require perfection. It requires sequencing. The practical starting point is identifying where your data is cleanest, your processes are most documented, and your ROI potential is highest – then building outward from there.

The organizations winning with AI are not the ones who deployed the most tools. They are the ones who identified the right use cases for their current data maturity level, built modular architectures, and created feedback loops that improve over time.

Here is exactly what that looks like in practice.

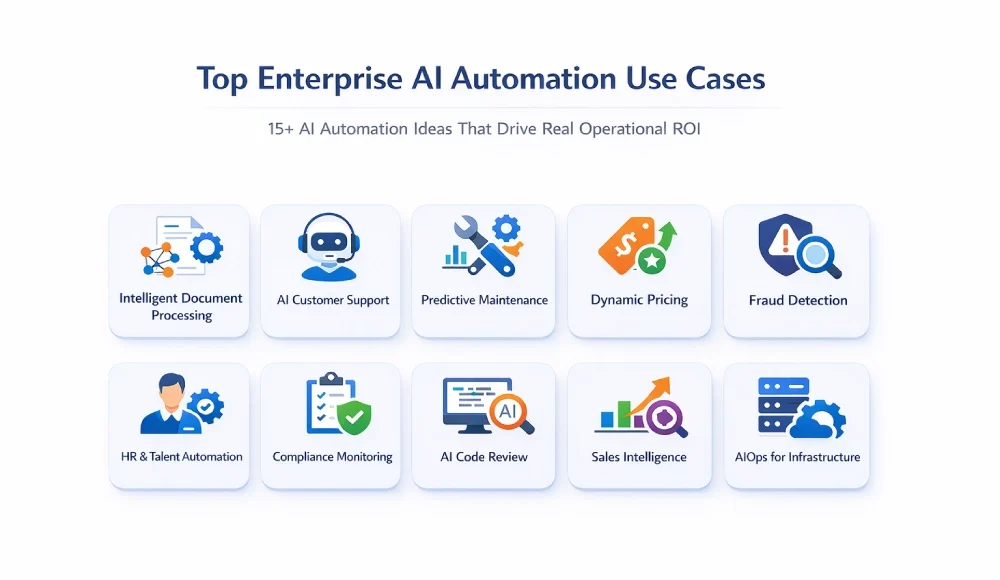

15+ AI Automation Ideas That Drive Real Operational ROI

These are not hypothetical. Each use case below has been implemented across enterprise environments in SaaS, healthcare, FinTech, eCommerce, and manufacturing. Complexity and cost of implementation vary – and that context matters.

Before evaluating any of these, audit which use cases align with your current data maturity – the ones that do will deliver returns fastest. You can explore how these translate to real production environments across Bitcot’s AI development services capabilities.

1. Intelligent Document Processing and Data Extraction

Manual document handling is one of the highest-cost, lowest-value activities in any operation-heavy organization.

AI-powered document processing uses OCR combined with large language models to extract, classify, and route data from invoices, contracts, intake forms, compliance documents, and insurance claims – with accuracy rates now exceeding 95% on structured documents. This is intelligent process automation (IPA) at its most immediately deployable: high-volume, structured, and directly measurable.
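As a rough sketch of the extract, classify, and route flow described above, the snippet below uses regular expressions as a stand-in for the OCR and LLM extraction step. The field names, the patterns, and the `route_document` helper are illustrative assumptions, not the API of any specific product.

```python
import re
from dataclasses import dataclass, field

# Hypothetical field patterns. A production pipeline would use an OCR engine
# plus an LLM or trained extractor instead of regular expressions.
FIELD_PATTERNS = {
    "invoice_number": re.compile(r"Invoice\s*(?:No\.?|#)\s*:?\s*([A-Z0-9-]+)", re.I),
    "total": re.compile(r"Total\s*:?\s*\$?([\d,]+\.\d{2})", re.I),
    "due_date": re.compile(r"Due\s*Date\s*:?\s*(\d{4}-\d{2}-\d{2})", re.I),
}


@dataclass
class Extraction:
    fields: dict = field(default_factory=dict)
    missing: list = field(default_factory=list)


def extract_fields(text: str) -> Extraction:
    """Pull each known field out of the document text, recording any gaps."""
    result = Extraction()
    for name, pattern in FIELD_PATTERNS.items():
        match = pattern.search(text)
        if match:
            result.fields[name] = match.group(1)
        else:
            result.missing.append(name)
    return result


def route_document(extraction: Extraction) -> str:
    """Send complete documents straight through; everything else to a person."""
    return "auto_process" if not extraction.missing else "human_review"


sample = "Invoice No: INV-1042\nDue Date: 2026-03-31\nTotal: $1,250.00"
result = extract_fields(sample)
```

Routing incomplete extractions to human review is what keeps the audit trail trustworthy: the automation handles the clean majority and people handle the exceptions.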

Real use case: A FinTech lending platform reduced loan processing time from 4 days to under 6 hours by automating document intake, identity verification cross-referencing, and underwriting data extraction using a custom LLM pipeline integrated into their Salesforce workflow.

The ROI is direct: fewer FTEs processing paper, faster cycle times, lower error rates, and audit-ready data trails.

2. AI-Powered Customer Support and Triage

This is not about replacing your support team with a chatbot. It is about giving your team a force multiplier.

Modern AI triage systems classify incoming tickets by urgency, route them to the correct team, suggest resolution paths based on historical data, and handle Tier 1 queries fully autonomously – without a human in the loop. The most advanced implementations use agentic AI architectures where autonomous agents handle multi-step resolution workflows, escalate based on sentiment signals, and learn continuously from resolved cases.

These systems increasingly rely on multi-agent orchestration – where specialized agents hand off tasks between each other in real time – rather than a single monolithic model trying to do everything. For a deeper technical breakdown of how LLM agents work in enterprise environments, the architecture considerations are worth reviewing before selecting an implementation approach.

Enterprise-grade implementations connect to your CRM, knowledge base, and product telemetry to give human support agents context-rich summaries before they ever read a ticket.
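The classify, route, and auto-resolve steps described above can be sketched as follows. Keyword sets stand in for a trained classifier here, and the team names, terms, and the `triage_ticket` function are all hypothetical.

```python
import re
from dataclasses import dataclass

# Hypothetical keyword rules standing in for a trained classifier.
URGENT_TERMS = {"outage", "down", "breach"}
TEAM_TERMS = {
    "billing": {"invoice", "refund", "charge"},
    "technical": {"error", "bug", "outage", "down", "api"},
}


@dataclass
class Triage:
    urgency: str
    team: str
    auto_resolve: bool


def triage_ticket(text: str) -> Triage:
    words = set(re.findall(r"[a-z]+", text.lower()))
    urgency = "high" if words & URGENT_TERMS else "normal"
    # First team whose terms appear in the ticket wins; default to "general".
    team = next((name for name, terms in TEAM_TERMS.items() if words & terms), "general")
    # Only non-urgent general queries are answered without a human in the loop.
    return Triage(urgency, team, urgency == "normal" and team == "general")
```

For example, `triage_ticket("Our API is down and customers see an error")` is marked high urgency and routed to the technical team, while a password question falls through to autonomous Tier 1 handling.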

Real use case: A B2B SaaS platform serving mid-market HR teams deployed an AI triage layer that resolved 42% of support tickets without human escalation. Average handle time on escalated tickets dropped by 31% because human agents received pre-populated resolution suggestions.

3. Predictive Maintenance for Manufacturing and Infrastructure

Reactive maintenance is budget destruction at scale. And most organizations do not realize how much it is costing them until they model it.

AI models trained on IoT sensor data, equipment logs, and historical failure patterns can predict component failure windows with enough lead time to schedule maintenance before production disruption occurs. Edge AI plays a critical role here – processing sensor data directly on-device at the factory floor level, reducing latency and enabling real-time decisions without relying on cloud round-trips.
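One simple building block behind this kind of early warning is a trailing-window anomaly check on sensor readings. The sketch below is a minimal illustration, assuming a rolling z-score is an acceptable stand-in for a trained failure model; the window size, threshold, and sample vibration values are made up for the example.

```python
from statistics import mean, stdev


def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indexes whose reading deviates from the trailing window mean
    by more than `threshold` standard deviations."""
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # A perfectly flat window has sigma == 0 and is skipped.
        if sigma > 0 and abs(readings[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Illustrative vibration readings with one spike at index 6.
vibration = [0.50, 0.52, 0.49, 0.51, 0.50, 0.51, 0.95, 0.50]
anomalies = flag_anomalies(vibration)
```

A check this small is cheap enough to run on an edge device next to the sensor, which is the latency argument made above.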

Organizations operating at Industry 4.0 maturity are increasingly pairing this with digital twin technology – creating virtual replicas of physical assets to simulate failure scenarios and test maintenance strategies without touching production systems.

Real use case: A mid-sized manufacturer running Industry 4.0 upgrades reduced unplanned downtime by 27% in the first year after deploying predictive maintenance AI across three production lines. The model was retrained quarterly as new sensor data accumulated, improving accuracy over time.

For any operation where downtime costs exceed $50K per hour, this use case alone justifies a significant AI investment. The same infrastructure thinking that powers predictive maintenance also unlocks revenue-side automation – which brings us to the next use case.

4. Dynamic Pricing and Revenue Optimization

Static pricing is a revenue ceiling.

AI-driven dynamic pricing models analyze demand signals, competitor pricing, inventory levels, customer segment behavior, and seasonality in real time – then adjust pricing to maximize margin without sacrificing conversion.
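To make the mechanics concrete, here is a deliberately simple sketch of a bounded price adjustment driven by two of the signals above, demand and inventory. The linear weights (0.2 and 0.1) and the floor and ceiling are arbitrary assumptions; a real engine would learn these from data and include the other signals.

```python
def adjust_price(base_price, demand_ratio, stock_ratio, floor=0.8, ceiling=1.3):
    """Scale a base price by demand and inventory signals.

    demand_ratio: current demand / expected demand
    stock_ratio:  current inventory / target inventory
    """
    # Raise price when demand runs hot, lower it when stock is piling up.
    multiplier = 1.0 + 0.2 * (demand_ratio - 1.0) - 0.1 * (stock_ratio - 1.0)
    # Guardrails keep the engine from making extreme moves.
    multiplier = max(floor, min(ceiling, multiplier))
    return round(base_price * multiplier, 2)
```

The floor and ceiling matter as much as the model: they are the guardrails that protect conversion while the engine pursues margin.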

This is not exclusive to eCommerce. SaaS platforms use it for seat-based pricing optimization. Marketplaces use it to balance supply-demand economics. Healthcare networks use it for resource allocation pricing.

Real use case: A DTC eCommerce brand running headless commerce on a composable stack deployed a dynamic pricing engine that increased gross margin by 18% in Q1 without a single additional marketing dollar spent.

5. AI-Augmented Fraud Detection and Risk Scoring

Traditional rules-based fraud detection is brittle. It creates false positives that frustrate legitimate customers and misses novel fraud patterns that fall outside predefined rules.

Machine learning fraud models learn continuously from transaction data, flagging anomalies in real time across millions of signals – device fingerprints, behavioral biometrics, velocity patterns, and network graphs.
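Velocity patterns are the easiest of these signals to illustrate. The sketch below counts transactions per card inside a sliding time window; the `VelocityCheck` class, its limits, and the assumption that timestamps arrive in order are all illustrative. In an ML system this count would be one input feature among many rather than a standalone rule.

```python
from collections import deque


class VelocityCheck:
    """Flags a card when it exceeds `max_txns` within `window_seconds`.

    Assumes timestamps (in seconds) arrive in non-decreasing order per card.
    """

    def __init__(self, max_txns=3, window_seconds=60):
        self.max_txns = max_txns
        self.window = window_seconds
        self.history = {}

    def observe(self, card_id, timestamp):
        recent = self.history.setdefault(card_id, deque())
        recent.append(timestamp)
        # Drop transactions that have aged out of the window.
        while recent and timestamp - recent[0] > self.window:
            recent.popleft()
        return len(recent) > self.max_txns
```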

Real use case: One of the largest US-based FinTech payments platforms processing $2B+ annually replaced its legacy rules engine with an ML fraud detection system. False positive rates dropped by 40%, saving an estimated $3.2M annually in manual review costs and customer churn from declined legitimate transactions.

6. Automated Financial Close and Reconciliation

Month-end financial close is one of the most time-consuming, error-prone processes in any finance organization.

Workflow automation handles transaction matching, anomaly detection, variance explanation, and reconciliation across ERP systems, bank feeds, and subsidiary ledgers – compressing close cycles from 10 days to 3. When combined with robotic process automation (RPA) for high-volume transactional tasks, the speed and accuracy gains compound significantly.

Understanding where AI ends and RPA begins matters here – the most effective financial close implementations use both in tandem, with RPA handling rule-based transaction processing and AI handling exception detection and variance analysis.
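The rule-based half of that split can be sketched in a few lines: match ledger entries to bank lines on reference and amount, and hand everything else off as an exception. The record layout (`ref`, `amount`) and the one-cent tolerance are assumptions for illustration; the exception list is where an AI layer for variance analysis would plug in.

```python
from decimal import Decimal


def reconcile(ledger, bank, tolerance=Decimal("0.01")):
    """Match ledger entries to bank lines by reference and amount.

    Returns (matched_refs, exceptions), where each exception is (ref, reason).
    """
    bank_by_ref = {line["ref"]: line for line in bank}
    matched, exceptions = [], []
    for entry in ledger:
        line = bank_by_ref.pop(entry["ref"], None)
        if line is None:
            exceptions.append((entry["ref"], "missing in bank feed"))
        elif abs(entry["amount"] - line["amount"]) > tolerance:
            exceptions.append((entry["ref"], "amount mismatch"))
        else:
            matched.append(entry["ref"])
    # Anything left in the bank feed never appeared in the ledger.
    exceptions.extend((ref, "missing in ledger") for ref in bank_by_ref)
    return matched, exceptions
```

Using `Decimal` rather than floats avoids rounding drift, which matters when the output has to survive an audit.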

Real use case: A PE-backed SaaS company with operations across four business units automated 78% of its reconciliation workflow. The finance team redirected bandwidth toward forecasting and investor reporting rather than manual data validation. For organizations looking specifically at invoice processing and payment workflows, Bitcot’s guide to accounts payable automation covers the end-to-end implementation in detail.

7. Intelligent HR and Talent Operations

From resume screening to onboarding workflows to attrition prediction, AI is restructuring how talent organizations operate.

Attrition prediction models analyze engagement signals, performance patterns, tenure milestones, and compensation benchmarking to flag flight-risk employees before they resign – giving HR and managers a window to act.

Real use case: A healthcare network with 12,000 employees deployed an attrition prediction model that identified high-risk nursing staff with 74% accuracy 90 days before resignation. Proactive retention interventions reduced nursing turnover by 19% in 12 months, saving an estimated $6.8M in recruitment and training costs.

For a deeper look at how AI is reshaping healthcare operations specifically, Bitcot’s analysis of AI automation in healthcare covers the operational and compliance dimensions in detail.

Talent automation and supply chain automation share the same dependency: clean, connected data. That connection matters for the next use case.

8. Supply Chain Demand Forecasting

Legacy supply chain forecasting relies on historical averages and spreadsheet models that cannot process external signals fast enough to be useful.

AI demand forecasting ingests POS data, macroeconomic indicators, social sentiment, weather patterns, and supplier lead time variability to produce probabilistic demand curves – not single-point forecasts.
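The difference between a single-point forecast and a probabilistic one can be shown with a small sketch. It assumes a point forecast already exists and derives a P10/P50/P90 band from past forecast errors using the standard library's `statistics.quantiles`; the function name and the sample error values are hypothetical, and real systems would typically model the distribution directly.

```python
from statistics import quantiles


def demand_interval(point_forecast, past_errors):
    """Turn a point forecast into a P10/P50/P90 band.

    past_errors: historical (actual - forecast) values for comparable periods.
    """
    deciles = quantiles(past_errors, n=10)  # 9 cut points: P10 through P90
    return {
        "p10": point_forecast + deciles[0],
        "p50": point_forecast + deciles[4],
        "p90": point_forecast + deciles[8],
    }


# Illustrative error history, in units sold.
errors = [-30, -20, -10, -5, 0, 5, 10, 20, 30, 40]
band = demand_interval(1000, errors)
```

Planners can then stock against the P90 for items where a stockout is expensive and against the P50 where carrying cost dominates.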

AI-augmented decision-making sits at the center of this. The goal is not just faster forecasting but better judgment: AI surfaces options and confidence intervals for human planners to act on, rather than replacing their judgment entirely. Process mining tools increasingly sit upstream of this, identifying exactly where supply chain workflows break down before any AI model is deployed on top of them.

Real use case: A consumer goods manufacturer reduced inventory carrying costs by 22% and stockout events by 34% after replacing their ERP-native forecasting module with an AI demand planning layer that updated forecasts daily.

9. Automated Compliance Monitoring and Reporting

For organizations operating under SOC 2, HIPAA, GDPR, or financial regulatory frameworks, compliance is a continuous operational burden.

AI compliance systems monitor system logs, access patterns, data flows, and policy adherence in real time – generating audit-ready reports and flagging violations before they become regulatory events. The most mature implementations operate under a defined AI governance framework – a structured set of policies governing how models are trained, monitored, audited, and retired – rather than relying on ad-hoc human oversight of individual model outputs.

Real use case: A health-tech SaaS platform reduced compliance audit preparation time from 6 weeks to under 5 days by deploying an automated compliance monitoring tool that maintained continuous evidence collection across AWS infrastructure.

The same engineering discipline that makes compliance automation reliable is exactly what accelerates developer output – which the next use case addresses directly.

10. AI-Powered Code Review and Developer Productivity

Engineering velocity is a competitive moat. Anything that accelerates it without introducing technical debt compounds over time.

AI code review tools analyze pull requests for security vulnerabilities, logic errors, style inconsistencies, and test coverage gaps – in a fraction of the time a senior engineer’s review requires.

Beyond code review, generative AI integration into development workflows is reducing time-to-feature for experienced engineering teams by 20-35%, according to GitHub’s 2025 Octoverse research. Low-code automation plays a supporting role in this stack as well – enabling non-engineering teams to build and manage their own workflow automations, reducing the dependency on engineering bandwidth for internal tooling requests.

Real use case: A Series B SaaS company integrated AI-assisted code review and documentation generation into their CI/CD pipeline. Senior engineers reported spending 40% less time on review cycles and more time on architecture decisions.

11. Marketing Personalization at Scale

Generic marketing is noise. Personalized marketing drives revenue.

AI personalization engines analyze behavioral data, purchase history, content engagement, and CRM attributes to deliver individualized content, product recommendations, and email sequences – at a scale no human team can replicate manually.

Real use case: A fitness platform with 800,000 active users deployed an AI personalization layer across email, in-app messaging, and push notifications. Engagement rates increased by 47%. Monthly recurring revenue from personalized upsell sequences grew by 22% in two quarters.

Personalization at the marketing layer works best when it feeds into a unified knowledge system internally – which is exactly what the next use case enables.

12. Conversational AI for Internal Knowledge Management

Enterprise knowledge is trapped in Confluence pages, email threads, Slack channels, and the heads of people who might leave next quarter.

RAG-powered (Retrieval-Augmented Generation) internal AI assistants retrieve from indexed company documentation, product specs, SOPs, and institutional knowledge at query time rather than being trained on it. They give employees instant, accurate answers – reducing context-switching, onboarding time, and dependency on tribal knowledge.

Unlike basic chatbots, RAG-powered systems pull from live internal sources, meaning answers stay accurate as documentation evolves. Bitcot’s generative AI integration services cover the full architecture behind building these RAG-enabled co-pilots – including vector database selection, document chunking strategy, and governance for sensitive internal data.
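The retrieval step is the part that keeps answers tied to current documentation. The sketch below illustrates it with bag-of-words cosine similarity as a stand-in for embeddings and a vector database; the function names, the two sample documents, and the prompt wording are all assumptions for the example.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=1):
    """Return the ids of the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(documents[d]))), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Assemble the retrieved context and the question into one model prompt."""
    context = "\n".join(documents[d] for d in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


docs = {
    "pto": "Employees accrue paid time off monthly and request leave in the HR portal.",
    "vpn": "To reset VPN access, open an IT ticket and confirm your device ID.",
}
top = retrieve("How do I reset my VPN access?", docs)
```

Because the documents are looked up at query time, updating a page in the source system changes the next answer without retraining any model.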

Real use case: A 600-person logistics company deployed an internal AI assistant connected to their operations wiki, HR policies, and compliance documentation. New employee ramp time decreased by 38%. Support requests to internal IT and HR dropped by 29%.

13. Automated Sales Intelligence and Lead Scoring

B2B sales cycles are long. Every hour your team spends on unqualified leads is a cost center, not a revenue driver.

AI lead scoring models synthesize CRM data, firmographic signals, behavioral intent data, and historical win/loss patterns to rank leads by conversion probability – allowing sales teams to focus on accounts that are actually ready to buy.
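As a minimal sketch of ranking by conversion probability, the snippet below applies a logistic function to a handful of binary signals. The signal names, weights, and bias are invented for illustration; in practice they would be fit on historical win/loss data.

```python
import math

# Hypothetical weights; a real model would learn these from win/loss history.
WEIGHTS = {
    "employees_over_500": 0.8,
    "visited_pricing_page": 1.2,
    "opened_last_email": 0.4,
}
BIAS = -2.0


def conversion_probability(lead):
    """Logistic score in (0, 1) from the signals present on the lead."""
    z = BIAS + sum(w for name, w in WEIGHTS.items() if lead.get(name))
    return 1.0 / (1.0 + math.exp(-z))


def rank_leads(leads):
    """Order leads from most to least likely to convert."""
    return sorted(leads, key=conversion_probability, reverse=True)
```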

Real use case: A B2B marketplace serving enterprise buyers integrated AI lead scoring into their Salesforce instance. Sales cycle length decreased by 24%. Win rates on accounts in the top two scoring tiers increased by 31%.

14. AI-Driven Quality Assurance in Production Environments

Manual QA cannot scale with modern software delivery cadences. AI-powered QA systems generate test cases, identify regression risks from code diffs, and prioritize test execution based on change impact analysis.

For manufacturers, computer vision QA systems inspect products on the line faster and more consistently than human inspectors – with defect detection rates exceeding 99% in controlled environments.

Real use case: An electronics manufacturer deployed computer vision QA across two production lines, replacing manual visual inspection for circuit board defects. Defect escape rate dropped by 91%. Cost per inspection decreased by 67%.

Quality assurance and infrastructure management are closely linked – when QA catches less, infrastructure absorbs more. That is the problem the next use case directly resolves.

15. AI Operations (AIOps) for Infrastructure Management

Cloud infrastructure that manages itself is no longer aspirational. AIOps platforms correlate telemetry data across logs, metrics, traces, and events to detect anomalies, predict outages, and automate remediation – often before users are impacted. In the most mature implementations, this becomes a network of autonomous AI workflows – systems that self-diagnose, self-heal, and self-optimize with minimal human intervention. For engineering teams evaluating the architectural approach behind this, Bitcot’s work on AI-native product development is relevant context for understanding how infrastructure-level AI differs from feature-level AI.

For organizations running complex distributed systems, AIOps reduces mean time to detect (MTTD) and mean time to resolve (MTTR) dramatically.

Real use case: A SaaS platform processing 50M+ daily transactions deployed AIOps tooling across their AWS infrastructure. MTTD dropped from 47 minutes to under 4 minutes. MTTR decreased by 58%. On-call engineer alert fatigue – a real retention issue – was cut by 60%.

16. AI-Assisted Customer Onboarding and Lifecycle Management

Customer onboarding failure is silent revenue destruction. Customers who do not reach value quickly tend to churn before you have enough usage data to see it coming.

AI onboarding systems personalize the activation journey based on role, company size, use case, and behavioral signals – triggering contextual nudges, in-app guidance, and CSM alerts when customers fall off the activation path.

Real use case: A vertical SaaS company for the healthcare market reduced time-to-value from 45 days to 18 days after deploying an AI-driven onboarding orchestration layer. 90-day retention improved by 26%.

Sixteen use cases. Sixteen areas where competitors are already generating measurable returns. The question that follows naturally is: what does delay actually cost?

The Cost of Waiting Is Not Neutral

“We will revisit AI automation next quarter” is a decision. It is just a decision being made unconsciously.

Every quarter your competitors run AI-augmented operations and you do not, the gap compounds. Their models accumulate training data. Their teams build AI-native workflows. Their infrastructure gets leaner. Their margins expand while yours hold flat.

The concrete costs of delay look like this:

- Manual process overhead: If your operations team is spending 30% of capacity on tasks that AI can handle, that is not a staffing cost – it is an opportunity cost disguised as payroll.

- Revenue leakage: Dynamic pricing, AI-powered upsell sequencing, and lead scoring improvements typically generate 15-30% revenue lift. Every quarter without them is revenue left on the table.

- Infrastructure inefficiency: Organizations without AIOps or cloud automation typically overprovision by 25-40%. That is direct cash burn.

- Competitive disadvantage: AI-native competitors can move faster, personalize deeper, and operate leaner. The longer you wait, the harder it becomes to close that gap.
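The first three items in the list above reduce to simple arithmetic, which the sketch below makes explicit. The function and parameter names are illustrative, and the rates should come from your own baselines; the article's ranges are only a starting point.

```python
def quarterly_cost_of_delay(ops_payroll, automatable_share,
                            revenue, revenue_lift,
                            cloud_spend, overprovision_rate):
    """Estimate one quarter's cost of not automating, in whole dollars."""
    manual_overhead = round(ops_payroll * automatable_share)
    revenue_leakage = round(revenue * revenue_lift)
    infra_waste = round(cloud_spend * overprovision_rate)
    return {
        "manual_overhead": manual_overhead,
        "revenue_leakage": revenue_leakage,
        "infra_waste": infra_waste,
        "total": manual_overhead + revenue_leakage + infra_waste,
    }
```

As a purely hypothetical example, a company with $1,000,000 in quarterly operations payroll, $5,000,000 in quarterly revenue, and $400,000 in quarterly cloud spend, using 30% automatable capacity and the low end of the other two ranges (15% lift, 25% overprovisioning), would estimate $300,000 + $750,000 + $100,000 = $1,150,000 per quarter.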

McKinsey’s 2025 State of AI research is direct on the scaling problem: most companies are still in pilot mode, unable to convert AI experimentation into enterprise-level impact – and American enterprises that delay AI adoption face a compounding productivity gap that grows harder to close with each passing quarter.

The practical response to this is not a 12-month planning cycle. It is identifying one or two use cases where your data is already clean, scoping tightly, and generating a proof point that earns internal momentum for broader investment.

Bitcot’s breakdown of why companies are accelerating AI-based automation adoption in 2026 outlines exactly what that cost curve looks like across mid-market and enterprise segments. PwC’s 2025 US Responsible AI Survey of 310 US business leaders reinforces the urgency: nearly 60% of executives say Responsible AI practices directly boost ROI and operational efficiency.

And according to Gartner, by the end of 2026, 40% of enterprise applications will incorporate task-specific AI agents – up from less than 5% in 2025. The infrastructure window is closing faster than most planning cycles account for.

AI-Ready vs. AI-Hype: What Separates Real ROI from Expensive Experiments

Not every AI initiative delivers results. The difference comes down to one thing: infrastructure discipline before deployment.

Most organizations cannot tell which column they are in until a deployment fails. That is the problem this table is designed to fix.

| Dimension | AI-Hype Implementation | AI-Ready Implementation |
| --- | --- | --- |
| Data foundation | Fragmented, siloed, inconsistent | Unified data lakehouse, pipeline-ready |
| Architecture | Point solutions bolted onto legacy | Modular, API-first, cloud-native |
| Governance | Reactive, post-deployment | AI governance framework built into design |
| Vendor strategy | Single-vendor lock-in | Composite AI with best-of-breed integration |
| ML Ops | None or ad-hoc | Monitoring, retraining, drift detection |
| Security posture | Afterthought | Embedded in data pipeline design |
| ROI measurement | Vague, qualitative | Defined KPIs, measured baselines |
| Team alignment | IT-driven, business-excluded | Cross-functional ownership |

Most failed AI initiatives look like the left column. The organizations achieving 3-5x ROI on AI investment look like the right column. A practical first step is to run this table as an internal audit – score your current state against each row honestly, and the gaps will tell you where architecture investment is most urgent.
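One lightweight way to run that audit is to score each dimension and sort the gaps. The sketch below assumes a 1 to 5 scale (1 matching the AI-hype column, 5 matching the AI-ready column) and a cutoff of 3; both the scale and the `audit` function are illustrative choices, not a formal maturity model.

```python
DIMENSIONS = [
    "Data foundation", "Architecture", "Governance", "Vendor strategy",
    "ML Ops", "Security posture", "ROI measurement", "Team alignment",
]


def audit(scores, threshold=3):
    """Return dimensions scoring below `threshold`, weakest first.

    scores: dimension name -> 1 (AI-hype) through 5 (AI-ready).
    """
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"Unscored dimensions: {missing}")
    gaps = [d for d in DIMENSIONS if scores[d] < threshold]
    # sorted() is stable, so ties keep the table's row order.
    return sorted(gaps, key=lambda d: scores[d])
```

Requiring a score for every row is deliberate: the dimension a team is tempted to skip is often the one with the largest gap.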

If you want a practical look at which platforms enterprise teams across the United States are using to reach the right column, Bitcot’s breakdown of top AI automation tools for Fortune 500 companies maps the current tooling landscape clearly.

The difference is not budget. It is sequencing and architecture-first thinking. If your organization is closer to the left column today, the next section explains what the path forward looks like.

How Bitcot Supports Enterprise AI Automation Implementation

If the blockers outlined in this article – fragmented data, governance gaps, architecture fragility – sound familiar, that is exactly the starting point we work from.

We have seen what happens when organizations rush AI deployment without the right foundation. We have also seen what is possible when implementation is done with strategic rigor.

Bitcot is a trusted AI automation agency working with scaling companies and enterprise modernization teams at the intersection of architecture readiness, AI pipeline design, and operational transformation. Our engagements are not proof-of-concept theater. They are engineered for production, governance, and measurable ROI. You can explore our automation project portfolio and case studies to see how this translates across industries.

“The biggest mistake enterprises make with AI automation isn’t choosing the wrong tool. It’s treating implementation as an IT project instead of a business transformation. The ROI comes from execution, not experimentation.”

– Raj Sanghvi, Founder and CEO, Bitcot

Here is how we approach AI automation implementation:

Discovery and Readiness Assessment

Before we recommend a single automation use case, we audit your data infrastructure, integration landscape, compliance environment, and organizational readiness. The goal is to identify the highest-ROI automation opportunities within your actual current state – not a theoretical future state.

Architecture Validation

AI automation that fails in production almost always has an architecture problem, not a model problem. We validate that your data pipelines, API layer, cloud infrastructure, and security posture can support the automation layer you are building before a line of production code is written.

Senior-Only Engineering Execution

Every engagement at Bitcot is staffed with senior engineers. No junior developers learning on your timeline. No offshore handoffs mid-project. The teams we deploy have built AI automation systems for SaaS platforms, FinTech companies, healthcare networks, and marketplace businesses operating at scale.

Governance and Compliance Integration

For regulated industries, AI governance is not optional. We embed compliance requirements into pipeline design – not as a retrofit after deployment. Whether your constraints are HIPAA, SOC 2, GDPR, or financial regulatory frameworks, governance is part of the architecture conversation from day one.

Speed-to-Value Execution

Enterprise does not mean slow. We structure engagements with 30/60/90-day value milestones so stakeholders see measurable progress against defined KPIs – not just a roadmap that stretches into the horizon.

If your organization is evaluating AI automation investment in 2026, the conversation we recommend starting is not about which AI tool to buy. It is about whether your infrastructure and data foundation are ready to deliver the ROI you are expecting.

The Organizations That Win With AI in 2026 Start With Architecture

AI automation is not a technology problem. It is an architecture and sequencing problem.

The ideas outlined in this article – from intelligent document processing to AIOps to AI-driven onboarding – are all within reach for organizations operating with the right foundation. The gap between organizations extracting real ROI from AI and those producing expensive pilots is not budget. It is the discipline to build infrastructure-first, govern from the start, and sequence use cases against data and organizational readiness.

McKinsey’s finding that only 39% of organizations are generating measurable business impact from AI points to a sequencing and foundation problem, not a technology problem. The path forward is clear: assess your data readiness, identify your highest-ROI use cases, validate your architecture, and build with governance from the start.

The leaders across the United States who will look back on 2026 as the year they pulled ahead are not the ones who waited for the technology to mature further. They are the ones who invested in the right foundation now, deployed the highest-ROI use cases first, and built AI capabilities that compound over time.

Your competitors are making that decision today.

Ready to Build AI Automation That Actually Scales?

If you are evaluating AI automation investment and want a clear picture of where your infrastructure stands, where your highest-ROI opportunities are, and what a realistic implementation roadmap looks like for your organization – we should talk.

Request a Technical Roadmap Audit with Bitcot’s senior architecture team.

No pitch. No generic slides. A structured assessment of your current state and a sequenced path to AI automation ROI.

Frequently Asked Questions (FAQs)

The questions below reflect what enterprise and scaling teams ask us most often – before committing to an AI automation program.

How do we determine which AI automation use cases to prioritize first?

Start where data readiness and business impact intersect – that is where your fastest ROI lives. More specifically, weigh three factors: data readiness, process maturity, and ROI potential. Use cases with clean, structured, accessible data and a well-documented process will deliver faster ROI than those requiring significant data remediation. Process mining tools can accelerate this assessment by surfacing exactly where workflow inefficiencies are concentrated before any AI layer is introduced. Scoring your automation candidates against these factors produces a sequenced roadmap rather than a chaotic parallel launch.

What is a realistic TCO for enterprise AI automation over 3 to 5 years?

TCO varies significantly by scope. For enterprise AI automation initiatives in the $150K-$500K initial investment range, the budget typically has to cover model infrastructure, integration engineering, ML Ops tooling, data governance, and ongoing retraining. Organizations that underestimate ML Ops and governance costs in Year 2 and Year 3 are the ones that encounter budget surprises. A full TCO model should include infrastructure scaling, model maintenance, security monitoring, and team upskilling.

How long does a full AI automation implementation take?

For a focused, well-scoped use case with data readiness in place, initial deployment can happen in 8 to 16 weeks. Enterprise-wide automation programs spanning multiple functions typically operate on 12-to-24-month roadmaps with phased value delivery. Timeline compression is possible with senior teams and clear scope, but compressing architecture validation timelines creates downstream risk that shows up later – usually at the worst time.

How do we avoid vendor lock-in when selecting AI automation platforms?

Architect for portability from the start. This means API abstraction layers between your application logic and AI model providers, avoiding deep integration with proprietary data schemas, and selecting vendors with open standards compatibility. A composable AI stack gives you the ability to swap model providers as the landscape evolves – which it will, and faster than most vendor roadmaps acknowledge.
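An API abstraction layer of this kind can be sketched with a small interface that application code depends on, plus one adapter per provider. `TextModel`, `VendorAClient`, and `VendorBClient` are hypothetical names, and the adapters return placeholder strings where a real implementation would call the provider's SDK.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface application code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class VendorAClient:
    """Hypothetical adapter; a real one would call vendor A's SDK here."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt}"


class VendorBClient:
    """Hypothetical adapter for a second provider."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt}"


def summarize_ticket(model: TextModel, ticket: str) -> str:
    # Application logic sees the interface, never a vendor-specific client.
    return model.complete(f"Summarize: {ticket}")
```

Swapping providers then means writing one new adapter and changing which object is passed in; `summarize_ticket` and everything built on it stay untouched.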

How do we ensure AI automation systems are secure and compliant?

The short answer is: build security and compliance into pipeline design from day one, not after deployment. This includes data lineage tracking, role-based access controls on model inputs and outputs, audit logging, encryption in transit and at rest, and model output monitoring for drift and bias. For regulated industries, compliance validation should occur before production deployment – not as a reactive audit exercise. Governance built in is always cheaper than governance retrofitted.
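Two of the controls named above, role-based access and audit logging, fit naturally in the same code path. The sketch below is a minimal illustration with made-up roles and actions and an in-memory log; a production system would back this with your identity provider and an append-only log store.

```python
from datetime import datetime, timezone

# Hypothetical roles and the model actions each may perform.
ROLE_PERMISSIONS = {
    "analyst": {"read_output"},
    "ml_engineer": {"read_output", "submit_input"},
}
AUDIT_LOG = []


def authorize(user, role, action):
    """Check a role-based permission and record the decision either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    AUDIT_LOG.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "allowed": allowed,
    })
    return allowed
```

Logging denied requests as well as granted ones is the design choice that matters here: auditors usually want evidence that the control blocked something, not just that it exists.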

Can AI automation work alongside our existing internal engineering team?

Yes – and in the most effective implementations, it does. The model that works best for most enterprise teams is a blended approach where internal engineers own long-term product architecture and an external senior team accelerates AI automation design, builds the foundational infrastructure, and transfers knowledge systematically. This avoids the internal team becoming dependent on external vendors while still accelerating delivery timelines significantly.

What is the biggest risk to avoid in enterprise AI automation investment?

Deploying AI on top of a broken data foundation. AI models are only as good as the data they operate on. If your data is fragmented across legacy systems, lacks consistent schema, or has quality gaps, AI automation will amplify those problems – not fix them. Data readiness assessment before deployment is the highest-leverage risk mitigation step available to any organization. Start there, and the rest of the implementation becomes considerably more predictable.